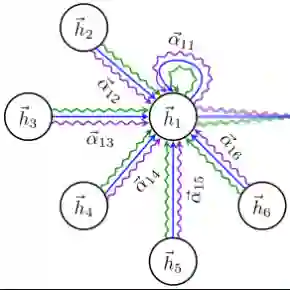

We put forth a principled design of a neural architecture that learns nodal Adjacency Spectral Embeddings (ASE) from graph inputs. Applying the gradient descent (GD) method and the principle of algorithm unrolling, we truncate the GD iterations and reinterpret each one as a layer in a graph neural network (GNN) that is trained to approximate the ASE. Accordingly, we call the resulting embeddings and our parametric model Learned ASE (LASE). The model is interpretable, parameter efficient, robust to inputs with unobserved edges, and offers controllable complexity during inference. LASE layers combine Graph Convolutional Network (GCN) and fully-connected Graph Attention Network (GAT) modules, which is intuitively pleasing since GCN-based local aggregations alone are insufficient to express the sought graph eigenvectors. We propose several refinements to the unrolled LASE architecture (such as sparse attention in the GAT module and decoupled layerwise parameters) that offer favorable tradeoffs between approximation error and computation, even outperforming heavily optimized eigendecomposition routines from scientific computing libraries. Because LASE is differentiable with respect to both its parameters and its graph input, we can seamlessly integrate it as a trainable module within a larger (semi-)supervised graph representation learning pipeline. The resulting end-to-end system effectively learns "discriminative ASEs" that exhibit competitive performance in supervised link prediction and node classification tasks, outperforming a GNN even when the latter is endowed with open-loop (that is, task-agnostic) precomputed spectral positional encodings.
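To make the unrolling idea concrete, here is a minimal NumPy sketch. It assumes, and the abstract does not state this, that the ASE is obtained by minimizing the factorization objective (1/4)·||A − XXᵀ||²_F, whose GD update is X ← X + α(AX − XXᵀX). Under that assumption, the AX term is a local neighbor aggregation (GCN-like), and the XXᵀX term couples every pair of nodes (comparable to fully-connected attention without a softmax). The names `gd_layer`, `unrolled_ase`, and `relative_error`, the fixed scalar step, and the toy rank-2 input are all illustrative choices, not the authors' implementation.

```python
import numpy as np

def gd_layer(A, X, step):
    """One GD step on (1/4)*||A - X X^T||_F^2, read as a single layer.

    Assumed objective; not confirmed by the abstract.
    """
    local_term = A @ X            # aggregation over graph neighbors (GCN-like)
    global_term = X @ (X.T @ X)   # all-pairs interaction X X^T X (attention-like)
    return X + step * (local_term - global_term)

def unrolled_ase(A, X_init, num_layers, step):
    """Stack num_layers GD steps; truncating the depth bounds the compute."""
    X = X_init
    for _ in range(num_layers):
        X = gd_layer(A, X, step)
    return X

def relative_error(A, X):
    """Relative Frobenius error of the reconstruction X X^T against A."""
    return np.linalg.norm(A - X @ X.T) / np.linalg.norm(A)

# Toy input: a noiseless symmetric rank-2 matrix standing in for a graph
# (hypothetical data, chosen so that an exact rank-2 factorization exists).
rng = np.random.default_rng(0)
n, d = 20, 2
Q, _ = np.linalg.qr(rng.standard_normal((n, d)))  # orthonormal columns
A = Q @ np.diag([2.0, 1.0]) @ Q.T
X_init = 0.1 * rng.standard_normal((n, d))        # small random initialization
X_hat = unrolled_ase(A, X_init, num_layers=500, step=0.2)

print("error at init:      ", relative_error(A, X_init))
print("error after unroll: ", relative_error(A, X_hat))
```

In a learned variant, the fixed step would presumably give way to trainable weights, shared or decoupled across layers, and far fewer layers would be used. This sketch only shows the fixed-coefficient iteration that such a network would start from.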
