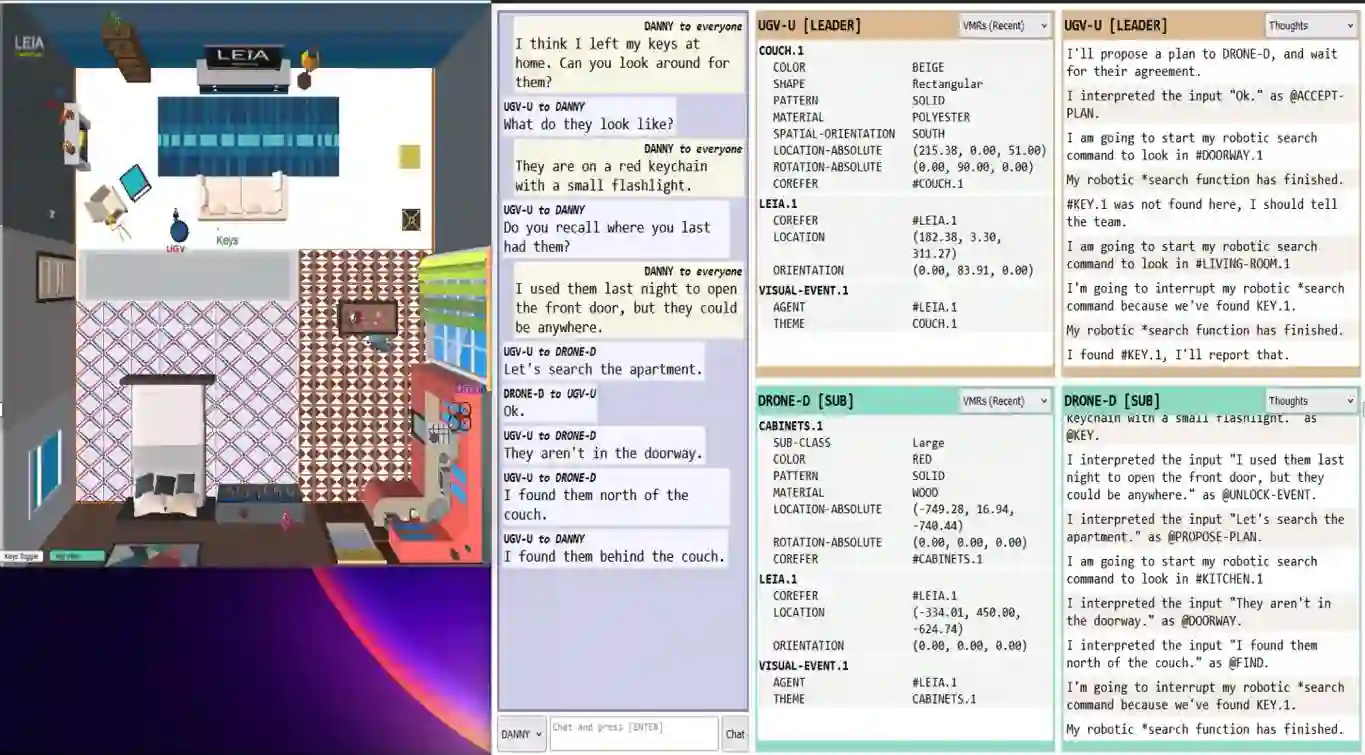

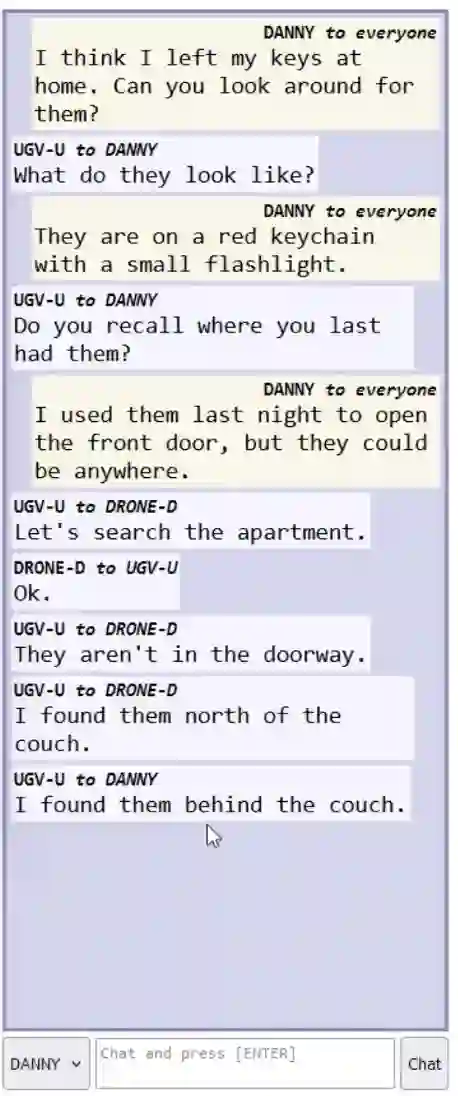

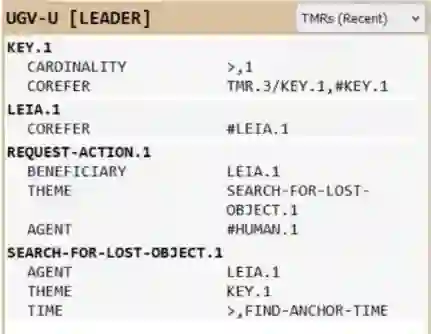

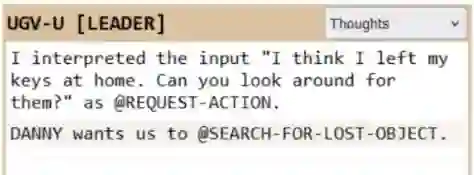

Explanation is key to people having confidence in high-stakes AI systems. However, machine-learning-based systems, which account for almost all current AI, cannot provide explanations because they are usually black boxes. The explainable AI (XAI) movement hedges this problem by redefining "explanation". The human-centered explainable AI (HCXAI) movement identifies the explanation-oriented needs of users but cannot fulfill them because of its commitment to machine learning. To achieve the kinds of explanations needed by real people operating in critical domains, we must rethink how to approach AI. We describe a hybrid approach to developing cognitive agents that uses a knowledge-based infrastructure supplemented by data obtained through machine learning when applicable. These agents will serve as assistants to humans, who will bear ultimate responsibility for the decisions and actions of the human-robot team. We illustrate the explanatory potential of such agents using the under-the-hood panels of a demonstration system in which a team of simulated robots collaborates on a search task assigned by a human.
