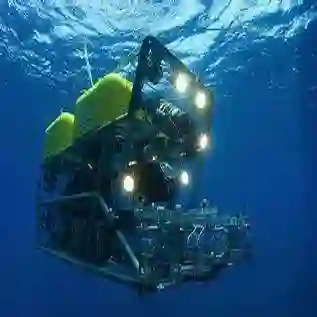

Localization and mapping are core perceptual capabilities for underwater robots. Stereo cameras provide a low-cost means of directly estimating metric depth to support these tasks. However, despite recent advances in stereo depth estimation on land, computing depth from image pairs in underwater scenes remains challenging. In underwater environments, images are degraded by light attenuation, visual artifacts, and dynamic lighting conditions. Furthermore, real-world underwater scenes frequently lack rich texture useful for stereo depth estimation and 3D reconstruction. As a result, stereo estimation networks trained on in-air data cannot transfer directly to the underwater domain. In addition, there is a lack of real-world underwater stereo datasets for supervised training of neural networks. Poor underwater depth estimation is compounded in stereo-based Simultaneous Localization and Mapping (SLAM) algorithms, making it a fundamental challenge for underwater robot perception. To address these challenges, we propose a novel framework that enables sim-to-real training of underwater stereo disparity estimation networks using simulated data and self-supervised finetuning. We leverage our learned depth predictions to develop SurfSLAM, a novel framework for real-time underwater SLAM that fuses stereo cameras with IMU, barometric, and Doppler Velocity Log (DVL) measurements. Lastly, we collect a challenging real-world dataset of shipwreck surveys using an underwater robot. Our dataset features over 24,000 stereo pairs, along with high-quality, dense photogrammetry models and reference trajectories for evaluation. Through extensive experiments, we demonstrate the advantages of the proposed training approach on real-world data for improving stereo estimation in the underwater domain and for enabling accurate trajectory estimation and 3D reconstruction of complex shipwreck sites.
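As a point of reference for how a stereo pair yields metric depth, the sketch below applies the standard rectified-stereo relation depth = f · B / d, where d is the disparity in pixels. It is a minimal illustration under an ideal in-air pinhole model and is not taken from the proposed framework: the function name `disparity_to_depth`, its parameters, and the numeric values are hypothetical, and refraction at an underwater camera housing is ignored.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m, min_disparity_px=1e-6):
    """Convert a rectified-stereo disparity (pixels) to metric depth (metres).

    Assumes an ideal pinhole stereo rig: depth = focal_px * baseline_m / disparity_px.
    Disparities at or below min_disparity_px are treated as infinitely far away.
    """
    if disparity_px <= min_disparity_px:
        return float("inf")
    return focal_px * baseline_m / disparity_px


# Hypothetical rig: 800 px focal length, 12.5 cm baseline, 40 px disparity.
depth_m = disparity_to_depth(disparity_px=40.0, focal_px=800.0, baseline_m=0.125)
print(depth_m)  # 2.5
```

Because depth is inversely proportional to disparity, a fixed disparity error produces a depth error that grows roughly with the square of the range, which is one reason degraded, low-texture underwater imagery is so damaging to downstream mapping.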