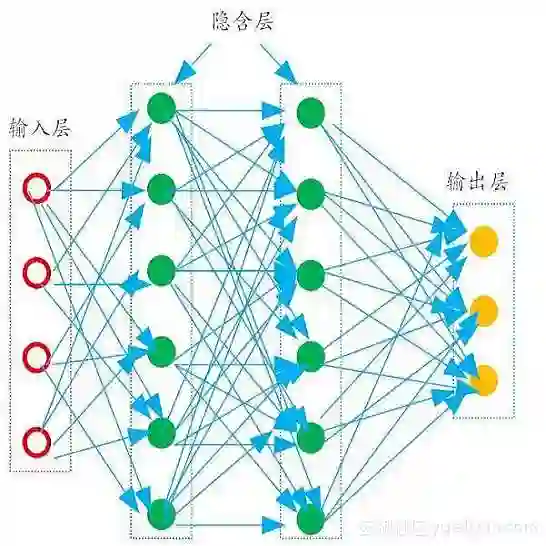

Physics-Informed Neural Networks (PINNs) have emerged as a promising method for approximating solutions to partial differential equations (PDEs) using deep learning. However, PINNs based on multilayer perceptrons (MLPs) typically make point-wise predictions, overlooking implicit dependencies within the physical system, such as temporal or spatial dependencies. Relying on point-wise predictions often results in trivial solutions. These dependencies can be captured by more complex network architectures, for example CNNs or Transformers, but such architectures conventionally do not allow physical constraints to be incorporated, and work on integrating such constraints into them is still lacking. To address this limitation, we propose SetPINNs, a novel approach inspired by Finite Element Methods from the field of numerical analysis. SetPINNs capture the dependencies inherent in the physical system while still incorporating the physical constraints. They accurately approximate the PDE solution over a region rather than at isolated points, thereby modeling the dependencies between multiple neighboring points in that region. Our experiments show that SetPINNs achieve superior generalization performance and accuracy across diverse physical systems, mitigate failure modes, and converge faster than existing approaches. Furthermore, we demonstrate the utility of SetPINNs on two real-world physical systems.
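As a rough illustration of the point-wise setting described above (not the SetPINN method itself), the following Python sketch evaluates a physics residual independently at each collocation point and then groups neighboring points into sets. Everything in it is an assumption made for illustration: the toy ODE u'(t) + u(t) = 0, the central finite difference standing in for the automatic differentiation a PINN would normally use, and the helper names `residual`, `physics_loss`, and `partition_into_sets`.

```python
import math

def residual(u, t, h=1e-4):
    """Point-wise residual of the toy ODE u'(t) + u(t) = 0 (assumed example).
    A central difference stands in for automatic differentiation."""
    du_dt = (u(t + h) - u(t - h)) / (2 * h)
    return du_dt + u(t)

def physics_loss(u, points):
    """Mean squared residual, with every point treated independently."""
    return sum(residual(u, t) ** 2 for t in points) / len(points)

def partition_into_sets(points, set_size):
    """Hypothetical helper: group sorted collocation points into consecutive
    sets of neighbors, loosely analogous to elements in a mesh."""
    pts = sorted(points)
    return [pts[i:i + set_size] for i in range(0, len(pts), set_size)]

points = [i / 10 for i in range(12)]   # 12 collocation points on [0, 1.1]

exact = lambda t: math.exp(-t)   # satisfies the ODE and u(0) = 1
trivial = lambda t: 0.0          # satisfies the ODE but ignores u(0) = 1
wrong = lambda t: 1.0 - t        # does not satisfy the ODE

# The residual loss alone cannot tell the exact and trivial candidates apart.
loss_exact = physics_loss(exact, points)
loss_trivial = physics_loss(trivial, points)

# Grouping neighbors gives one loss value per region instead of per point.
sets = partition_into_sets(points, 4)
per_set_loss = [physics_loss(wrong, s) for s in sets]
```

In this toy case the zero function drives the residual loss to zero just as the exact solution does, which is one simple way a trivial solution can arise when only the physics residual is minimized. The per-set losses only show how neighboring points can be treated as a unit; how SetPINNs actually model dependencies within a set is not specified in this abstract.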
