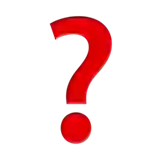

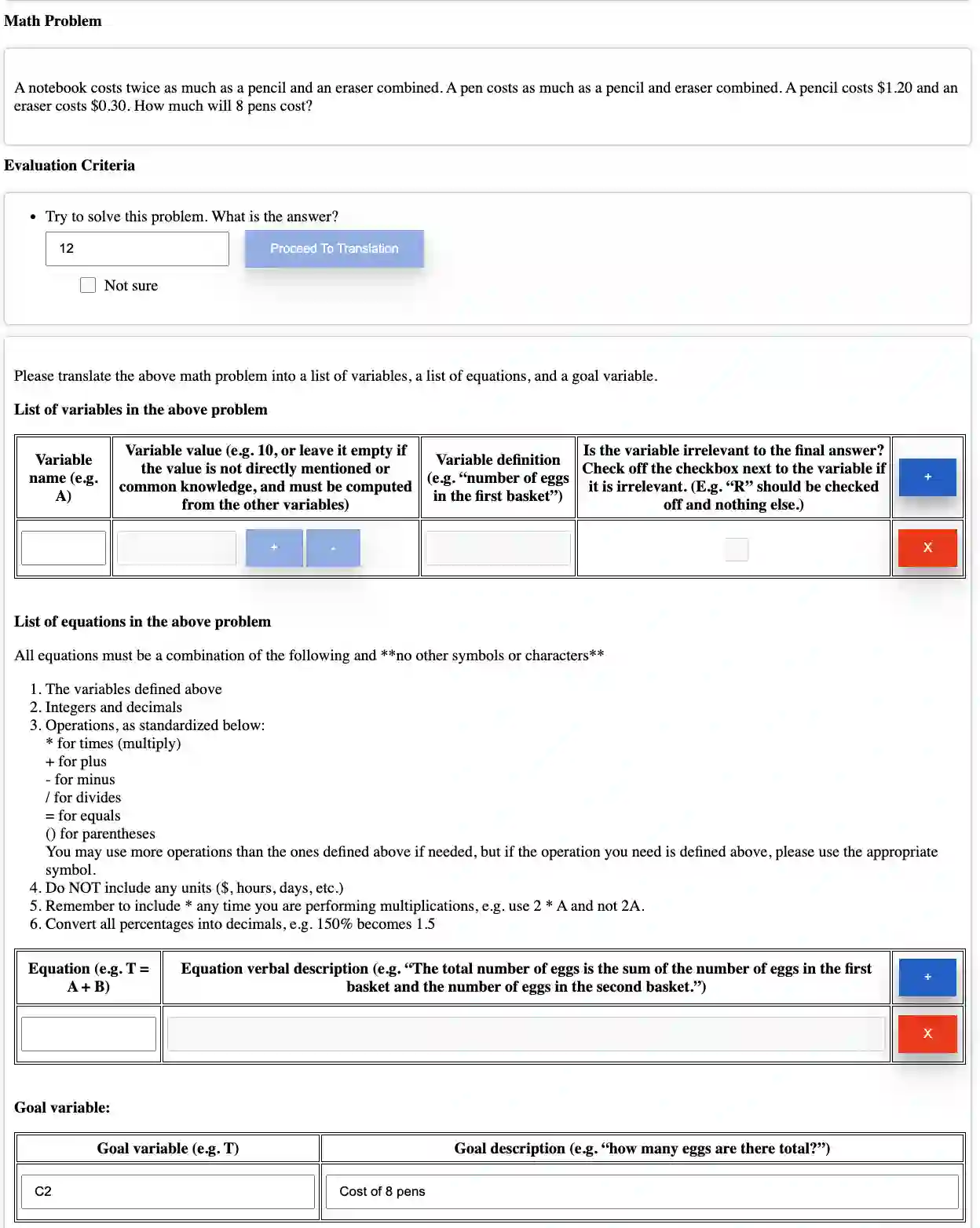

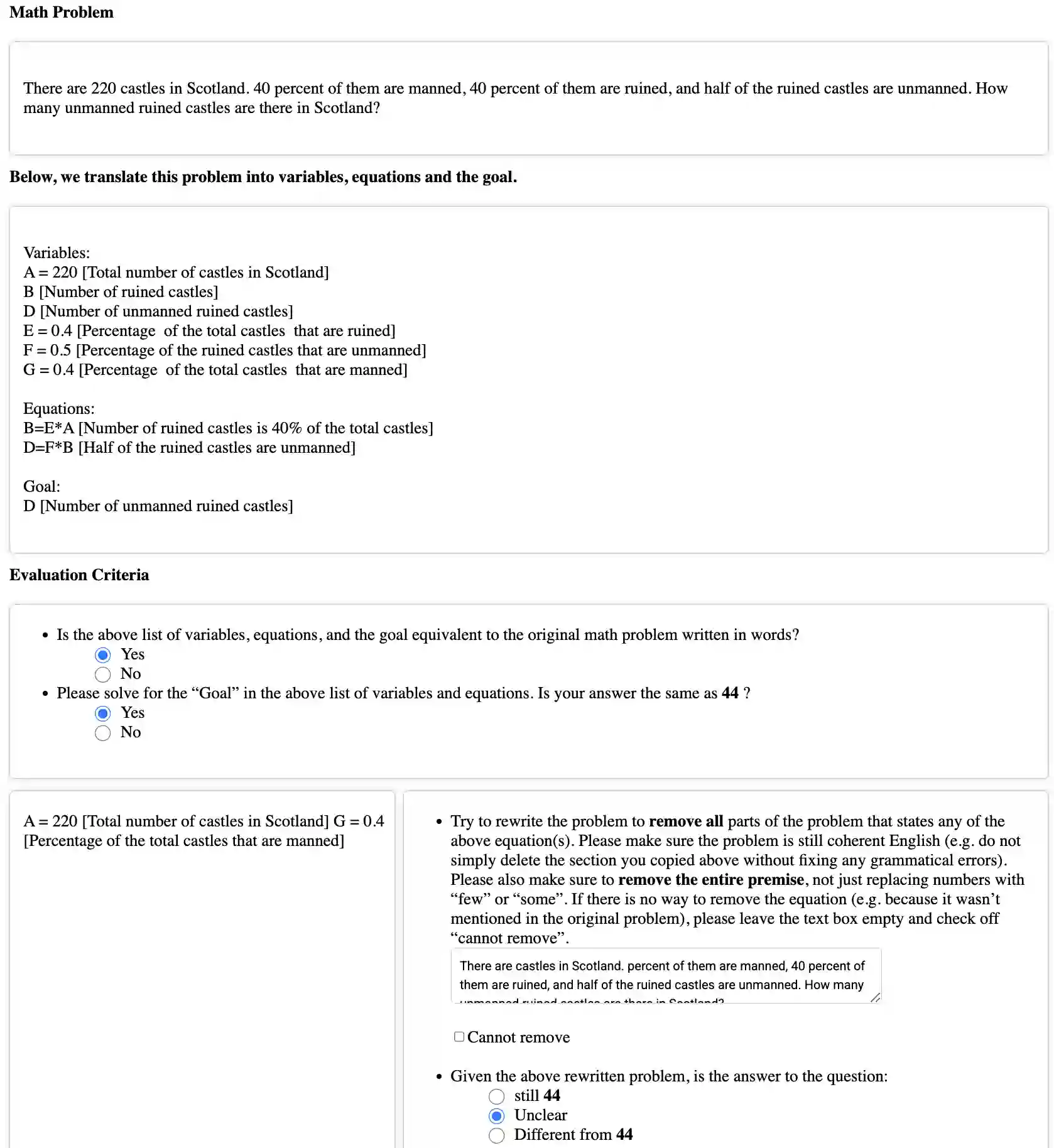

Much recent work has focused on improving the performance of large language models (LLMs) on reasoning benchmarks in areas such as math and logic. However, past work has largely assumed that tasks are well-defined. In the real world, queries to LLMs are often underspecified and can be solved only by acquiring missing information. We formalize this as a constraint satisfaction problem (CSP) with missing variable assignments. Using a special case of this formalism in which only one necessary variable assignment is missing, we can rigorously evaluate an LLM's ability to identify the minimal necessary question to ask and quantify axes of difficulty for each problem. We present QuestBench, a set of underspecified reasoning tasks solvable by asking at most one question, which includes: (1) Logic-Q: logical reasoning tasks with one missing proposition, (2) Planning-Q: PDDL planning problems with partially observed initial states, (3) GSM-Q: human-annotated grade school math problems with one missing variable assignment, and (4) GSME-Q: a version of GSM-Q in which word problems are translated into equations by human annotators. The LLM is tasked with selecting the correct clarification question(s) from a list of options. While state-of-the-art models excel at GSM-Q and GSME-Q, their accuracy is only 40-50% on Logic-Q and Planning-Q. Our analysis demonstrates that the ability to solve well-specified reasoning problems may not be sufficient for success on our benchmark: models have difficulty identifying the right question to ask, even when they can solve the fully specified version of the problem. Furthermore, in the Planning-Q domain, LLMs tend not to hedge, even when explicitly presented with the option to predict "not sure." This highlights the need for deeper investigation into models' information acquisition capabilities.
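To make the one-missing-assignment setting concrete, here is a minimal Python sketch of how one might check which clarification questions are sufficient. It is not QuestBench's implementation: the rule representation, the function names `derivable` and `sufficient_questions`, and the toy pricing problem are all assumptions made for illustration. Each rule only records which inputs determine a variable, so the sketch tracks whether a value can be determined rather than computing it.

```python
from typing import Dict, FrozenSet, List, Set

# Hypothetical toy representation (an assumption, not the benchmark's format):
# rules[var] lists alternative input sets; var is determined once every
# variable in at least one of its input sets is known.
Rules = Dict[str, List[FrozenSet[str]]]


def derivable(known: Set[str], rules: Rules) -> Set[str]:
    """Forward-chain to a fixed point and return all determinable variables."""
    closed = set(known)
    changed = True
    while changed:
        changed = False
        for var, input_sets in rules.items():
            if var in closed:
                continue
            if any(inputs <= closed for inputs in input_sets):
                closed.add(var)
                changed = True
    return closed


def sufficient_questions(
    known: Set[str], rules: Rules, target: str, candidates: List[str]
) -> List[str]:
    """Return the candidates whose value alone would make the target determinable."""
    if target in derivable(known, rules):
        return []  # already well-specified, so no question is needed
    return [
        c
        for c in candidates
        if c not in known and target in derivable(known | {c}, rules)
    ]


# Toy problem: total = price * quantity, price = cost + markup.
rules: Rules = {
    "price": [frozenset({"cost", "markup"})],
    "total": [frozenset({"price", "quantity"})],
}
known = {"cost", "quantity"}

print(sufficient_questions(known, rules, "total", ["markup", "price", "color"]))
```

In this toy problem, asking for either `markup` or `price` is enough to determine `total`, while asking for `color` is not. This mirrors the task format described above, where the model must pick the correct clarification question(s) from a list of options.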
