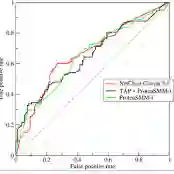

Large language models (LLMs) have made it remarkably easy to synthesize plausible source code from natural language prompts. While this accelerates software development and supports learning, it also raises new risks for academic integrity, authorship attribution, and responsible AI use. This paper investigates the problem of distinguishing human-written from machine-generated code by comparing two complementary approaches: feature-based detectors built from lightweight, interpretable stylometric and structural properties of code, and embedding-based detectors leveraging pretrained code encoders. Using a recent large-scale benchmark dataset of 600k human-written and AI-generated code samples, we find that feature-based models achieve strong performance (ROC-AUC 0.995, PR-AUC 0.995, F1 0.971), while embedding-based models with CodeBERT embeddings are also very competitive (ROC-AUC 0.994, PR-AUC 0.994, F1 0.965). Analysis shows that features tied to indentation and whitespace provide particularly discriminative cues, whereas embeddings capture deeper semantic patterns and yield slightly higher precision. These findings underscore the trade-offs between interpretability and generalization, offering practical guidance for deploying robust code-origin detection in academic and industrial contexts.
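To make the notion of indentation and whitespace features concrete, the following is a minimal sketch of how such stylometric signals might be computed for a single code sample. The function name `whitespace_features` and the specific feature names are hypothetical and chosen for illustration only; they are not the paper's actual feature set, which is not specified in this abstract.

```python
from statistics import mean, pstdev


def whitespace_features(source: str) -> dict[str, float]:
    """Compute a few illustrative indentation/whitespace features.

    Assumption: these features are examples only, not the paper's feature set.
    """
    lines = source.splitlines()
    non_blank = [ln for ln in lines if ln.strip()]

    # Width of leading whitespace per non-blank line (a tab counts as one character).
    indents = [len(ln) - len(ln.lstrip(" \t")) for ln in non_blank]

    n_lines = len(lines)
    n_non_blank = len(non_blank)
    n_chars = len(source)

    return {
        # Share of lines that are empty or whitespace-only.
        "blank_line_ratio": (n_lines - n_non_blank) / n_lines if n_lines else 0.0,
        # Average and spread of indentation depth.
        "mean_indent": float(mean(indents)) if indents else 0.0,
        "indent_std": float(pstdev(indents)) if indents else 0.0,
        # Share of non-blank lines indented with a tab.
        "tab_indent_ratio": (
            sum(ln.startswith("\t") for ln in non_blank) / n_non_blank
            if n_non_blank else 0.0
        ),
        # Share of non-blank lines that end in trailing whitespace.
        "trailing_ws_ratio": (
            sum(ln != ln.rstrip() for ln in non_blank) / n_non_blank
            if n_non_blank else 0.0
        ),
        # Share of all characters that are whitespace.
        "whitespace_char_ratio": (
            sum(ch.isspace() for ch in source) / n_chars if n_chars else 0.0
        ),
    }
```

A feature vector of this kind could then be passed to any standard tabular classifier. Because each entry has a direct reading (for example, how consistently a sample is indented), the resulting model remains inspectable, which is the interpretability advantage the abstract attributes to the feature-based approach.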
