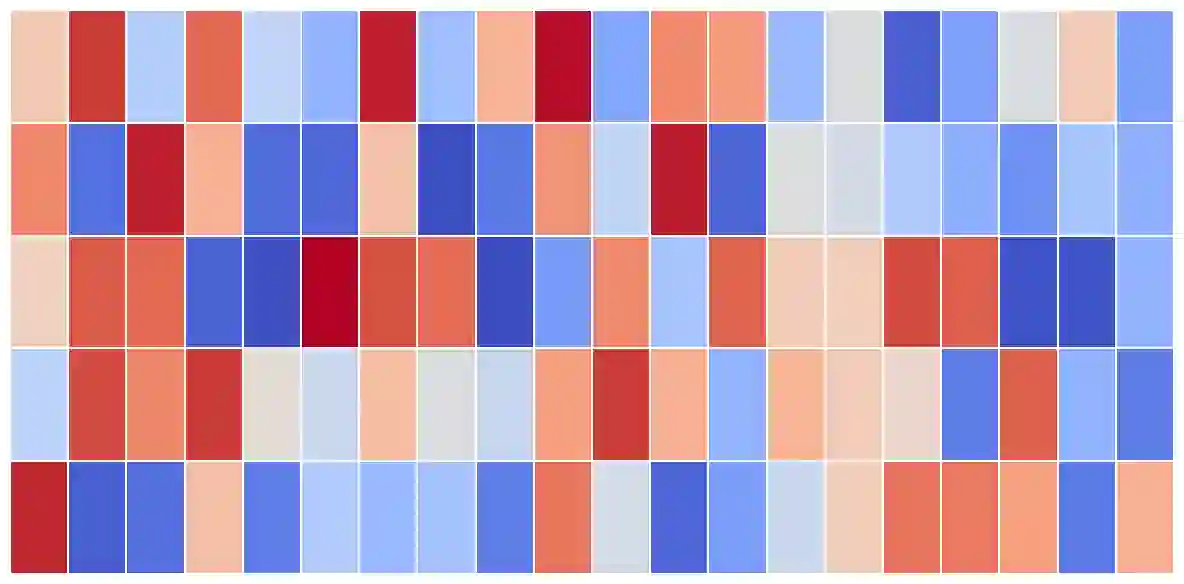

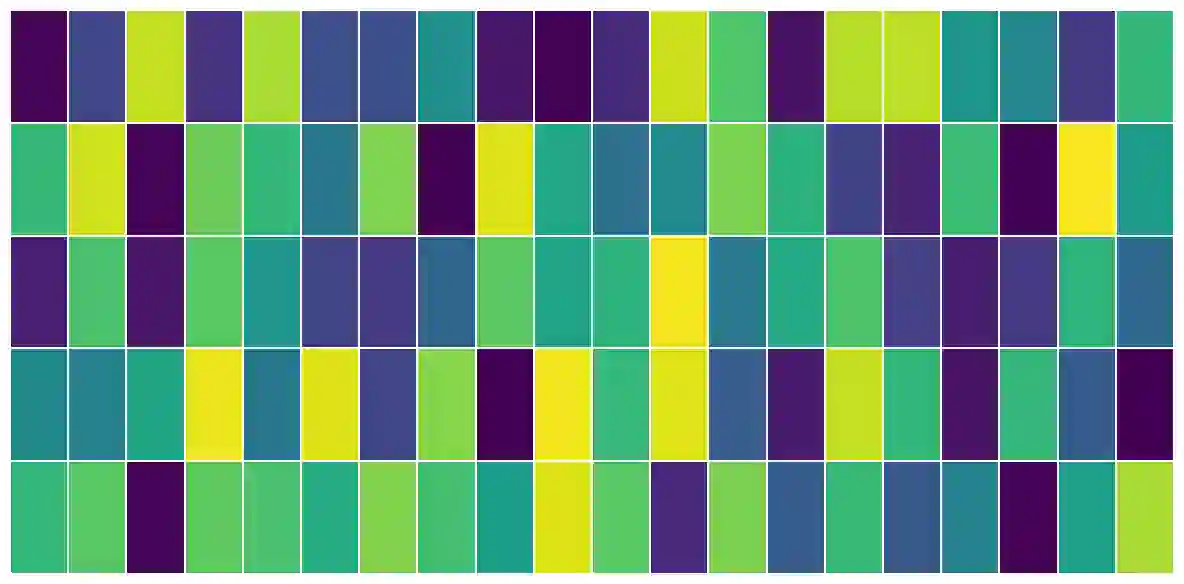

Large language models (LLMs) are widely deployed, but they can inadvertently encode sensitive or harmful information, raising significant safety concerns. Machine unlearning has emerged to mitigate this risk; however, existing training-time unlearning approaches, which rely on coarse-grained loss combinations, are limited in how precisely they separate knowledge and in how well they balance removal effectiveness against model utility. In contrast, we propose Fine-grained Activation manipuLation by Contrastive Orthogonal uNalignment (FALCON), a novel representation-guided unlearning approach that uses information-theoretic guidance for efficient parameter selection, employs contrastive mechanisms to enhance representation separation, and projects conflicting gradients onto orthogonal subspaces to resolve conflicts between forgetting and retention objectives. Extensive experiments demonstrate that FALCON achieves superior unlearning effectiveness while maintaining model utility and exhibits robust resistance against knowledge recovery attempts.
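To make the gradient-projection idea concrete, the following is a minimal sketch of one common way to resolve a conflict between two objectives: when the forgetting gradient has a negative inner product with the retention gradient, its component along the retention gradient is removed. This is an illustration under assumptions, not necessarily FALCON's exact procedure; the names `project_conflicting`, `g_forget`, and `g_retain` are hypothetical, and gradients are represented as flat Python lists.

```python
from typing import List


def dot(a: List[float], b: List[float]) -> float:
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def project_conflicting(
    g_forget: List[float], g_retain: List[float], eps: float = 1e-12
) -> List[float]:
    """Return g_forget with its conflicting component removed.

    Hypothetical helper: if the two gradients conflict (negative inner
    product), project g_forget onto the subspace orthogonal to g_retain.
    Otherwise return g_forget unchanged.
    """
    inner = dot(g_forget, g_retain)
    if inner >= 0.0:
        # No conflict: the forgetting step does not oppose retention.
        return list(g_forget)
    # Coefficient of the component of g_forget along g_retain.
    scale = inner / (dot(g_retain, g_retain) + eps)
    return [gf - scale * gr for gf, gr in zip(g_forget, g_retain)]


# Toy example: the forgetting gradient opposes the retention gradient
# along the second coordinate.
g_forget = [1.0, -2.0]
g_retain = [0.0, 1.0]
projected = project_conflicting(g_forget, g_retain)
```

After projection, the forgetting update is (numerically) orthogonal to the retention gradient, so to first order it no longer increases the retention loss, while the non-conflicting component of the forgetting gradient is preserved.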
