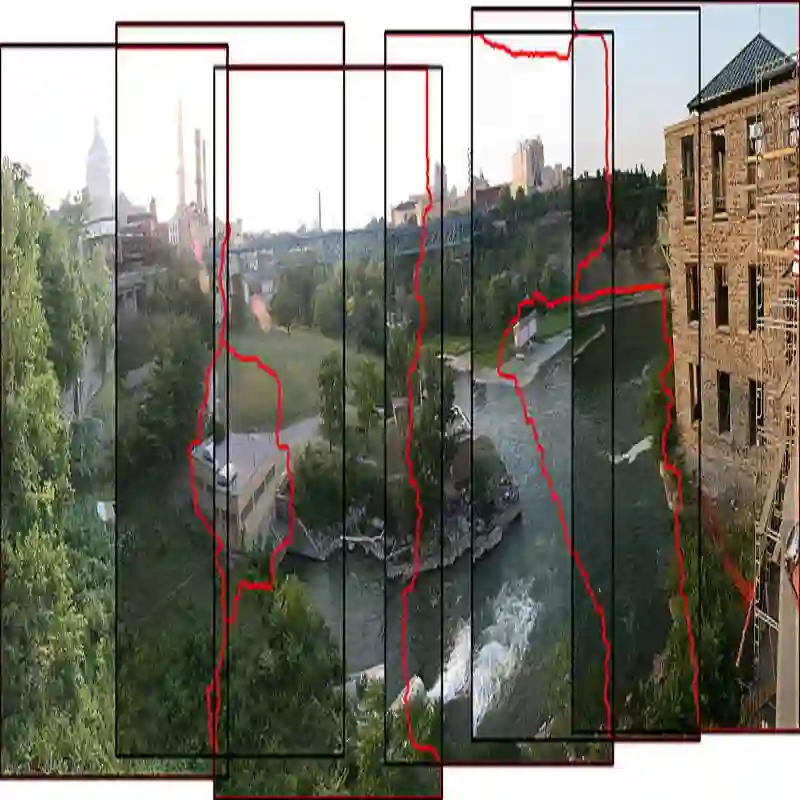

Challenging to capture and challenging to display on a cellphone screen, the panorama paradoxically remains both a staple and an underused feature of modern mobile camera applications. In this work we address both challenges with a spherical neural light field model for implicit panoramic image stitching and re-rendering, one able to accommodate depth parallax, view-dependent lighting, and local scene motion and color changes during capture. Fit at test time to a panoramic video capture along an arbitrary path (vertical, horizontal, or random-walk), these neural light spheres jointly estimate the camera path and a high-resolution scene reconstruction to produce novel wide field-of-view projections of the environment. Our single-layer model avoids expensive volumetric sampling and decomposes the scene into compact view-dependent ray offset and color components, with a total model size of 80 MB per scene and real-time (50 FPS) rendering at 1080p resolution. We demonstrate improved reconstruction quality over traditional image stitching and radiance field methods, with significantly higher tolerance to scene motion and non-ideal capture settings.
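To give some intuition for why a single spherical layer can avoid volumetric sampling, the sketch below intersects one camera ray with a unit sphere and maps the hit point to equirectangular image coordinates. It is only an illustration under stated assumptions, not the model described above: the function names `intersect_unit_sphere` and `sphere_to_equirect`, the unit radius, and the equirectangular layout are all hypothetical choices, and the learned view-dependent ray offset and color components are omitted entirely.

```python
import math


def intersect_unit_sphere(origin, direction):
    """Return the point where a ray starting inside the unit sphere exits it.

    Hypothetical helper for illustration: one closed-form intersection per
    ray, instead of many samples along the ray as in volumetric rendering.
    """
    ox, oy, oz = origin
    dx, dy, dz = direction
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    if norm == 0.0:
        raise ValueError("direction must be non-zero")
    dx, dy, dz = dx / norm, dy / norm, dz / norm

    # Solve |o + t d|^2 = 1 for t, with |d| = 1: t^2 + 2 b t + c = 0.
    b = ox * dx + oy * dy + oz * dz
    c = ox * ox + oy * oy + oz * oz - 1.0
    if c >= 0.0:
        raise ValueError("origin must lie strictly inside the unit sphere")
    t = -b + math.sqrt(b * b - c)  # the positive root is the exit point
    return (ox + t * dx, oy + t * dy, oz + t * dz)


def sphere_to_equirect(point, width, height):
    """Map a point on the unit sphere to (u, v) equirectangular coordinates."""
    x, y, z = point
    lon = math.atan2(x, z)                    # longitude in [-pi, pi]
    lat = math.asin(max(-1.0, min(1.0, y)))   # latitude in [-pi/2, pi/2]
    u = (lon / (2.0 * math.pi) + 0.5) * width
    v = (0.5 - lat / math.pi) * height
    return u, v


# A ray from the sphere center looking along +z lands at the image center.
center_hit = intersect_unit_sphere((0.0, 0.0, 0.0), (0.0, 0.0, 1.0))
center_uv = sphere_to_equirect(center_hit, 2048, 1024)

# The same viewing direction from a shifted camera position hits a different
# point on the sphere, which is the parallax a fitted model has to absorb.
shifted_hit = intersect_unit_sphere((0.1, 0.0, 0.0), (0.0, 0.0, 1.0))
shifted_uv = sphere_to_equirect(shifted_hit, 2048, 1024)
```

In this toy setup each pixel costs a single intersection and a single lookup, which is the property that makes real-time rendering plausible for a single-layer representation. How the actual model perturbs rays and predicts color is not specified here and would replace the plain lookup.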
