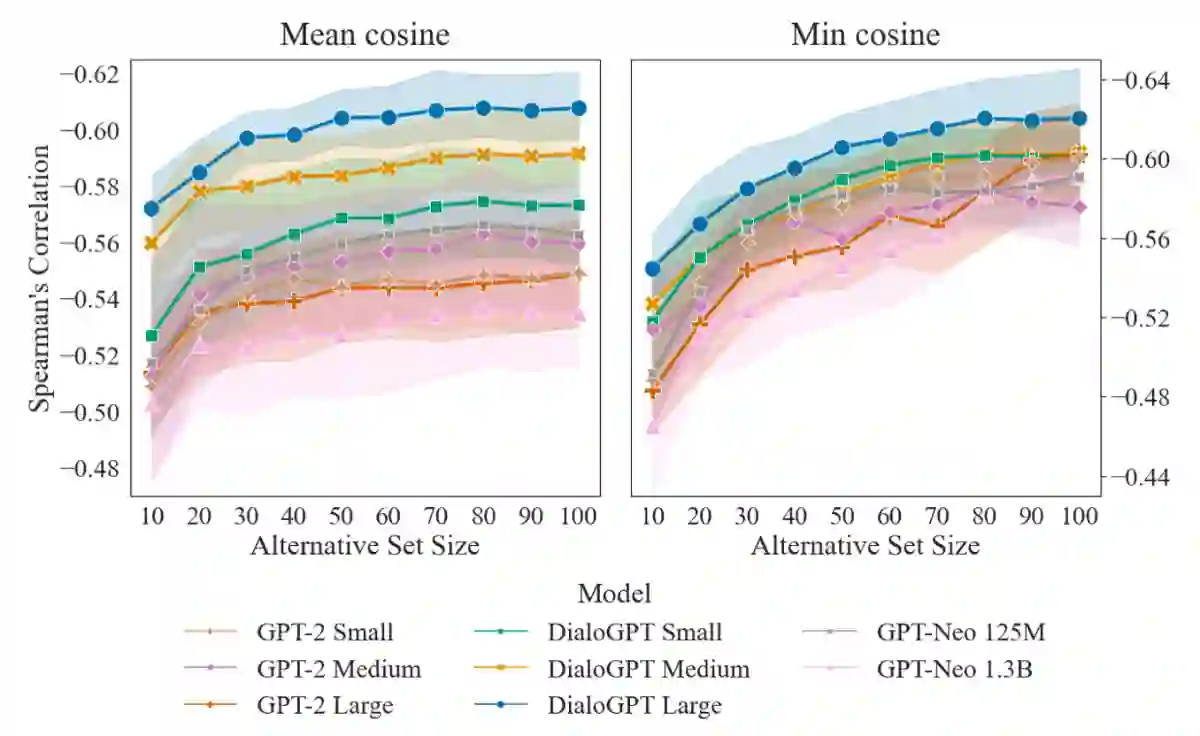

Existing benchmarks for evaluating foundation models focus mainly on single-document, text-only tasks. As a result, they often fail to capture the complexity of research workflows, which typically involve interpreting non-textual data and gathering information across multiple documents. To address this gap, we introduce M3SciQA, a multi-modal, multi-document scientific question answering benchmark designed for a more comprehensive evaluation of foundation models. M3SciQA consists of 1,452 expert-annotated questions spanning 70 natural language processing paper clusters, where each cluster represents a primary paper along with all of its cited documents. This design mirrors the workflow of comprehending a single paper, which requires both multi-modal and multi-document data. Using M3SciQA, we conduct a comprehensive evaluation of 18 foundation models. Our results indicate that current foundation models still significantly underperform human experts in multi-modal information retrieval and in reasoning across multiple scientific documents. Additionally, we explore the implications of these findings for the future application of foundation models to multi-modal scientific literature analysis.
