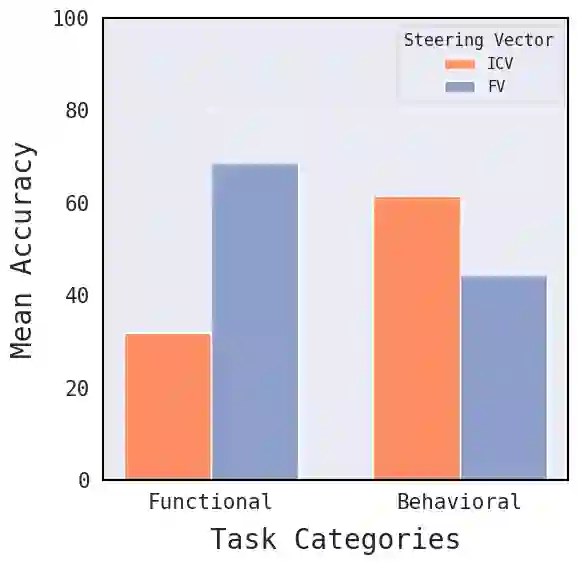

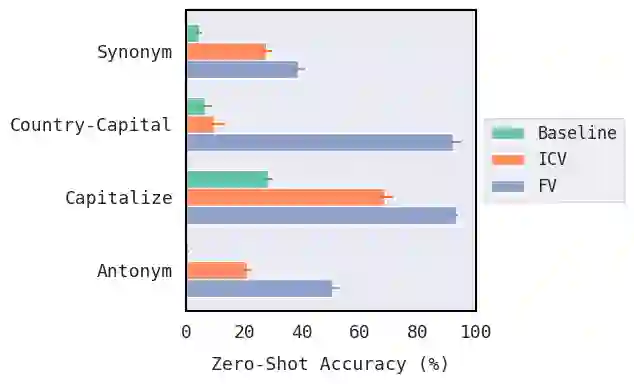

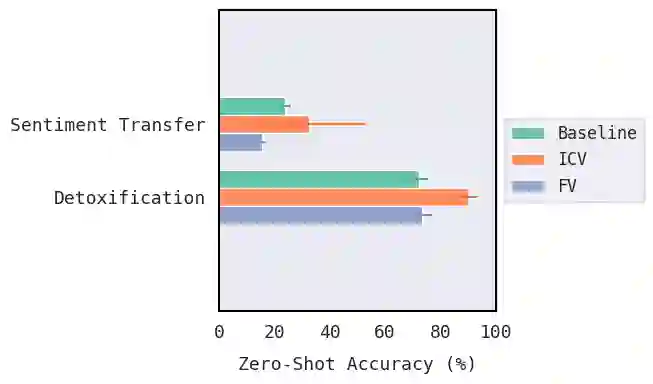

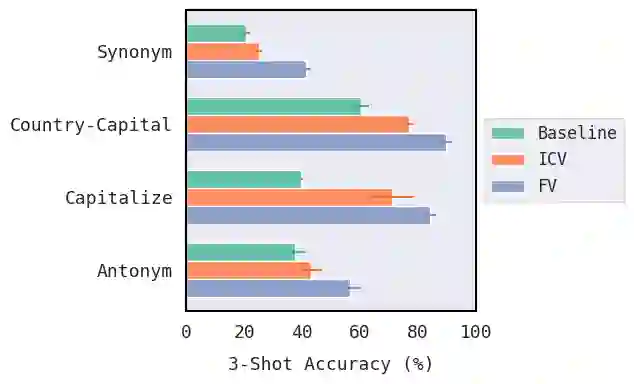

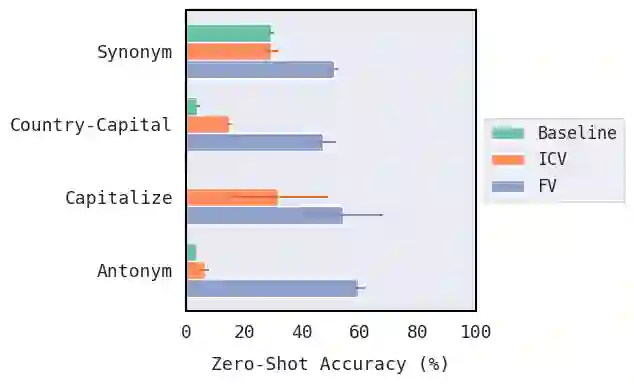

A key objective of interpretability research on large language models (LLMs) is to develop methods for robustly steering models toward desired behaviors. To this end, two distinct approaches to interpretability, "bottom-up" and "top-down", have been proposed, but there has been little quantitative comparison between them. We present a case study comparing the effectiveness of a representative vector steering method from each branch: function vectors (FV; arXiv:2310.15213) as a bottom-up method, and in-context vectors (ICV; arXiv:2311.06668) as a top-down method. While both aim to capture compact representations of broad in-context learning tasks, we find that each is effective only on specific types of tasks: ICVs outperform FVs at behavioral shifting, whereas FVs excel at tasks requiring greater precision. Given these findings, we discuss the implications for future evaluations of steering methods and for further research into top-down and bottom-up steering.
