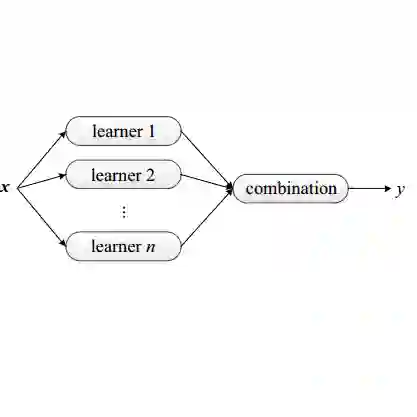

In this paper, we present our solution to the Cross-View Isolated Sign Language Recognition (CV-ISLR) challenge held at WWW 2025. CV-ISLR addresses a critical limitation of traditional Isolated Sign Language Recognition (ISLR): existing datasets predominantly capture sign language videos from a frontal perspective, whereas real-world camera angles vary. Accurately recognizing signs across viewpoints requires models that understand gestures seen from multiple angles, which makes cross-view recognition challenging. To address this, we explore the advantages of ensemble learning, which enhances model robustness and generalization across diverse views. Our approach, built on a multi-dimensional Video Swin Transformer model, leverages this ensemble strategy to achieve competitive performance. Our solution ranked 3rd in both the RGB-based ISLR and RGB-D-based ISLR tracks, demonstrating its effectiveness in handling the challenges of cross-view recognition. The code is available at: https://github.com/Jiafei127/CV_ISLR_WWW2025.
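The abstract does not state how the ensemble members are combined. One common choice, assumed here purely for illustration, is score-level fusion: each model (for example, Video Swin Transformers trained on different views or on RGB versus depth input) produces class logits for the same sign video, the logits are turned into probabilities, and a weighted average picks the final label. The function names `softmax` and `ensemble_predict`, and the example weights, are hypothetical and are not taken from the released code.

```python
from __future__ import annotations

import math
from typing import Sequence


def softmax(logits: Sequence[float]) -> list[float]:
    """Numerically stable softmax over one vector of class logits."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def ensemble_predict(
    model_logits: Sequence[Sequence[float]],
    weights: Sequence[float] | None = None,
) -> tuple[int, list[float]]:
    """Hypothetical score-level ensemble: weighted mean of per-model softmax scores.

    model_logits[i] holds the class logits that model i produced for the same
    video. This is an illustrative fusion rule, not the authors' confirmed method.
    """
    if not model_logits:
        raise ValueError("need at least one model")
    num_classes = len(model_logits[0])
    if any(len(logits) != num_classes for logits in model_logits):
        raise ValueError("all models must score the same set of classes")
    if weights is None:
        weights = [1.0] * len(model_logits)  # plain averaging by default
    if len(weights) != len(model_logits):
        raise ValueError("expected one weight per model")
    total_w = sum(weights)
    if total_w <= 0:
        raise ValueError("weights must sum to a positive value")

    # Accumulate normalized, weighted probabilities class by class.
    fused = [0.0] * num_classes
    for w, logits in zip(weights, model_logits):
        for c, p in enumerate(softmax(logits)):
            fused[c] += w * p / total_w

    best = max(range(num_classes), key=fused.__getitem__)
    return best, fused


if __name__ == "__main__":
    rgb_logits = [2.0, 0.5, -1.0]    # illustrative values, not real model outputs
    depth_logits = [0.0, 1.5, -0.5]
    label, probs = ensemble_predict([rgb_logits, depth_logits], weights=[0.6, 0.4])
    print(label, [round(p, 3) for p in probs])
```

Averaging probabilities rather than raw logits keeps models whose logits live on different scales comparable, and the per-model weights give a simple knob for trusting one view or modality more than another.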
