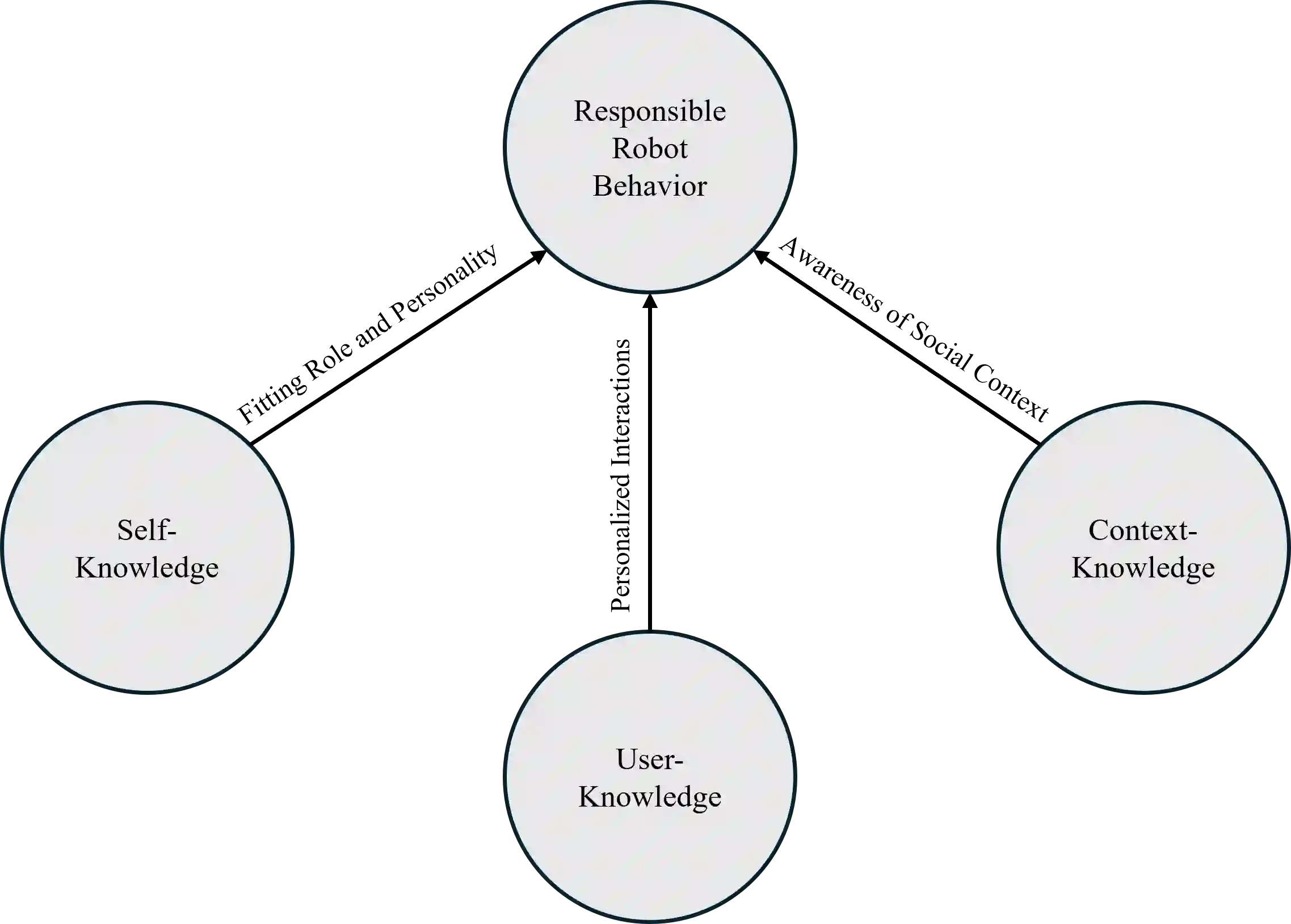

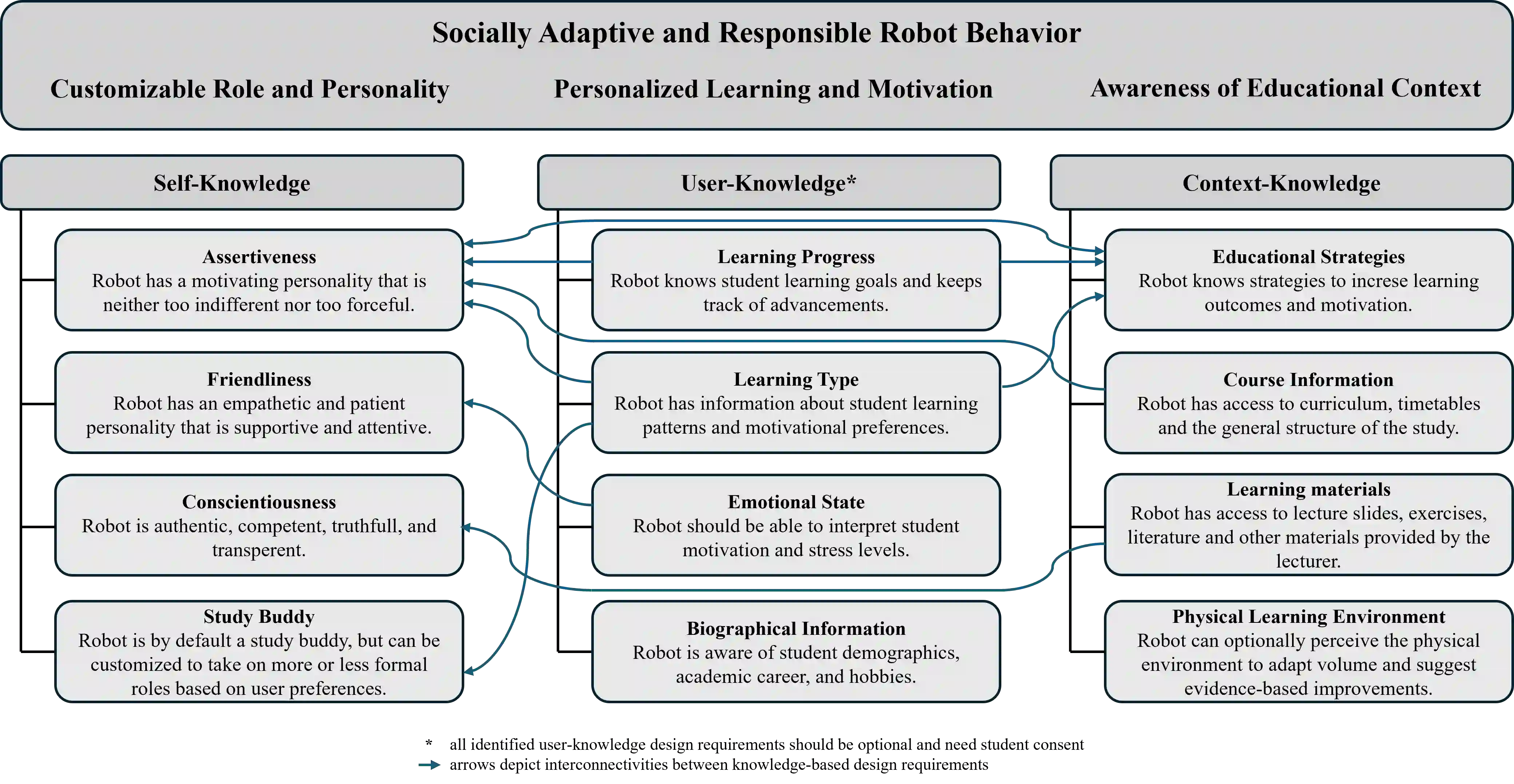

Generative social robots (GSRs) powered by large language models enable adaptive, conversational tutoring but also introduce risks such as hallucinations, overreliance, and privacy violations. Existing frameworks for educational technologies and responsible AI primarily define desired behaviors, yet they rarely specify the knowledge prerequisites that generative systems need in order to exhibit these behaviors reliably. To address this gap, we adopt a knowledge-based design perspective and investigate what information tutoring-oriented GSRs require to function responsibly and effectively in higher education. Based on twelve semi-structured interviews with university students and lecturers, we identify twelve design requirements across three knowledge types: self-knowledge (an assertive, conscientious, and friendly personality with a customizable role), user-knowledge (personalized information about students' learning goals, learning progress, motivation type, emotional state, and background), and context-knowledge (learning materials, educational strategies, course-related information, and the physical learning environment). By identifying these knowledge requirements, this work provides a structured foundation for the design and future evaluation of tutoring GSRs, aligning generative system capabilities with pedagogical and ethical expectations.
