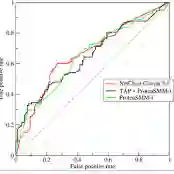

Distinguishing fake news from satire or humor is challenging because the two share many linguistic features while differing in intent. This study develops WISE (Web Information Satire and Fakeness Evaluation), a framework that benchmarks eight lightweight transformer models alongside two baseline models on a balanced dataset of 20,000 Fakeddit samples, each labeled as either fake news or satire. Using stratified 5-fold cross-validation, we evaluate the models on accuracy, precision, recall, F1-score, ROC-AUC, PR-AUC, Matthews correlation coefficient (MCC), Brier score, and Expected Calibration Error (ECE). The lightweight MiniLM achieves the highest accuracy of all models (87.58%), while RoBERTa-base achieves the highest ROC-AUC (95.42%) with similarly strong accuracy (87.36%). DistilBERT offers a favorable efficiency-accuracy trade-off, with 86.28% accuracy and 93.90% ROC-AUC. Paired t-tests and McNemar tests confirm statistically significant performance differences between models. Our findings show that lightweight models can match or exceed baseline performance, offering actionable guidance for deploying misinformation detection systems in real-world, resource-constrained settings.
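The abstract names its evaluation protocol without defining the individual quantities. As an illustration only, the Python sketch below shows one common way to compute several of them for a binary fake-vs-satire classifier: a stratified k-fold split, the Brier score, Expected Calibration Error, MCC, and McNemar's test. It is not the WISE code. The function names, the 0.5 decision threshold, the 10 equal-width confidence bins for ECE, the encoding of one class as label 1, the round-robin fold assignment, and the continuity-corrected form of McNemar's test are all assumptions.

```python
# Illustrative sketch only: names, threshold, bin count, label encoding and
# test variant are assumptions, not taken from the WISE framework.
import math
import random
from typing import Dict, List, Sequence, Tuple


def stratified_kfold(labels: Sequence[int], k: int = 5, seed: int = 0) -> List[List[int]]:
    """Split indices into k test folds that each preserve the class balance."""
    rng = random.Random(seed)
    by_class: Dict[int, List[int]] = {}
    for i, y in enumerate(labels):
        by_class.setdefault(y, []).append(i)
    folds: List[List[int]] = [[] for _ in range(k)]
    for idxs in by_class.values():
        rng.shuffle(idxs)
        # Deal each class's shuffled indices round-robin across the folds.
        for j, i in enumerate(idxs):
            folds[j % k].append(i)
    return folds


def brier_score(y_true: Sequence[int], p_pos: Sequence[float]) -> float:
    """Mean squared gap between the predicted P(label = 1) and the 0/1 label."""
    return sum((p - y) ** 2 for y, p in zip(y_true, p_pos)) / len(y_true)


def expected_calibration_error(
    y_true: Sequence[int], p_pos: Sequence[float], n_bins: int = 10
) -> float:
    """ECE over equal-width bins of the predicted class's confidence."""
    bins: List[List[Tuple[float, bool]]] = [[] for _ in range(n_bins)]
    for y, p in zip(y_true, p_pos):
        pred = 1 if p >= 0.5 else 0  # assumed 0.5 decision threshold
        conf = p if pred == 1 else 1.0 - p  # confidence of the predicted class
        idx = min(int(conf * n_bins), n_bins - 1)
        bins[idx].append((conf, pred == y))
    n = len(y_true)
    ece = 0.0
    for b in bins:
        if b:
            avg_conf = sum(c for c, _ in b) / len(b)
            accuracy = sum(ok for _, ok in b) / len(b)
            # Weight each bin's |accuracy - confidence| gap by its share of samples.
            ece += len(b) / n * abs(accuracy - avg_conf)
    return ece


def mcc(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Matthews correlation coefficient from the binary confusion matrix."""
    pairs = list(zip(y_true, y_pred))
    tp = pairs.count((1, 1))
    tn = pairs.count((0, 0))
    fp = pairs.count((0, 1))
    fn = pairs.count((1, 0))
    denom = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    return 0.0 if denom == 0 else (tp * tn - fp * fn) / denom


def mcnemar(
    y_true: Sequence[int], pred_a: Sequence[int], pred_b: Sequence[int]
) -> Tuple[float, float]:
    """Continuity-corrected McNemar chi-square test for two models on the same samples."""
    # Only discordant pairs matter: A right and B wrong, or A wrong and B right.
    n_a_only = sum(1 for y, a, b in zip(y_true, pred_a, pred_b) if a == y and b != y)
    n_b_only = sum(1 for y, a, b in zip(y_true, pred_a, pred_b) if a != y and b == y)
    if n_a_only + n_b_only == 0:
        return 0.0, 1.0
    stat = max(abs(n_a_only - n_b_only) - 1, 0) ** 2 / (n_a_only + n_b_only)
    # Chi-square (1 df) survival function: P(X > s) = erfc(sqrt(s / 2)).
    return stat, math.erfc(math.sqrt(stat / 2))


if __name__ == "__main__":
    y = [1, 0, 1, 1, 0, 0, 1, 0]
    p = [0.95, 0.15, 0.65, 0.35, 0.05, 0.75, 0.85, 0.25]
    pred = [1 if q >= 0.5 else 0 for q in p]
    print(brier_score(y, p), expected_calibration_error(y, p), mcc(y, pred))
```

The paired t-tests mentioned above compare the per-fold scores of two models rather than per-sample predictions; with SciPy, one way to do this would be `scipy.stats.ttest_rel` applied to the two models' five fold-level accuracies.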
