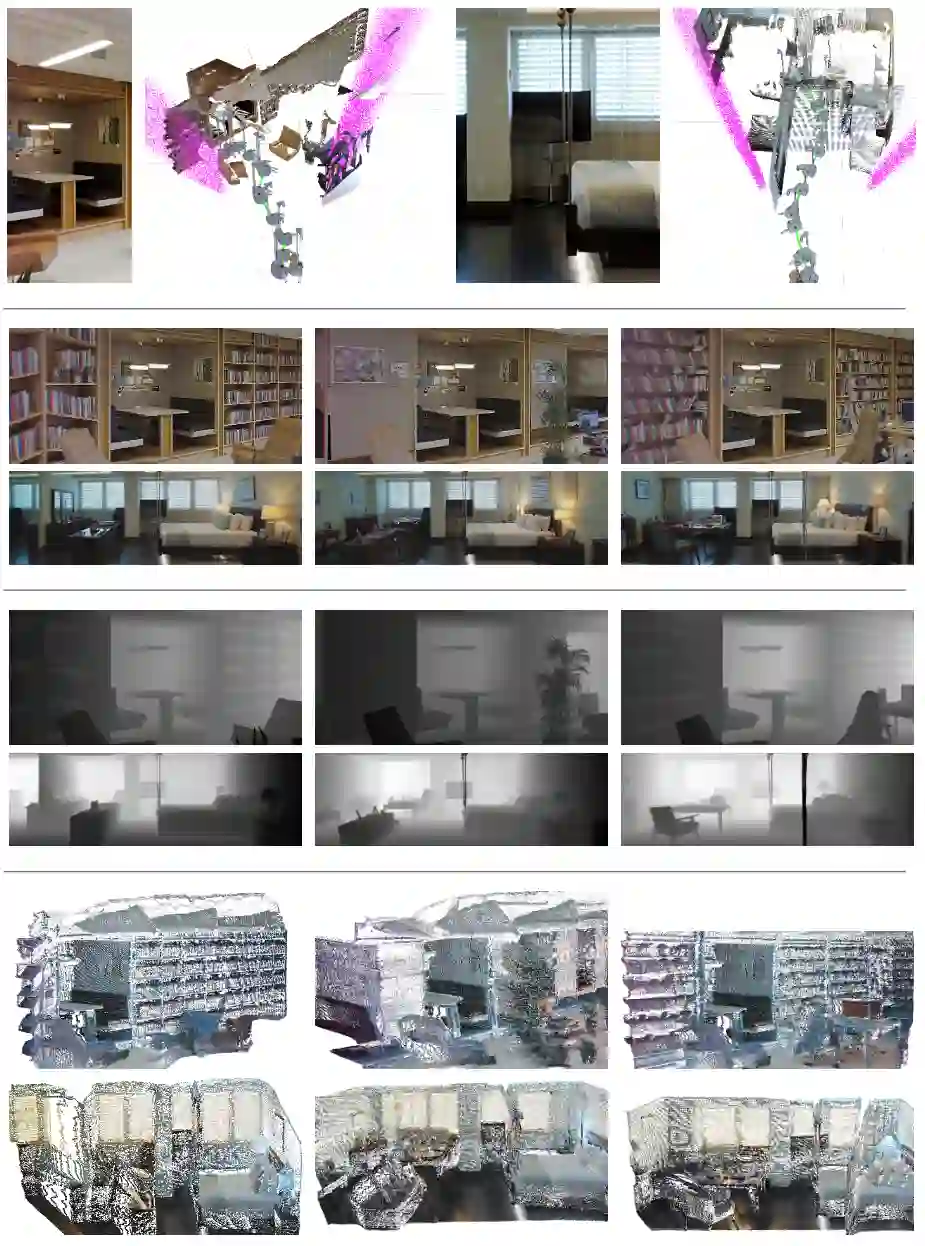

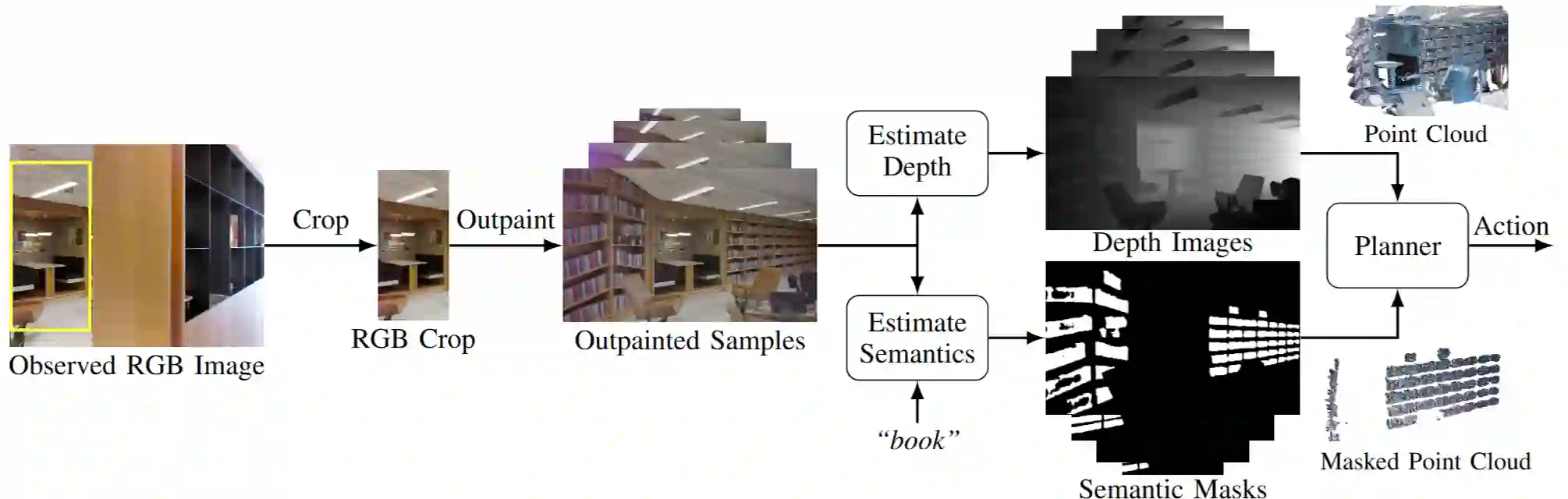

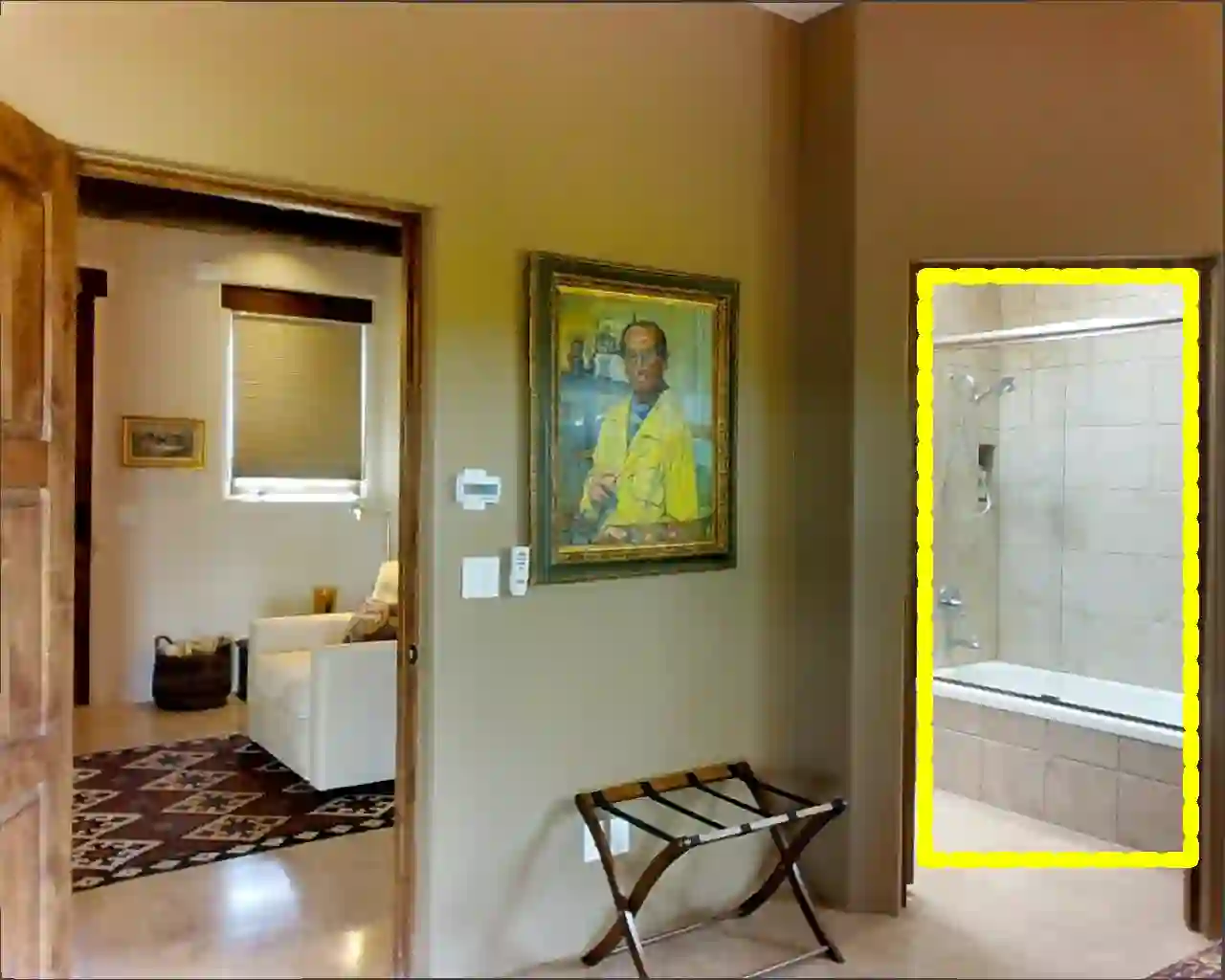

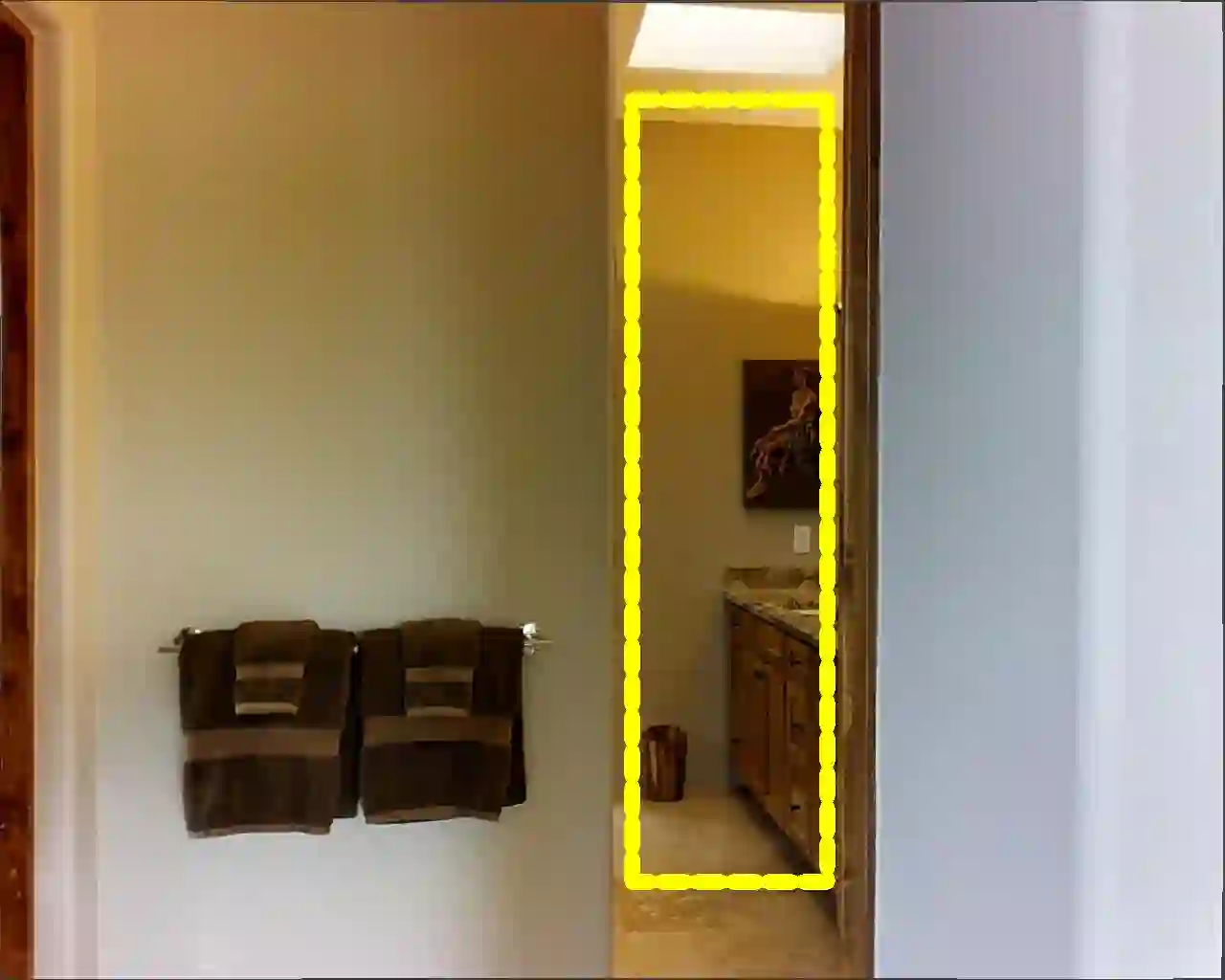

Priors are vital for planning under partial observability, yet difficult to obtain in practice. We present a sampling-based pipeline that leverages large-scale pretrained generative models to produce probabilistic priors capturing environmental uncertainty and spatio-semantic relationships in a zero-shot manner. Conditioned on partial observations, the pipeline recovers complete RGB-D point cloud samples with occupancy and target semantics, formulated to be directly useful in configuration-space planning. We establish a Matterport3D benchmark of rooms partially visible through doorways, where a robot must navigate to an unobserved target object. Effective priors for this setting must represent both occupancy and target-location uncertainty in unobserved regions. Experiments show that our approach recovers commonsense spatial semantics consistent with ground truth, yielding diverse, clean 3D point clouds usable in motion planning and highlighting the promise of generative models as a rich source of priors for robotic planning.
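To make the idea of a sample-based prior concrete, the following is a minimal sketch of how a set of completed point cloud samples could be reduced to Monte Carlo estimates of occupancy and target-location probability on a 2D floor grid. It is an illustration under stated assumptions, not the pipeline described above: the function names `occupancy_prior` and `target_prior`, the 2D grid projection, the per-point boolean target masks, and the use of a target centroid are all hypothetical choices made for this example.

```python
import numpy as np

def _to_cells(xy, grid_min, cell_size):
    """Map metric (x, y) coordinates to integer grid indices."""
    return np.floor((xy - grid_min) / cell_size).astype(int)

def occupancy_prior(samples, grid_min, cell_size, grid_shape):
    """Fraction of samples in which each floor cell contains at least one point.

    samples: list of (N_i, 3) arrays, one completed point cloud per sample
             (assumed input format for this sketch).
    """
    counts = np.zeros(grid_shape, dtype=float)
    shape = np.array(grid_shape)
    for pts in samples:
        idx = _to_cells(pts[:, :2], grid_min, cell_size)
        # Discard points that fall outside the grid bounds.
        inside = np.all((idx >= 0) & (idx < shape), axis=1)
        idx = idx[inside]
        occ = np.zeros(grid_shape, dtype=bool)
        occ[idx[:, 0], idx[:, 1]] = True
        counts += occ
    return counts / len(samples)

def target_prior(samples, target_masks, grid_min, cell_size, grid_shape):
    """Normalized histogram of target centroids over the samples.

    target_masks: list of (N_i,) boolean arrays marking target points
                  (assumed labeling for this sketch).
    """
    hist = np.zeros(grid_shape, dtype=float)
    for pts, mask in zip(samples, target_masks):
        if not mask.any():
            continue  # this sample contains no target points
        centroid = pts[mask, :2].mean(axis=0)
        i, j = _to_cells(centroid, grid_min, cell_size)
        if 0 <= i < grid_shape[0] and 0 <= j < grid_shape[1]:
            hist[i, j] += 1.0
    total = hist.sum()
    return hist / total if total > 0 else hist
```

A planner could then treat the occupancy grid as a per-cell collision probability and the target grid as a distribution over goal locations. Drawing more samples tightens both estimates, at the cost of more generative-model calls.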
