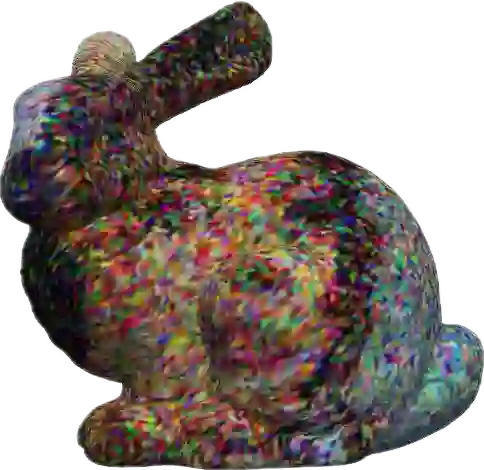

This paper addresses the problem of generating textures for 3D mesh assets. Existing approaches often rely on image diffusion models to generate multi-view image observations, which are then projected onto the mesh surface to produce a single texture. However, because of the gap between multi-view images and 3D space, this process is susceptible to a range of issues, including geometric inconsistencies, visibility occlusion, and baking artifacts. To overcome these problems, we propose a novel approach that generates textures directly on 3D meshes. Our approach leverages heat dissipation diffusion, which serves as an efficient operator that propagates features over the geometric surface of a mesh while remaining insensitive to the specific layout of the wireframe. By integrating this technique into a generative diffusion pipeline, we significantly improve the efficiency of texture generation compared to existing texture generation methods. We term our approach DoubleDiffusion, as it combines heat dissipation diffusion with denoising diffusion to enable native generative learning on 3D mesh surfaces.
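To make the idea of heat dissipation on a mesh concrete, the following is a minimal sketch and not the authors' implementation. It assumes a uniform graph Laplacian built from the triangle connectivity (a stand-in for a geometry-aware Laplace-Beltrami operator) and a single implicit Euler step; the function names `graph_laplacian` and `heat_diffuse` and the time value `t` are hypothetical choices for illustration.

```python
import numpy as np

def graph_laplacian(num_vertices, faces):
    """Uniform graph Laplacian L = D - A from triangle faces.

    Assumption: edge weights are all 1; a cotangent-weighted
    Laplace-Beltrami operator would respect surface geometry better.
    """
    A = np.zeros((num_vertices, num_vertices))
    for i, j, k in faces:
        for a, b in ((i, j), (j, k), (k, i)):
            A[a, b] = A[b, a] = 1.0
    return np.diag(A.sum(axis=1)) - A

def heat_diffuse(features, L, t):
    """One implicit Euler step of du/dt = -L u: solve (I + t L) u_t = u_0."""
    n = L.shape[0]
    return np.linalg.solve(np.eye(n) + t * L, features)

# Toy mesh: a tetrahedron (4 vertices, 4 triangular faces).
faces = np.array([[0, 1, 2], [0, 1, 3], [0, 2, 3], [1, 2, 3]])
L = graph_laplacian(4, faces)

# One feature channel per vertex; all "heat" starts at vertex 0.
u0 = np.array([[1.0], [0.0], [0.0], [0.0]])
u = heat_diffuse(u0, L, t=0.5)
```

In this sketch the feature at vertex 0 spreads to its neighbours while the total over all vertices is preserved. Larger `t` spreads features further, which is the sense in which heat diffusion acts as a feature-propagation operator on the surface.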
