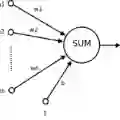

Physics-Informed Neural Networks (PINNs) are an emerging tool for approximating the solution of Partial Differential Equations (PDEs) in both forward and inverse problems. PINNs minimize a loss function that includes the PDE residual evaluated at a set of collocation points. Previous work has shown that the number and distribution of these collocation points have a significant influence on the accuracy of the PINN solution, so their effective placement is an active area of research. In particular, several adaptive collocation point sampling methods have been proposed, but these have been reported to scale poorly to higher dimensions. In this work, we address this issue and present the Point Adaptive Collocation Method for Artificial Neural Networks (PACMANN). Inspired by classic optimization problems, this approach incrementally moves collocation points toward regions of higher residuals using gradient-based optimization algorithms guided by the gradient of the squared residual. We apply PACMANN to forward and inverse problems and demonstrate that it matches the performance of state-of-the-art methods in terms of the accuracy/efficiency tradeoff for low-dimensional problems, while outperforming available approaches for high-dimensional problems; the best performance is observed with the Adam optimizer. Key features of the method include its low computational cost and simplicity of integration into existing physics-informed neural network pipelines.
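
The point-moving idea can be illustrated with a minimal sketch. It is not the paper's implementation, and it makes several assumptions. The function `residual` is a hypothetical one-dimensional stand-in for a PDE residual, where a real PINN would evaluate the PDE operator on the network output. The gradient of the squared residual is approximated by finite differences rather than automatic differentiation. The update is plain gradient ascent, x ← x + s · d(r²)/dx, not Adam, which the abstract reports as performing best. The names `step_size`, `num_steps`, `lo`, and `hi` are illustrative.

```python
import math
import random

def residual(x):
    # Hypothetical stand-in for a PDE residual r(x), peaked near x = 0.7.
    # In a real PINN this would come from the network and the PDE operator.
    return math.exp(-50.0 * (x - 0.7) ** 2)

def squared_residual_grad(x, h=1e-5):
    # Central finite-difference approximation of d/dx [r(x)^2].
    # A PINN framework would typically use automatic differentiation here.
    return (residual(x + h) ** 2 - residual(x - h) ** 2) / (2.0 * h)

def move_points(points, step_size=1e-2, num_steps=5, lo=0.0, hi=1.0):
    # Plain gradient ascent on r(x)^2 (an assumed, simplest-possible optimizer),
    # with each point clipped back into the domain [lo, hi] after every step.
    moved = list(points)
    for _ in range(num_steps):
        moved = [
            min(hi, max(lo, x + step_size * squared_residual_grad(x)))
            for x in moved
        ]
    return moved

def mean_squared_residual(points):
    # Average of r(x)^2 over the point set.
    return sum(residual(x) ** 2 for x in points) / len(points)

# Start from uniformly sampled collocation points and move them a few steps.
rng = random.Random(0)
points = [rng.random() for _ in range(200)]
moved = move_points(points)
```

In this toy setting, points near the residual peak drift toward it, so the mean squared residual over `moved` is larger than over `points`. In a training loop, such a move step would presumably be interleaved with ordinary loss minimization on the updated points.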
