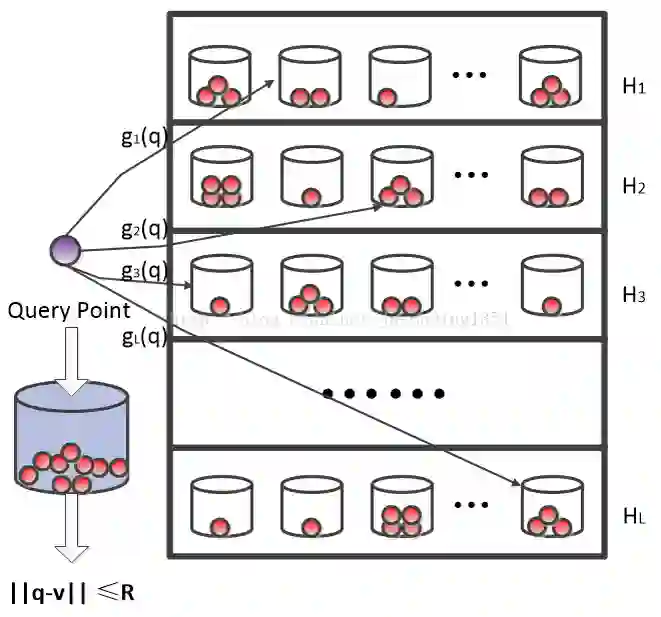

This work proposes faster and more space-efficient index construction algorithms for LSH for Euclidean distance (\textit{a.k.a.}~\ELSH) and cosine similarity (\textit{a.k.a.}~\SRP). The index construction step of these LSHs groups data points into the bins of several hash tables according to their hashcodes. To generate an $m$-dimensional hashcode of a $d$-dimensional data point, these LSHs compute, for each of the $m$ hashcode entries, the inner product of the data point with a $d$-dimensional random Gaussian vector and then discretise the result. The time and space complexity of both \ELSH~and \SRP~for computing an $m$-sized hashcode of a $d$-dimensional vector is therefore $O(md)$, which becomes impractical for large values of $m$ and $d$. To overcome this problem, we propose two alternative LSH hashcode generation algorithms for each measure: \CSELSH~and \HCSELSH~for Euclidean distance, and \CSSRP~and \HCSSRP~for cosine similarity. \CSELSH~and \CSSRP~are based on count sketch \cite{count_sketch}, while \HCSELSH~and \HCSSRP~utilize higher-order count sketch \cite{shi2019higher}. These proposals reduce the hashcode computation time from $O(md)$ to $O(d)$. Additionally, \CSELSH~and \CSSRP~reduce the space complexity from $O(md)$ to $O(d)$, while \HCSELSH~and \HCSSRP~reduce it from $O(md)$ to $O(N \sqrt[N]{d})$, where $N\geq 1$ denotes the order (number of modes) of the input/reshaped tensor. Our proposals are backed by mathematical guarantees, and we validate their performance through simulations on various real-world datasets.
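To make the complexity gap concrete, the following is a minimal Python sketch, not the authors' algorithm. The dense functions follow the standard textbook forms of the two baselines: sign of a Gaussian inner product for SRP, and $\lfloor (\langle r, x\rangle + b)/w \rfloor$ for E2LSH. The bucket width `w` and offset `b` come from that standard formulation and are not stated in the abstract. The `sketch_project` function is an assumption about how a count-sketch-style projection could reach $O(d)$ time and space: each coordinate is sent to one of the $m$ buckets and weighted by a single Gaussian value. All function and variable names are hypothetical.

```python
import math
import random


def make_dense_params(d, m, w, rng):
    """Baseline parameters: m Gaussian vectors of length d, i.e. O(m*d) storage."""
    R = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]
    b = [rng.uniform(0.0, w) for _ in range(m)]  # E2LSH offsets in [0, w)
    return R, b


def make_sketch_params(d, m, w, rng):
    """Assumed count-sketch-style parameters: O(d) storage plus m offsets."""
    bucket = [rng.randrange(m) for _ in range(d)]  # bucket index per coordinate
    g = [rng.gauss(0.0, 1.0) for _ in range(d)]    # one Gaussian weight per coordinate
    b = [rng.uniform(0.0, w) for _ in range(m)]
    return bucket, g, b


def dense_project(x, R):
    """m inner products against dense Gaussian vectors: O(m*d) time."""
    return [sum(ri * xi for ri, xi in zip(row, x)) for row in R]


def sketch_project(x, bucket, g, m):
    """Each coordinate contributes to exactly one bucket: O(d) time."""
    y = [0.0] * m
    for xi, j, gi in zip(x, bucket, g):
        y[j] += gi * xi
    return y


def srp_code(y):
    """Discretise for cosine similarity: keep only the sign of each projection."""
    return [1 if v >= 0 else 0 for v in y]


def e2lsh_code(y, b, w):
    """Discretise for Euclidean distance: shift, scale by the width, and floor."""
    return [math.floor((v + bj) / w) for v, bj in zip(y, b)]


if __name__ == "__main__":
    rng = random.Random(0)
    x = [0.5, -1.0, 2.0, 0.0, 3.5, -0.25, 1.0, -2.0]
    d, m, w = len(x), 4, 4.0
    R, b_dense = make_dense_params(d, m, w, rng)
    bucket, g, b_sketch = make_sketch_params(d, m, w, rng)
    print("dense SRP   :", srp_code(dense_project(x, R)))
    print("sketch SRP  :", srp_code(sketch_project(x, bucket, g, m)))
    print("sketch E2LSH:", e2lsh_code(sketch_project(x, bucket, g, m), b_sketch, w))
```

The same parameters must be reused for every data point so that hashcodes are comparable across points. The dense variant stores $md$ Gaussian values, whereas the sketch variant stores only $2d$ values (bucket indices and weights) plus the $m$ offsets.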
