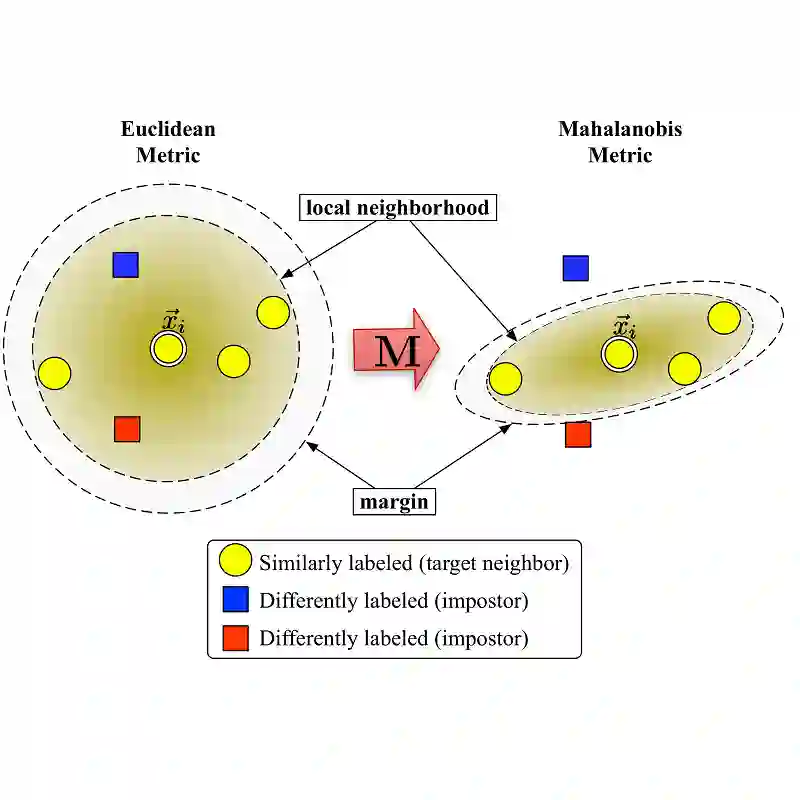

The Forward-Forward (FF) algorithm was recently proposed as a local learning method that addresses limitations of backpropagation (BP), offering biological plausibility together with memory-efficient, highly parallel computation. However, it suffers from suboptimal performance and poor generalization, largely because it lacks theoretical support and effective learning strategies. In this work, we reformulate FF using distance metric learning and propose a distance-forward (DF) algorithm that improves FF performance on supervised vision tasks while preserving its local computational properties, making it competitive for efficient on-chip learning. Specifically, we reinterpret FF through the lens of centroid-based metric learning and develop a goodness-based N-pair margin loss to facilitate the learning of discriminative features. Furthermore, we integrate layer-collaboration local update strategies to reduce the information loss caused by greedy local parameter updates. Our method surpasses existing FF models and other advanced local learning approaches, reaching accuracies of 99.7\% on MNIST, 88.2\% on CIFAR-10, 59\% on CIFAR-100, 95.9\% on SVHN, and 82.5\% on ImageNette. Moreover, it achieves performance comparable to BP training at less than 40\% of the memory cost while exhibiting stronger robustness to multiple types of hardware-related noise, demonstrating its potential for online learning and energy-efficient computation on neuromorphic chips.
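
To make the idea of a goodness-based N-pair margin loss more concrete, the following is a minimal illustrative sketch, not the formulation used in this work, which the abstract does not spell out. It assumes the common FF definition of goodness as the sum of squared activations of a layer, and it assumes a hinge-style loss in which the goodness obtained with the correct label should exceed the goodness obtained with every other candidate label by a margin. The function names `goodness` and `n_pair_margin_loss`, the `margin` parameter, and the array shapes are all hypothetical choices made for this illustration.

```python
import numpy as np


def goodness(activations):
    """FF-style goodness: sum of squared activations over the last axis.

    Assumption: this is the usual FF definition, not necessarily the exact
    one used by the DF algorithm.
    """
    return np.sum(activations ** 2, axis=-1)


def n_pair_margin_loss(goodness_per_label, true_labels, margin=1.0):
    """Hinge-style N-pair margin loss on goodness scores (hypothetical form).

    goodness_per_label: array of shape (batch, num_classes); entry [i, c] is
        the goodness of one layer when sample i is paired with label c.
    true_labels: integer array of shape (batch,) with the correct labels.
    margin: how much the positive goodness should exceed each negative one.
    """
    batch = goodness_per_label.shape[0]
    rows = np.arange(batch)

    # Goodness of the positive (correct-label) pairing, shape (batch, 1).
    positive = goodness_per_label[rows, true_labels][:, None]

    # Penalize every label whose goodness comes within `margin` of the
    # positive goodness.
    violations = np.maximum(0.0, margin - (positive - goodness_per_label))

    # Exclude the positive label itself; it would always contribute `margin`.
    negatives = np.ones_like(violations, dtype=bool)
    negatives[rows, true_labels] = False

    return violations[negatives].mean()
```

Because such a loss depends only on the activations of a single layer, it can in principle be evaluated and minimized locally, layer by layer, which is the property that distinguishes FF-style training from BP.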
