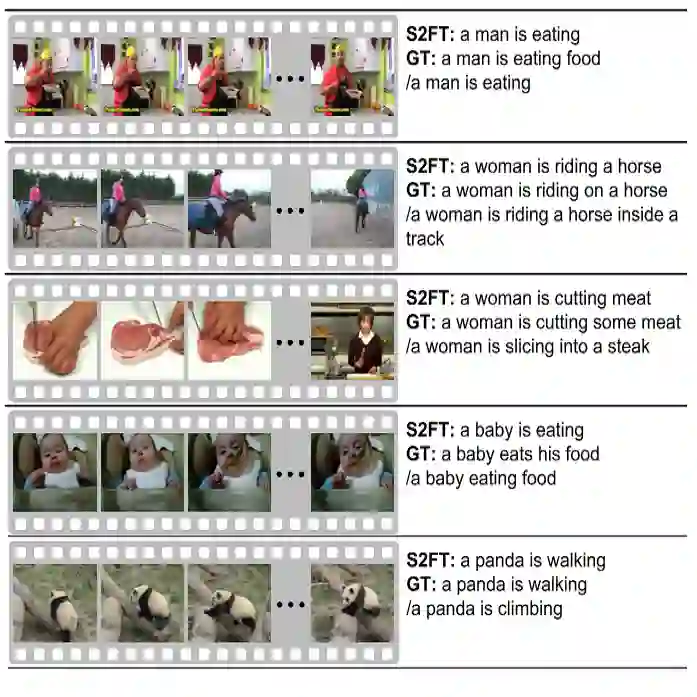

Video captioning generates a sentence that describes the video content. Existing methods typically require many captions (e.g., 10 or 20) per video to train the model, which is quite costly. In this work, we explore the possibility of using only one or very few ground-truth sentences, and introduce a new task named few-supervised video captioning. Specifically, we propose a few-supervised video captioning framework that consists of a lexically constrained pseudo-labeling module and a keyword-refined captioning module. Unlike the random sampling used in natural language processing, which may cause invalid modifications (i.e., word edits), the former module guides the model to edit words through a set of actions (e.g., copy, replace, insert, and delete) chosen by a pretrained token-level classifier, and then fine-tunes the candidate sentences with a pretrained language model. In addition, this module employs repetition-penalized sampling to encourage the model to yield concise pseudo-labeled sentences with less repetition, and selects the most relevant sentences using a pretrained video-text model. Moreover, to keep the pseudo-labeled sentences semantically consistent with the video content, we develop a transformer-based keyword refiner with a video-keyword gated fusion strategy that places more emphasis on relevant words. Extensive experiments on several benchmarks demonstrate the advantages of the proposed approach in both few-supervised and fully-supervised scenarios. The code implementation is available at https://github.com/mlvccn/PKG_VidCap
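To make the idea of repetition-penalized sampling concrete, the following is a minimal sketch and not the implementation from the paper. It assumes the commonly used penalty form in which the logit of every already-generated token is lowered by a factor `penalty` before sampling. The function names, the toy logits, and the penalty value are all hypothetical choices for illustration.

```python
import math
import random


def apply_repetition_penalty(logits, generated_ids, penalty=1.2):
    """Return a copy of `logits` with already-generated tokens made less likely."""
    penalized = list(logits)
    for token_id in set(generated_ids):
        score = penalized[token_id]
        # Dividing a positive logit or multiplying a negative one both lower it.
        penalized[token_id] = score / penalty if score > 0 else score * penalty
    return penalized


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next_token(logits, generated_ids, penalty=1.2, rng=None):
    """Sample one token id from the penalized distribution."""
    rng = rng or random.Random()
    probs = softmax(apply_repetition_penalty(logits, generated_ids, penalty))
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


# Toy vocabulary of four tokens (assumed values); token 0 was already generated.
logits = [2.0, 1.0, -1.0, 0.5]
before = softmax(logits)
after = softmax(apply_repetition_penalty(logits, generated_ids=[0], penalty=2.0))
print(before[0] > after[0])  # True: the repeated token is now less likely
```

With `penalty=1.0` the distribution is unchanged, and larger values discourage repeated words more strongly, which matches the stated goal of concise pseudo-labeled sentences with less repetition.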
