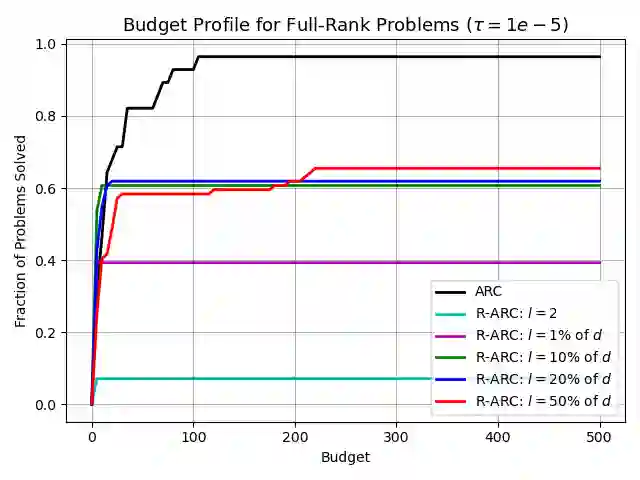

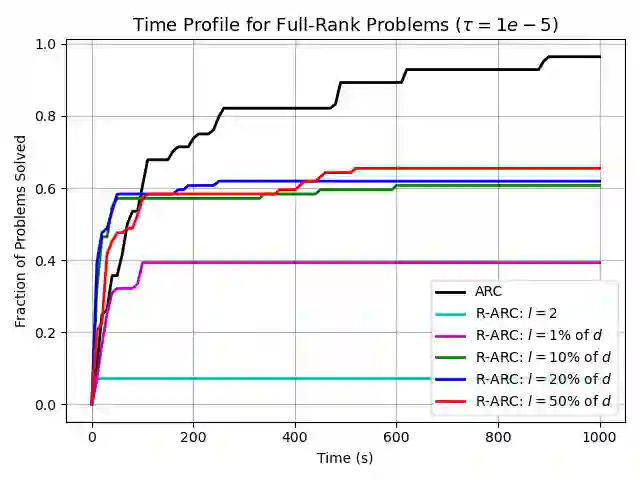

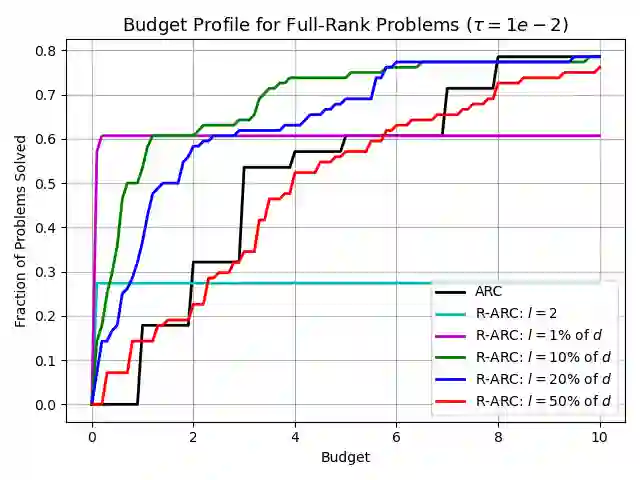

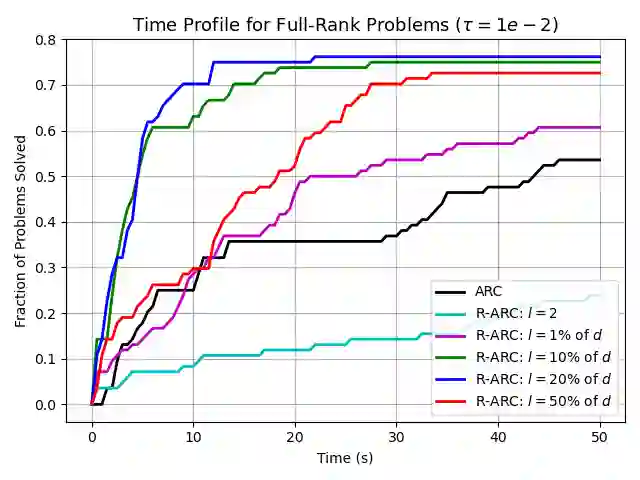

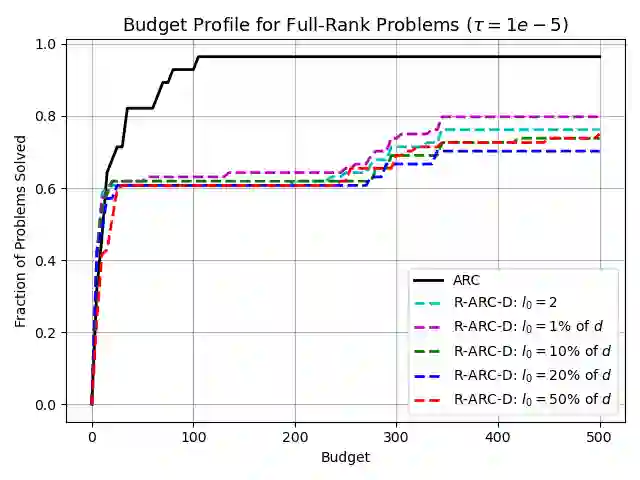

We propose and analyze random subspace variants of the second-order Adaptive Regularization using Cubics (ARC) algorithm. At each iteration, these methods restrict the search to a random subspace of the parameters, and construct and minimize a local model only within that subspace. Our variants therefore require only low-dimensional projections of the first- and second-order derivatives of the problem, and compute a reduced step inexpensively. Under suitable assumptions, the resulting methods retain the optimal first-order and second-order global rates of convergence of full-dimensional cubic regularization, while showing improved scalability both theoretically and numerically, particularly on low-rank functions. For such functions, our adaptive variant adapts the subspace size to the true rank of the function without knowing it a priori.
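To make the iteration concrete, here is a minimal NumPy sketch of one possible random subspace cubic regularization loop. It is an illustration under stated assumptions, not the algorithm as specified in the paper. The scaled Gaussian sketching matrix `S`, the acceptance threshold `0.1`, the halving and doubling of `sigma`, the use of the reduced-step norm in the cubic term, and the names `cubic_subproblem` and `random_subspace_arc` are all assumptions made for this example. For brevity the sketch forms the full gradient and Hessian and then projects them, whereas a practical implementation would compute only the projected quantities. The subproblem solver ignores the so-called hard case.

```python
import numpy as np


def cubic_subproblem(g, H, sigma):
    """Approximately minimize g@s + 0.5*s@H@s + (sigma/3)*||s||^3.

    Uses the optimality condition (H + lam*I) s = -g with lam = sigma*||s||
    and bisects on lam. The hard case is ignored (assumption).
    """
    evals, evecs = np.linalg.eigh(H)        # eigenvalues in ascending order
    g_rot = evecs.T @ g                     # gradient in the eigenbasis

    def norm_s(lam):
        return np.linalg.norm(g_rot / (evals + lam))

    lo = max(0.0, -evals[0])
    lo += 1e-8 * (1.0 + lo)                 # keep H + lam*I positive definite
    hi = lo + 1.0
    while norm_s(hi) > hi / sigma:          # grow hi until it brackets the root
        hi *= 2.0
    for _ in range(200):                    # bisection on ||s(lam)|| = lam/sigma
        mid = 0.5 * (lo + hi)
        if norm_s(mid) > mid / sigma:
            lo = mid
        else:
            hi = mid
    return -evecs @ (g_rot / (evals + hi))


def random_subspace_arc(f, grad, hess, x0, subspace_dim,
                        sigma=1.0, iters=200, seed=0):
    """Illustrative random subspace ARC loop (parameter choices are assumptions)."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x0, dtype=float)
    d = x.size
    for _ in range(iters):
        # Draw a fresh scaled Gaussian sketch; its rows span the random subspace.
        S = rng.standard_normal((subspace_dim, d)) / np.sqrt(subspace_dim)
        g_hat = S @ grad(x)                 # projected gradient
        H_hat = S @ hess(x) @ S.T           # projected Hessian
        s_hat = cubic_subproblem(g_hat, H_hat, sigma)
        step = S.T @ s_hat                  # lift the reduced step to full space
        model_decrease = -(g_hat @ s_hat + 0.5 * s_hat @ H_hat @ s_hat
                           + sigma / 3.0 * np.linalg.norm(s_hat) ** 3)
        rho = (f(x) - f(x + step)) / max(model_decrease, 1e-16)
        if rho >= 0.1:                      # successful: accept, relax regularization
            x = x + step
            sigma = max(0.5 * sigma, 1e-8)
        else:                               # unsuccessful: reject, regularize more
            sigma *= 2.0
    return x
```

As a usage example, one could take a rank-3 least-squares objective in 50 dimensions, `f(x) = 0.5 * ||A x - b||^2` with `A` of shape `(3, 50)`, and call `random_subspace_arc(f, grad, hess, np.zeros(50), subspace_dim=5)`. Here the subspace dimension exceeds the rank of the function, which is the low-rank setting the abstract highlights. This fixed-size sketch does not implement the adaptive choice of subspace size.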
