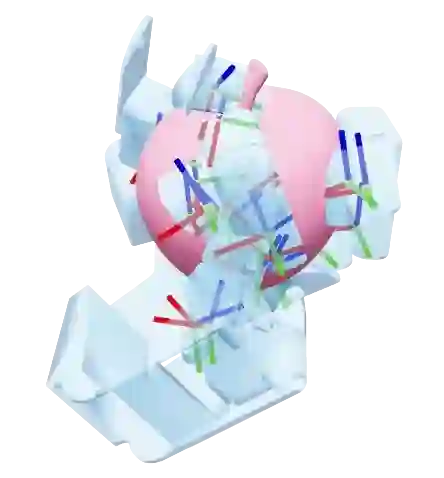

Dexterous grasping remains a central challenge in robotics due to the complexity of its high-dimensional state and action spaces. We introduce T(R,O) Grasp, a diffusion-based framework that efficiently generates accurate and diverse grasps across multiple robotic hands. At its core is the T(R,O) Graph, a unified representation that models spatial transformations between robotic hands and objects while encoding their geometric properties. A graph diffusion model, coupled with an efficient inverse kinematics solver, supports both unconditioned and conditioned grasp synthesis. Extensive experiments on a diverse set of dexterous hands show that T(R,O) Grasp achieves an average success rate of 94.83%, an inference time of 0.21 s, and a throughput of 41 grasps per second on an NVIDIA A100 40GB GPU, substantially outperforming existing baselines. In addition, our approach is robust and generalizes across embodiments while significantly reducing memory consumption. More importantly, the high inference speed enables closed-loop dexterous manipulation, underscoring the potential of T(R,O) Grasp to scale into a foundation model for dexterous grasping.
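
To make the idea of a graph of spatial transformations concrete, the following is a minimal sketch and not the authors' implementation. It assumes, purely for illustration, that nodes are rigid bodies (the object and two hand links) with known world poses, and that each directed edge stores the pose of one node expressed in the other's frame. The node names and the helpers `rot_z`, `matmul`, `inverse`, and `build_graph` are hypothetical; the abstract does not specify how the T(R,O) Graph is actually constructed or what geometric features it encodes.

```python
import math

def rot_z(theta, t):
    """4x4 homogeneous transform: rotation about z by theta, plus translation t."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0.0, t[0]],
            [s,  c, 0.0, t[1]],
            [0.0, 0.0, 1.0, t[2]],
            [0.0, 0.0, 0.0, 1.0]]

def matmul(a, b):
    """Product of two 4x4 matrices stored as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]

def inverse(T):
    """Inverse of a rigid transform: [R | t]^-1 = [R^T | -R^T t]."""
    Rt = [[T[j][i] for j in range(3)] for i in range(3)]
    t = [-sum(Rt[i][k] * T[k][3] for k in range(3)) for i in range(3)]
    return [Rt[i] + [t[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]

def build_graph(world_poses):
    """Assumed edge definition: edge (i, j) holds the pose of node j in node i's frame."""
    return {(i, j): matmul(inverse(Ti), Tj)
            for i, Ti in world_poses.items()
            for j, Tj in world_poses.items() if i != j}

# Hypothetical world poses for an object and two hand links.
poses = {
    "object": rot_z(0.0, (0.5, 0.0, 0.0)),
    "palm": rot_z(math.pi / 2, (0.5, -0.1, 0.0)),
    "fingertip": rot_z(math.pi / 2, (0.5, -0.02, 0.0)),
}
graph = build_graph(poses)
```

Under this assumed definition the edges are mutually consistent: composing the palm-to-fingertip edge with the fingertip-to-object edge reproduces the palm-to-object edge. A representation built only from relative transforms also does not depend on the choice of world frame.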
