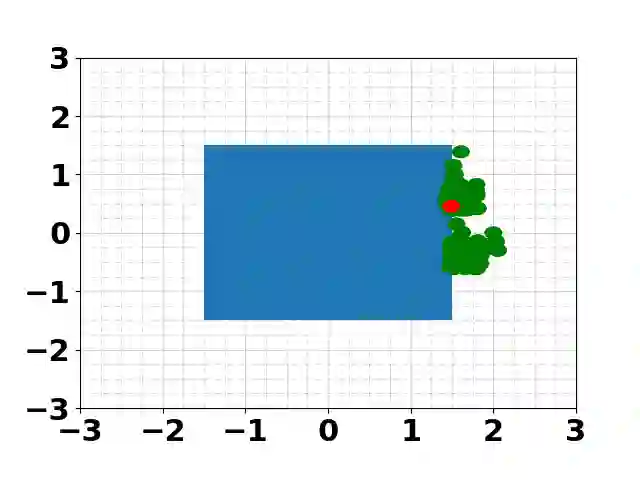

Many modern robotic systems, such as multi-robot systems and manipulators, exhibit redundancy, a property that allows them to execute multiple tasks. This work proposes a novel method, based on the Reinforcement Learning (RL) paradigm, for training redundant robots to execute multiple tasks concurrently. Our approach differs from typical multi-objective RL methods in that the learned tasks can be combined and executed in prioritized stacks, which may vary over time. To achieve this, we first define a notion of task independence between learned value functions. We then use this definition to propose a cost functional that encourages a policy, based on an approximated value function, to accomplish its control objective while minimally interfering with the execution of higher-priority tasks. This allows us to train a set of control policies that can be executed simultaneously. We also introduce a variant of fitted value iteration that efficiently learns to approximate the proposed cost functional. We demonstrate our approach on several scenarios and robotic systems.
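The abstract does not spell out the authors' variant of fitted value iteration, nor the task-independence terms in their cost functional. The sketch below is therefore only the generic, textbook fitted value iteration that such a method builds on, not the paper's algorithm. It assumes a hypothetical deterministic 1-D single integrator, a quadratic stage cost to be minimized, a discrete grid of candidate actions, and quadratic features `[1, x, x^2]`. The names `features` and `fitted_value_iteration` are illustrative. Each iteration applies a Bellman backup at the sampled states using the current approximation, then projects the backed-up values onto the feature space by least squares.

```python
import numpy as np


def features(x):
    # Hypothetical quadratic features [1, x, x^2] for a scalar state; works on arrays of any shape.
    return np.stack([np.ones_like(x), x, x**2], axis=-1)


def fitted_value_iteration(states, actions, step, cost, gamma=0.95, iters=200):
    """Generic fitted value iteration for a deterministic, cost-minimizing problem.

    states: (N,) sampled states; actions: (M,) discrete candidate actions.
    step(x, u) -> next state and cost(x, u) -> stage cost, both vectorized.
    Returns weights w such that V(x) is approximated by features(x) @ w.
    """
    phi = features(states)                                     # (N, 3) design matrix
    w = np.zeros(phi.shape[1])                                 # start from V = 0
    X, U = np.meshgrid(states, actions, indexing="ij")         # (N, M) state/action pairs
    X_next, C = step(X, U), cost(X, U)                         # precomputed transitions and costs
    for _ in range(iters):
        # Bellman backup: best one-step cost plus discounted approximate cost-to-go.
        targets = (C + gamma * (features(X_next) @ w)).min(axis=1)
        # Project the backed-up values onto the linear function class (least squares).
        w, *_ = np.linalg.lstsq(phi, targets, rcond=None)
    return w


# Hypothetical example: x' = x + dt * u with stage cost dt * (x^2 + 0.1 u^2).
dt = 0.1
w = fitted_value_iteration(
    states=np.linspace(-2.0, 2.0, 41),
    actions=np.linspace(-3.0, 3.0, 61),
    step=lambda x, u: x + dt * u,
    cost=lambda x, u: dt * (x**2 + 0.1 * u**2),
)
print("fitted weights for [1, x, x^2]:", w)
```

Because the example problem is symmetric in `x`, the fitted linear coefficient should come out near zero and the value should grow with distance from the origin. In a prioritized setting such as the one described above, the stage cost would additionally penalize interference with higher-priority tasks. That term is not modeled here.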
