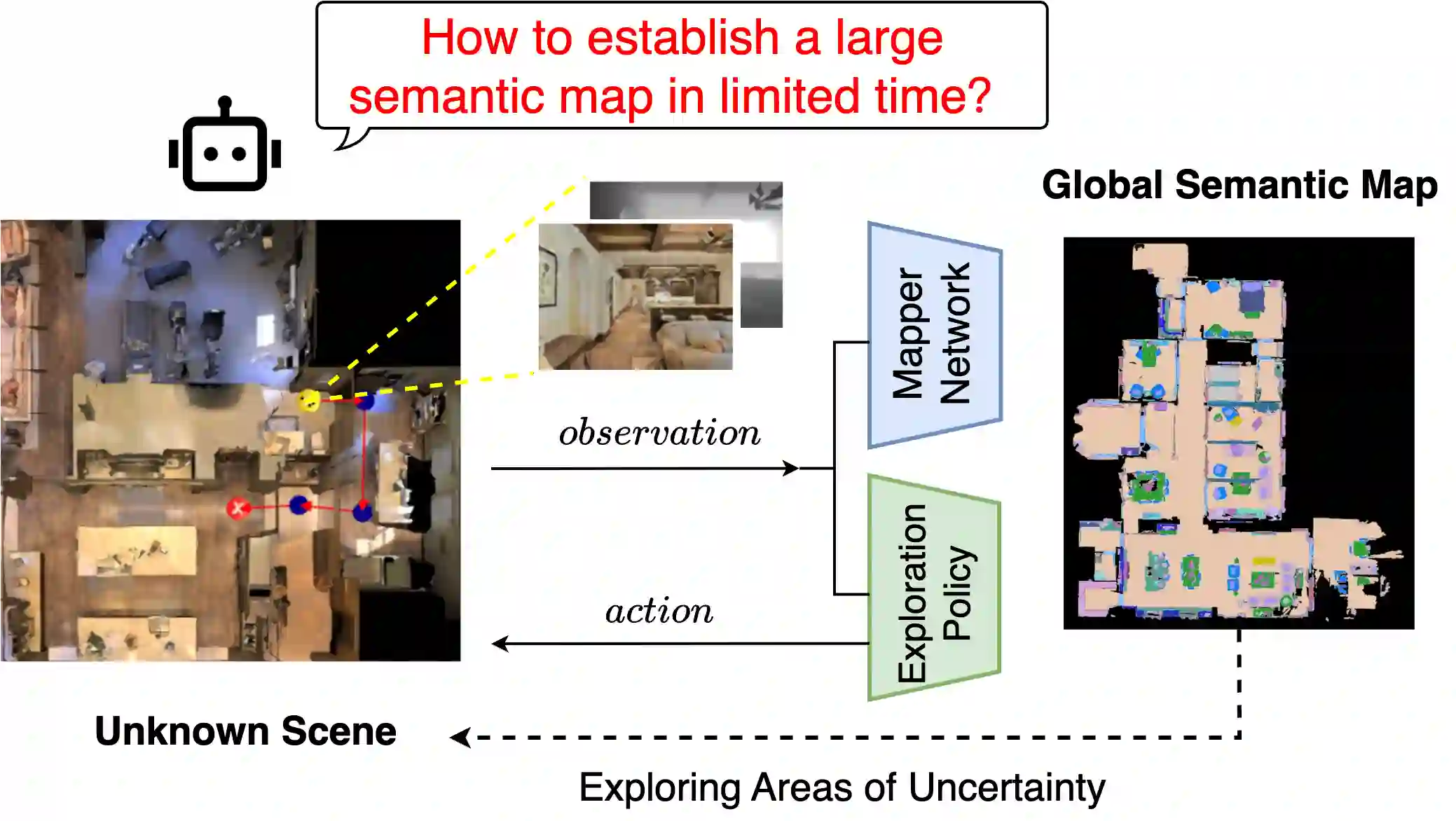

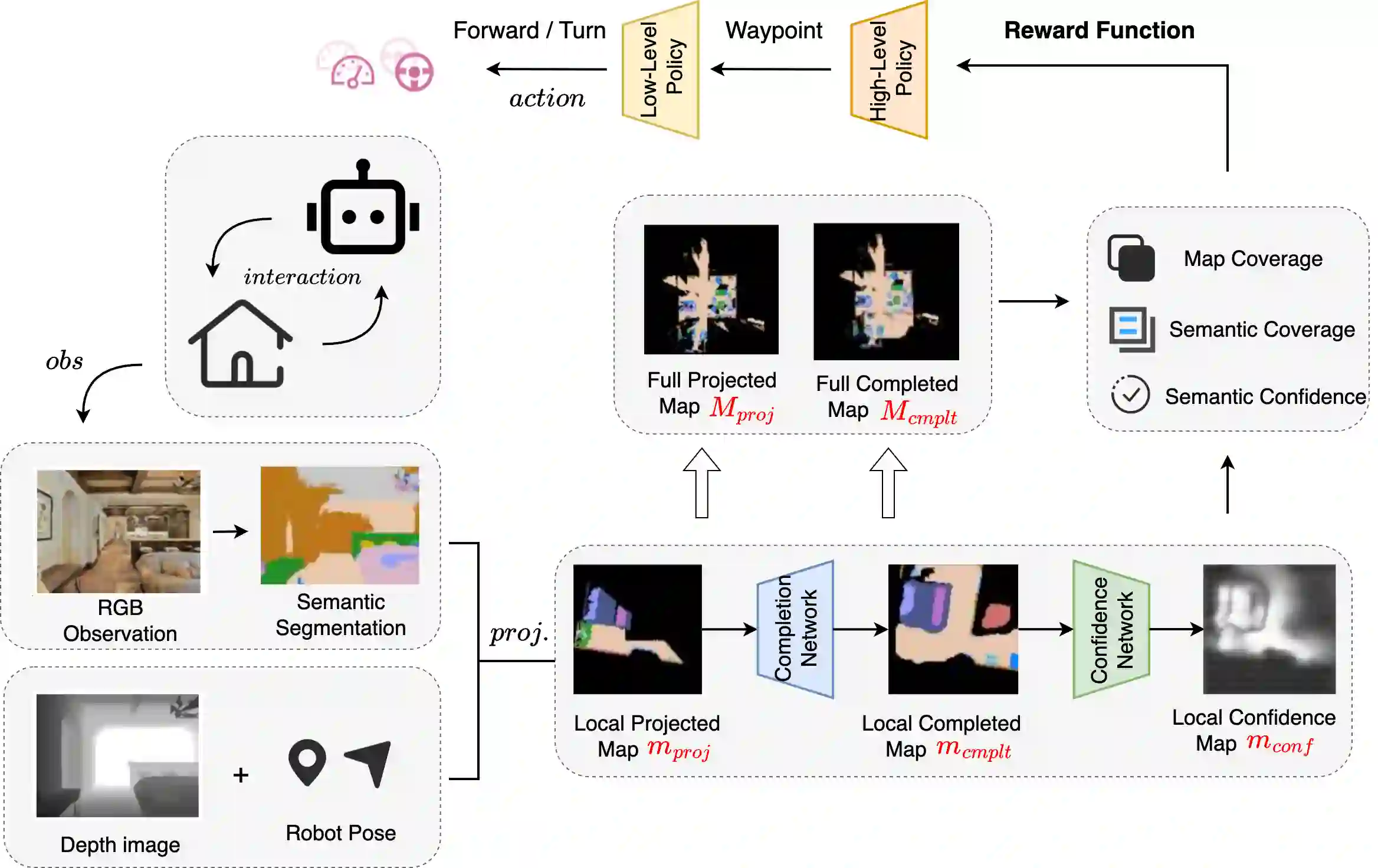

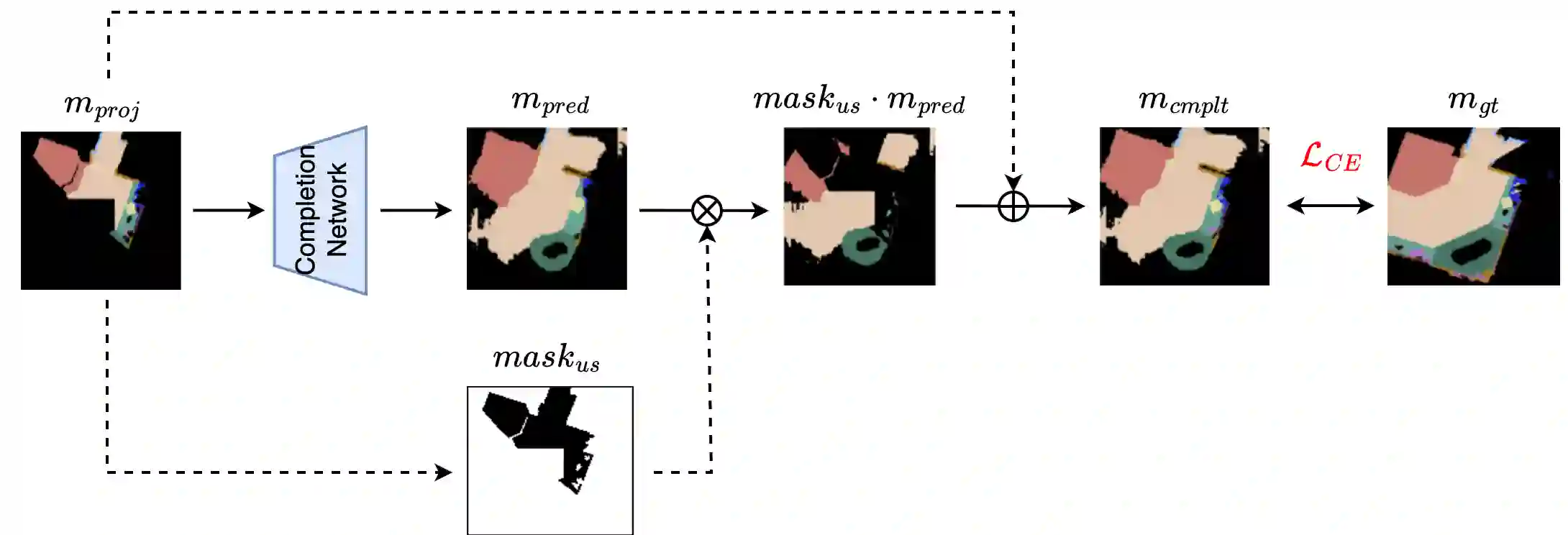

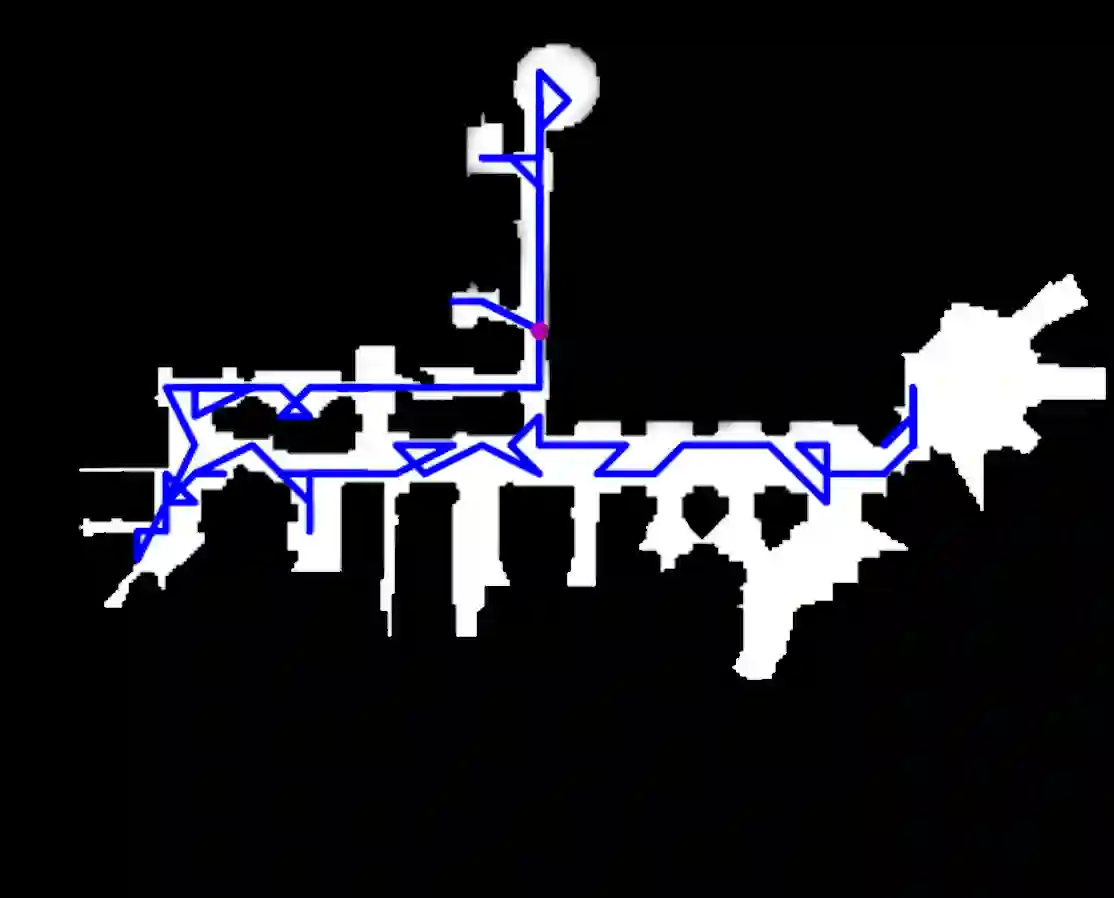

In this paper, we propose SEA, a novel approach for active robot exploration through semantic map prediction and a reinforcement learning-based hierarchical exploration policy. Unlike existing learning-based methods that rely on one-step waypoint prediction, our approach enhances the agent's long-term understanding of the environment to enable more efficient exploration. We propose an iterative prediction-exploration framework that explicitly predicts the missing areas of the map from current observations. The difference between the actual accumulated map and the predicted global map is then used to guide exploration. Additionally, we design a novel reward mechanism that uses reinforcement learning to update the long-term exploration strategy, enabling the agent to construct an accurate semantic map within a limited number of steps. Experimental results demonstrate that our method significantly outperforms state-of-the-art exploration strategies, achieving greater coverage area of the global map within the same time constraints.
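The idea of using the gap between the accumulated map and the predicted global map as an exploration signal can be illustrated with a small sketch. This is not the SEA policy itself: the abstract describes a learned hierarchical policy trained with reinforcement learning, whereas the code below swaps in a simple greedy heuristic purely for illustration. The grid encoding (0 for unknown, positive integers for semantic classes), the function names `map_discrepancy` and `pick_goal`, and the `window` parameter are all assumptions introduced here.

```python
from typing import List, Optional, Tuple

# Assumed encoding: 0 = unknown / empty, positive int = semantic class id.
Grid = List[List[int]]


def map_discrepancy(accumulated: Grid, predicted: Grid) -> List[List[bool]]:
    """Mark cells that the predictor fills in but the agent has not observed yet."""
    return [
        [acc == 0 and pred != 0 for acc, pred in zip(acc_row, pred_row)]
        for acc_row, pred_row in zip(accumulated, predicted)
    ]


def pick_goal(discrepancy: List[List[bool]], window: int = 2) -> Optional[Tuple[int, int]]:
    """Greedy stand-in for a learned policy.

    Returns the top-left (row, col) of the window x window patch that contains
    the most predicted-but-unobserved cells, or None if no such cell exists.
    Ties are broken in favor of the first patch in row-major order.
    """
    rows, cols = len(discrepancy), len(discrepancy[0])
    best: Optional[Tuple[int, int]] = None
    best_count = 0
    for r in range(rows - window + 1):
        for c in range(cols - window + 1):
            count = sum(
                discrepancy[r + dr][c + dc]
                for dr in range(window)
                for dc in range(window)
            )
            if count > best_count:
                best, best_count = (r, c), count
    return best


if __name__ == "__main__":
    # Hypothetical 4x4 maps: the agent has seen the top-left corner only.
    accumulated = [
        [1, 1, 0, 0],
        [1, 0, 0, 0],
        [0, 0, 0, 0],
        [0, 0, 0, 0],
    ]
    predicted = [
        [1, 1, 2, 0],
        [1, 1, 2, 2],
        [0, 0, 2, 2],
        [0, 0, 0, 0],
    ]
    goal = pick_goal(map_discrepancy(accumulated, predicted))
    print(goal)  # (1, 2)
```

In an iterative prediction-exploration loop, the agent would move toward the selected patch, merge new observations into the accumulated map, re-run the predictor, and repeat. A learned policy would replace `pick_goal`, and a reward based on newly covered area could be used to train it.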
