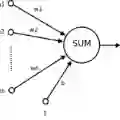

Neuromorphic computing with crossbar arrays has emerged as a promising alternative for improving the computing efficiency of machine learning. Previous work has focused on implementing crossbar arrays to perform basic mathematical operations. In this paper, we instead explore the impact of mapping the layers of an artificial neural network onto physical crossbar arrays arranged in tiles across a chip. We have developed a simplified mapping algorithm that determines the number of physical tiles, each with fixed optimal array dimensions, and estimates the minimum area occupied by these tiles for a given design objective. We compare this simplified algorithm with conventional binary linear optimization, which solves the equivalent bin-packing problem. We find that the optimum solution does not necessarily correspond to the minimum number of tiles; rather, it results from an interaction between tile array capacity and the scaling properties of the peripheral circuits. We also find that square arrays are not always the best choice for optimal mapping, and that performance optimization comes at the cost of total tile area.
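The trade-off between tile count and tile area can be illustrated with a minimal sketch. This is not the paper's mapping algorithm: it assumes each layer is cut into blocks without sharing tiles between layers (so no bin packing), and the area model, its parameters (`cell_area`, `periph_per_line`, `fixed_overhead`), and the example layer sizes are all hypothetical values chosen only for illustration.

```python
import math

def tiles_for_layer(in_dim, out_dim, rows, cols):
    """Tiles needed to hold an in_dim x out_dim weight matrix when it is cut
    into rows x cols blocks. Assumption: tiles are not shared between layers."""
    return math.ceil(in_dim / rows) * math.ceil(out_dim / cols)

def tile_area(rows, cols, cell_area=1.0, periph_per_line=20.0, fixed_overhead=500.0):
    """Hypothetical area model (arbitrary units): the array grows with
    rows * cols, the peripheral circuits grow with rows + cols, and each
    tile pays a fixed overhead."""
    return rows * cols * cell_area + (rows + cols) * periph_per_line + fixed_overhead

def total_tiles_and_area(layers, rows, cols):
    """Return (tile count, total tile area) for a list of (in_dim, out_dim) layers."""
    n_tiles = sum(tiles_for_layer(i, o, rows, cols) for i, o in layers)
    return n_tiles, n_tiles * tile_area(rows, cols)

# Hypothetical fully connected network and candidate array dimensions.
layers = [(784, 256), (256, 128), (128, 10)]
candidates = [(64, 64), (128, 128), (256, 128), (256, 256)]

results = {dims: total_tiles_and_area(layers, *dims) for dims in candidates}
fewest_tiles = min(results, key=lambda d: results[d][0])
smallest_area = min(results, key=lambda d: results[d][1])

for dims, (n, area) in results.items():
    print(dims, n, area)
print("fewest tiles:", fewest_tiles, "smallest area:", smallest_area)
```

Under these assumed parameters, the array size that needs the fewest tiles is not the one with the smallest total area, because larger arrays leave more cells unused while smaller arrays pay the peripheral overhead more often. A different choice of parameters can shift where the optimum falls.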
