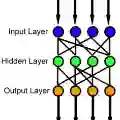

The single-layer feedforward neural network with random weights is a recurring motif in the neural networks literature. The advantage of these networks is their simplified training, which reduces to solving a ridge-regression problem. It is generally assumed that these networks require a large number of hidden neurons relative to the dimensionality of the data samples in order to achieve good classification accuracy. Contrary to this assumption, we show here that one can obtain good classification results even when the number of hidden neurons has the same order of magnitude as the dimensionality of the data samples, provided this dimensionality is reasonably high. Inspired by this result, we also develop an efficient iterative residual training method for such random neural networks, and we extend the algorithm to the least-squares kernel version of the neural network model. Moreover, we describe an encryption (obfuscation) method that can be used to protect both the data and the resulting network model.
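To make the ridge-regression training step concrete, the following is a minimal sketch rather than the method evaluated in the paper. The tanh activation, the Gaussian weight scaling, the ridge value, the synthetic two-class data, and the names `train_random_network` and `predict` are all illustrative assumptions. The hidden layer is drawn once at random and left fixed; only the output weights are fitted, in closed form, with the number of hidden neurons set equal to the data dimensionality.

```python
import numpy as np

def train_random_network(X, Y, n_hidden, ridge=1e-2, seed=0):
    """Fit the output weights of a random-weight single-layer network.

    The hidden weights W are random and never trained (assumed Gaussian here).
    The output weights B solve the ridge-regression normal equations
    (H^T H + ridge * I) B = H^T Y, where H is the hidden-layer output.
    """
    rng = np.random.default_rng(seed)
    n_features = X.shape[1]
    # Scale by 1/sqrt(n_features) so pre-activations stay of order one.
    W = rng.standard_normal((n_features, n_hidden)) / np.sqrt(n_features)
    H = np.tanh(X @ W)  # tanh is an assumed activation, not from the source
    A = H.T @ H + ridge * np.eye(n_hidden)
    B = np.linalg.solve(A, H.T @ Y)
    return W, B

def predict(X, W, B):
    """Return the index of the largest output for each sample."""
    return np.argmax(np.tanh(X @ W) @ B, axis=1)

# Hypothetical toy data: two Gaussian classes in d = 50 dimensions.
rng = np.random.default_rng(1)
d, n = 50, 400
labels = rng.integers(0, 2, size=n)
class_means = np.where(labels[:, None] == 1, 1.0, -1.0)
X = class_means + rng.standard_normal((n, d))
Y = np.eye(2)[labels]  # one-hot targets

# Number of hidden neurons equal to the data dimensionality.
W, B = train_random_network(X[:300], Y[:300], n_hidden=d)
accuracy = np.mean(predict(X[300:], W, B) == labels[300:])
print(f"held-out accuracy: {accuracy:.3f}")
```

Solving the normal equations with `np.linalg.solve` avoids forming an explicit matrix inverse. Because the hidden layer is fixed, the whole fit is a single linear solve of size `n_hidden`.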
