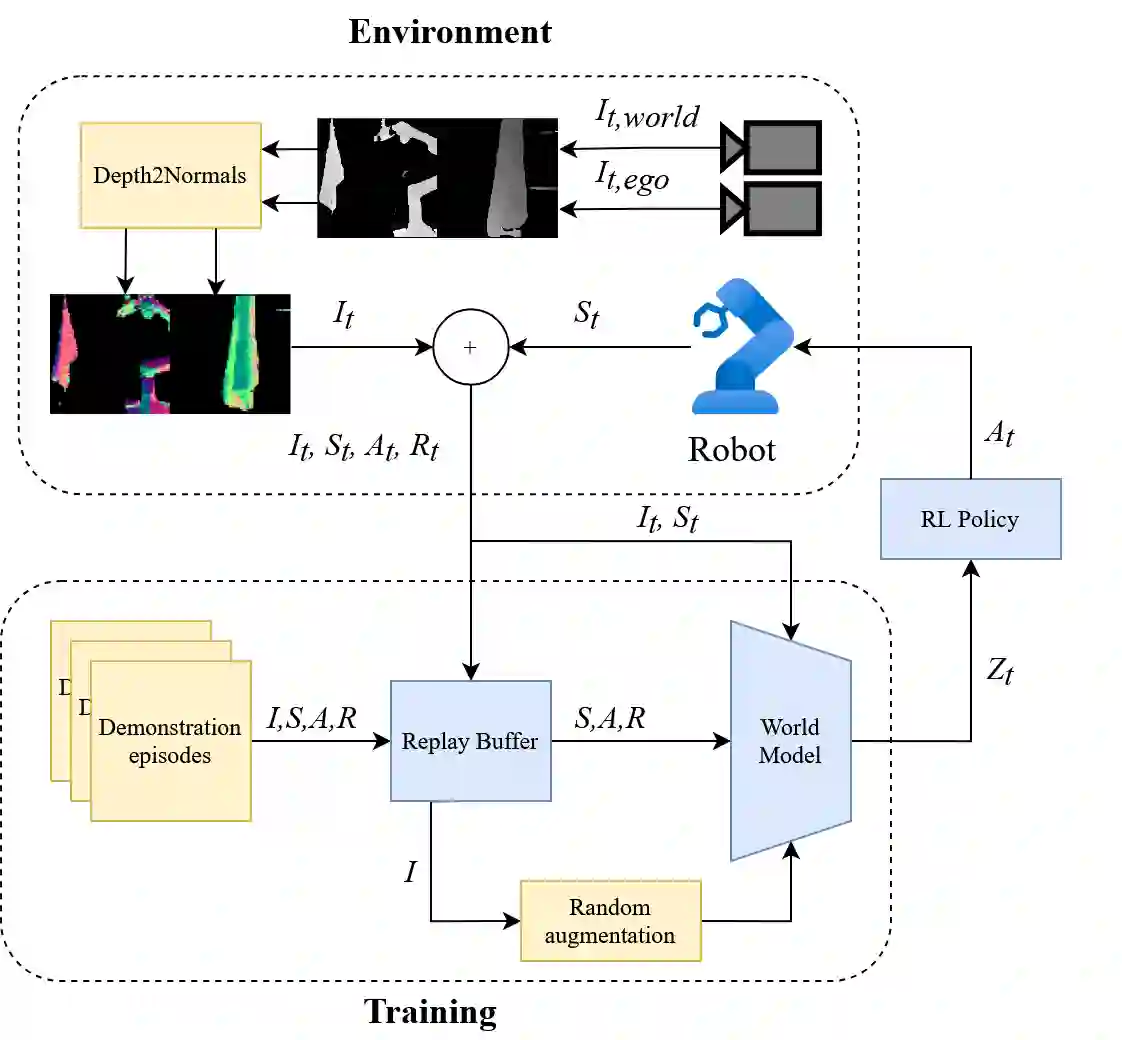

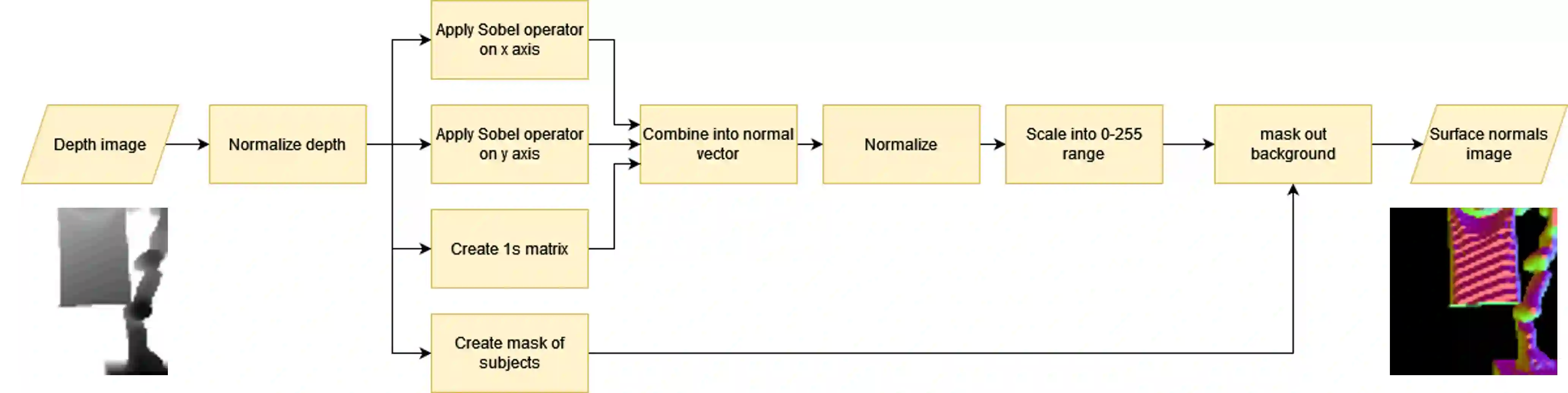

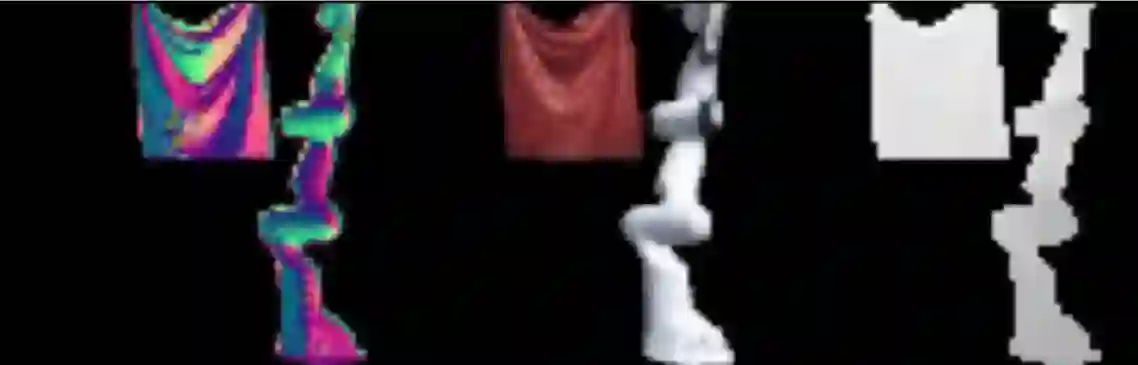

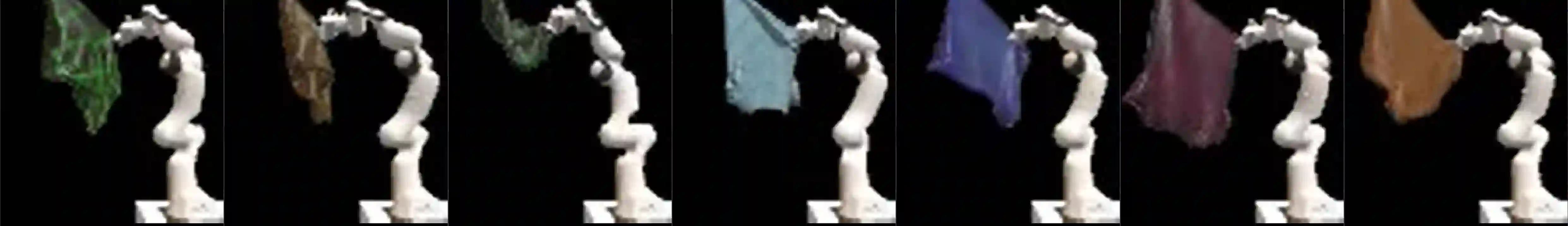

Learning to manipulate cloth is both a paradigmatic problem for robotics research and a problem of immediate relevance to applications ranging from assistive care to the service industry. The complex physics of deformable objects makes cloth manipulation nontrivial. To create a general manipulation strategy that handles a variety of shapes, sizes, and fold and wrinkle patterns, in addition to the usual appearance variations, it becomes important to carefully consider model structure and its implications for generalisation performance. In this paper, we present an approach to in-air cloth manipulation that uses a variation of a recently proposed reinforcement learning architecture, DreamerV2. Our implementation modifies this architecture to utilise surface normals as input, and also modifies the replay buffer and data augmentation procedures. Taken together, these modifications enhance the world model used by the robot, addressing the physical complexity of the object being manipulated. We present evaluations both in simulation and in a zero-shot deployment of the trained policies on a physical robot setup, performing in-air unfolding of a variety of cloth types and demonstrating the generalisation benefits of our proposed architecture.
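To make the notion of a surface normals input concrete, the following is a minimal sketch of one common way to estimate per-pixel normals from a depth image using finite differences. The abstract does not say how the normals are obtained, so the function name `depth_to_normals`, the unit pixel spacing, and the orthographic simplification are all assumptions made for illustration, not the authors' pipeline.

```python
import numpy as np


def depth_to_normals(depth):
    """Estimate unit surface normals from a depth map (hypothetical helper).

    Assumes an orthographic view with unit pixel spacing, which is a
    simplification; a real camera would need its intrinsics.
    """
    depth = np.asarray(depth, dtype=np.float64)
    # np.gradient returns derivatives along axis 0 (rows, y) then axis 1 (columns, x).
    dz_dy, dz_dx = np.gradient(depth)
    # For a surface z = f(x, y), an unnormalised normal is (-dz/dx, -dz/dy, 1).
    normals = np.stack([-dz_dx, -dz_dy, np.ones_like(depth)], axis=-1)
    # The z component is 1, so the norm is at least 1 and division is safe.
    return normals / np.linalg.norm(normals, axis=-1, keepdims=True)


if __name__ == "__main__":
    flat = np.full((4, 5), 2.0)  # a flat surface facing the camera
    print(depth_to_normals(flat)[0, 0])
```

The resulting `(H, W, 3)` array could be fed to an image encoder in place of, or alongside, RGB channels. One plausible motivation is that normals depend on geometry rather than colour or texture, though the abstract itself only states that the modifications improve generalisation.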
