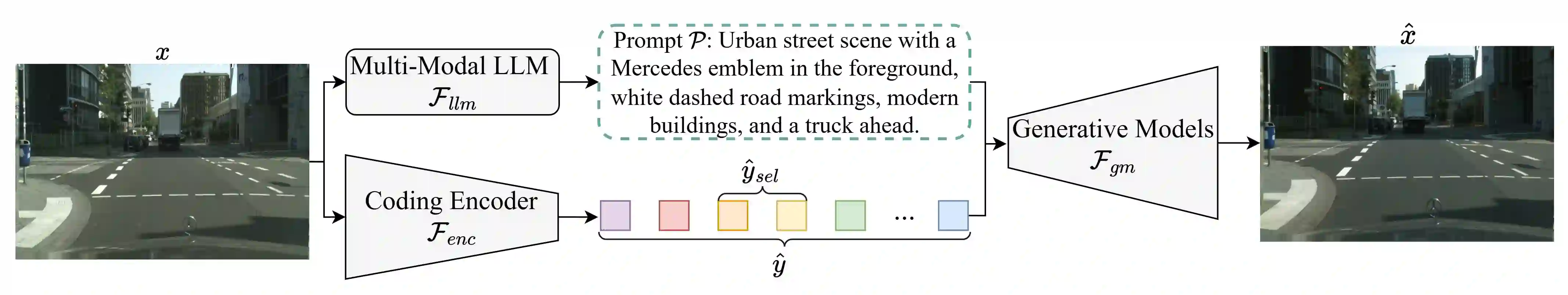

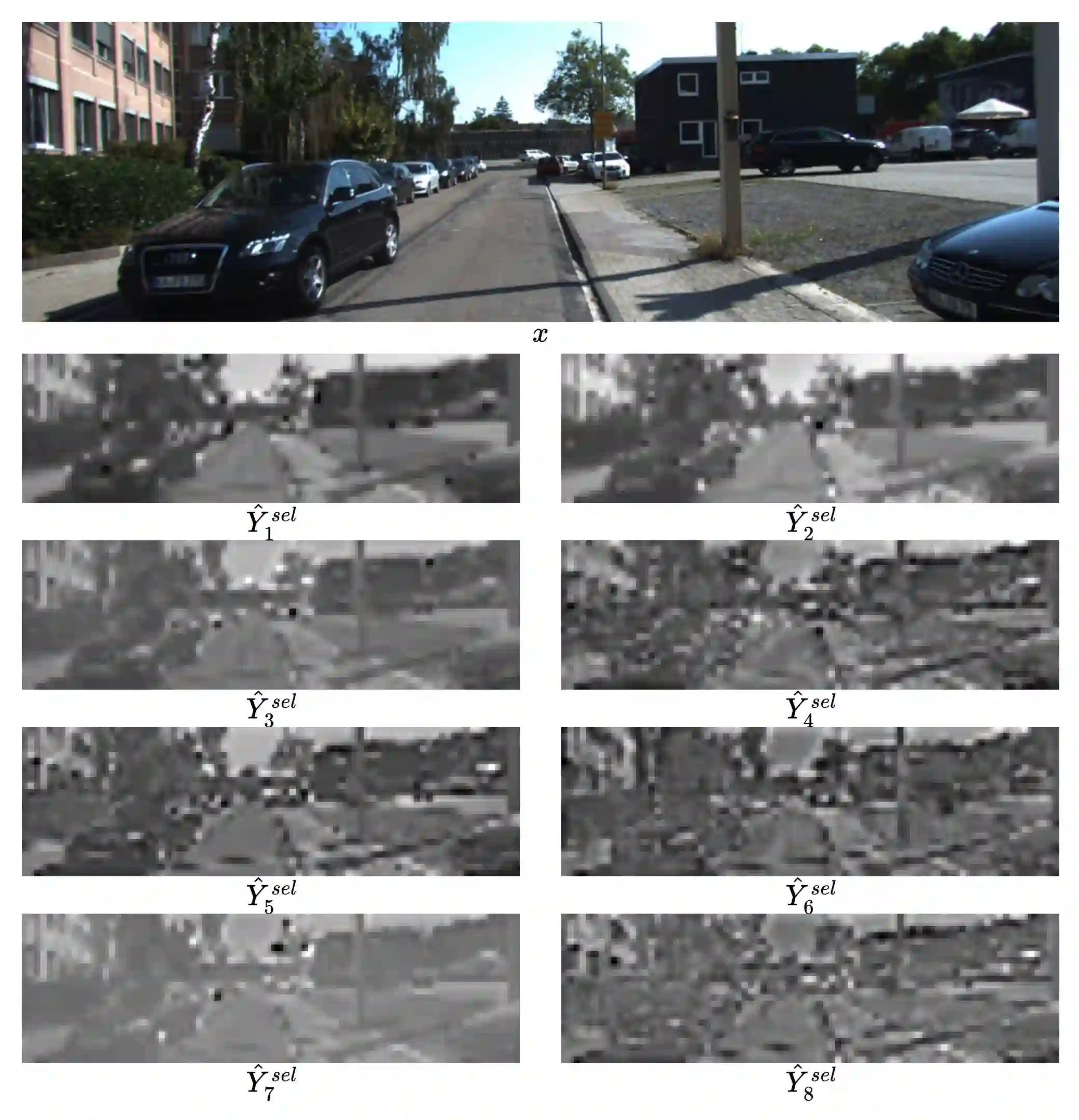

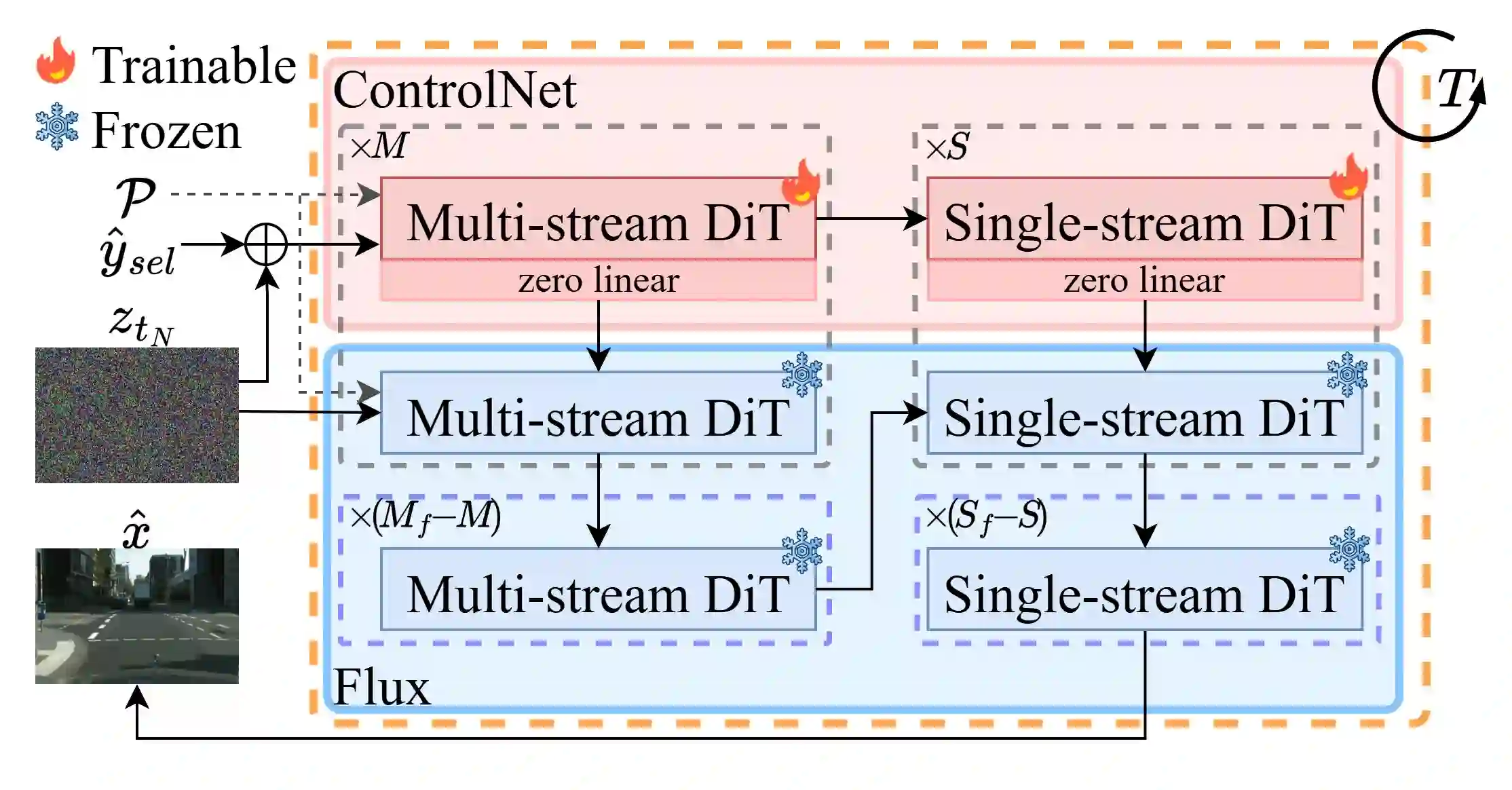

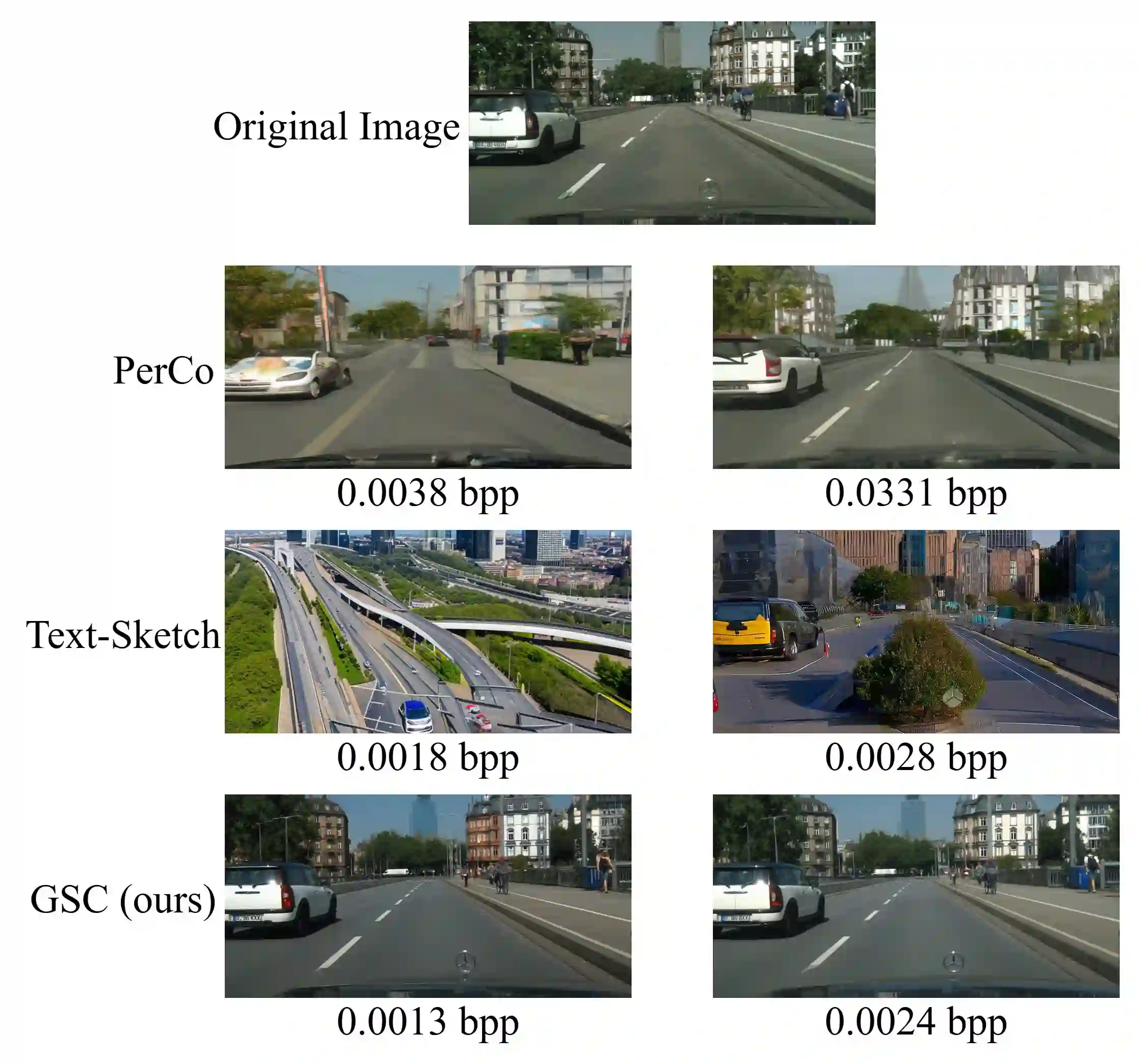

We consider the problem of ultra-low bit rate visual communication for remote vision analysis, human interactions and control in challenging scenarios with very low communication bandwidth, such as deep space exploration, battlefield intelligence, and robot navigation in complex environments. In this paper, we ask the following important question: can we accurately reconstruct the visual scene using only a very small portion of the bit rate in existing coding methods while not sacrificing the accuracy of vision analysis and performance of human interactions? Existing text-to-image generation models offer a new approach for ultra-low bitrate image description. However, they can only achieve a semantic-level approximation of the visual scene, which is far insufficient for the purpose of visual communication and remote vision analysis and human interactions. To address this important issue, we propose to seamlessly integrate image generation with deep image compression, using joint text and coding latent to guide the rectified flow models for precise generation of the visual scene. The semantic text description and coding latent are both encoded and transmitted to the decoder at a very small bit rate. Experimental results demonstrate that our method can achieve the same image reconstruction quality and vision analysis accuracy as existing methods while using much less bandwidth. The code will be released upon paper acceptance.

翻译:本文研究在通信带宽极低的挑战性场景(如深空探测、战场情报、复杂环境下的机器人导航)中,面向远程视觉分析、人机交互与控制任务的超低比特率视觉通信问题。本文提出一个关键问题:能否仅使用现有编码方法中极小部分的比特率,在不牺牲视觉分析精度与人机交互性能的前提下,准确重建视觉场景?现有的文本到图像生成模型为超低比特率图像描述提供了新思路,但其仅能实现视觉场景的语义级近似,远不能满足视觉通信、远程视觉分析及人机交互的需求。为解决这一重要问题,我们提出将图像生成与深度图像压缩无缝融合,利用联合文本与编码隐变量引导修正流模型,以实现视觉场景的精确生成。语义文本描述与编码隐变量均以极低比特率编码并传输至解码端。实验结果表明,本方法在显著降低带宽占用的同时,能够达到与现有方法相当的图像重建质量与视觉分析精度。代码将在论文录用后公开。