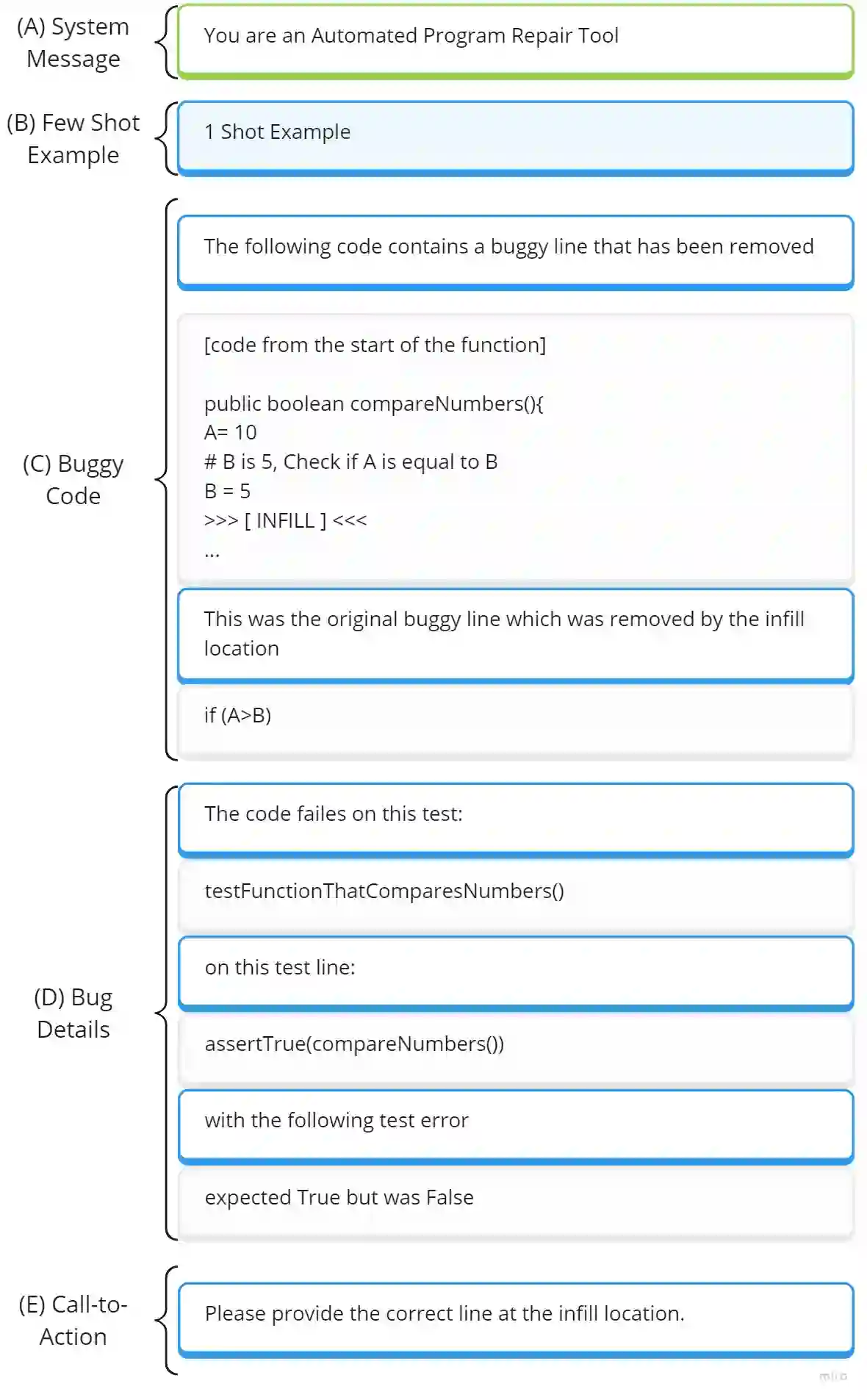

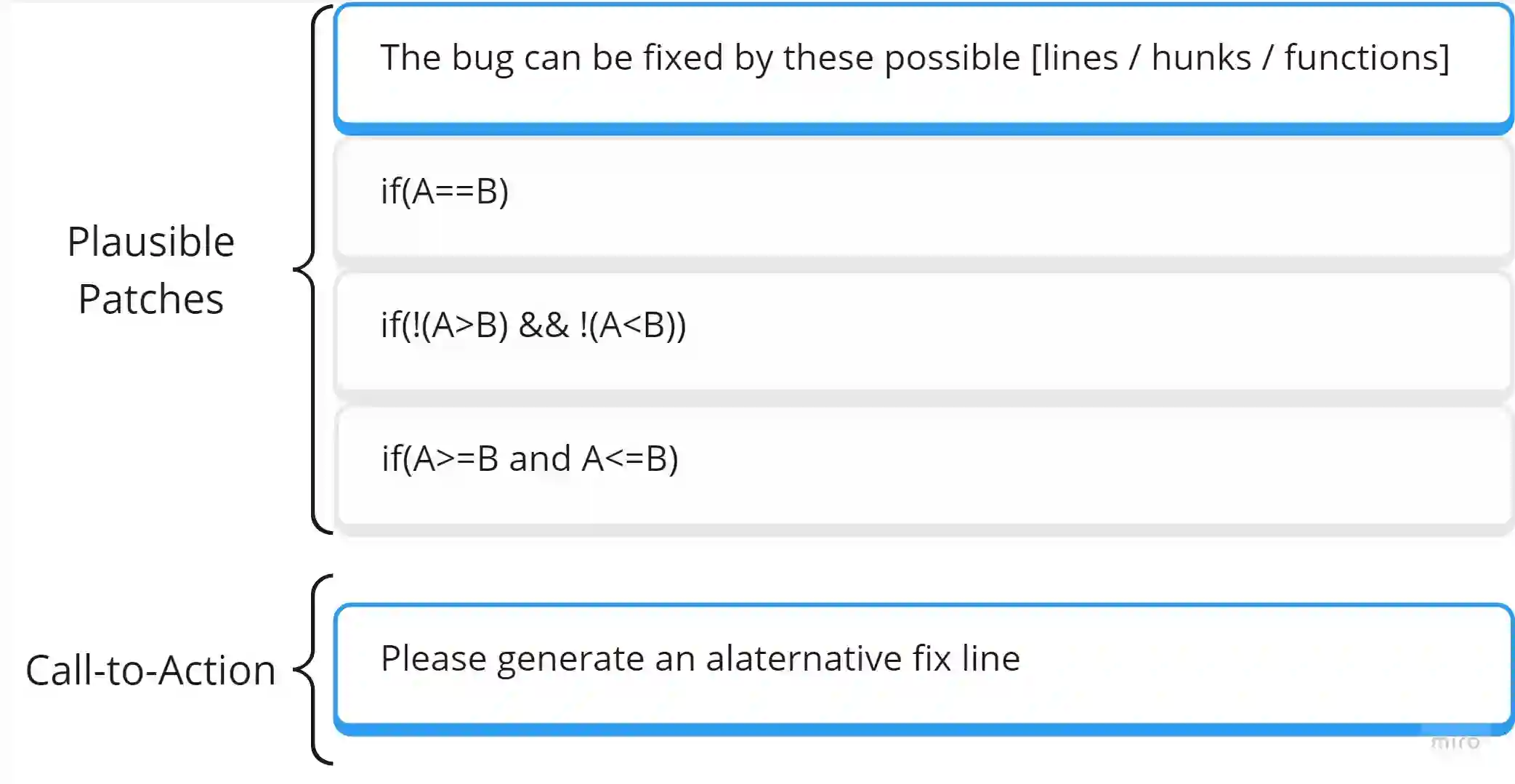

Large language models (LLMs) have proven to be effective at automated program repair (APR). However, using LLMs can be highly costly, with companies invoicing users by the number of tokens. In this paper, we propose CigaR, the first LLM-based APR tool that focuses on minimizing the repair cost. CigaR works in two major steps: generating a plausible patch and multiplying plausible patches. CigaR optimizes the prompts and the prompt setting to maximize the information given to LLMs in the smallest possible number of tokens. Our experiments on 267 bugs from the widely used Defects4J dataset show that CigaR reduces the token cost by 62%. On average, CigaR spends 171k tokens per bug while the baseline uses 451k tokens. On the subset of bugs that are fixed by both, CigaR spends 20k tokens per bug while the baseline uses 695k tokens, a cost saving of 97%. Our extensive experiments show that CigaR is a cost-effective LLM-based program repair tool that uses a low number of tokens to generate automatic patches.
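The reported savings can be checked against the per-bug token averages quoted above. The following is a minimal sketch, not part of CigaR itself: the helper name `token_saving` is hypothetical, and because the inputs are the rounded averages from the abstract, the recomputed percentages are only approximate.

```python
def token_saving(tool_tokens: float, baseline_tokens: float) -> float:
    """Relative token saving of a tool over a baseline, as a percentage."""
    if baseline_tokens <= 0:
        raise ValueError("baseline_tokens must be positive")
    return (1 - tool_tokens / baseline_tokens) * 100


# Rounded per-bug averages taken from the abstract (in tokens).
overall = token_saving(171_000, 451_000)      # all 267 bugs
both_fixed = token_saving(20_000, 695_000)    # bugs fixed by both tools

print(f"all bugs: {overall:.1f}%")               # all bugs: 62.1%
print(f"bugs fixed by both: {both_fixed:.1f}%")  # bugs fixed by both: 97.1%
```

Both values agree with the 62% and 97% figures stated in the abstract once rounded to whole percentages.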
