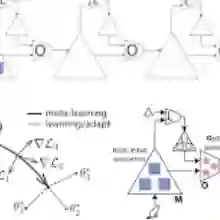

Large language models (LLMs) often struggle to learn from corrective feedback within a conversational context. They rarely solicit this feedback proactively, even when faced with ambiguity, which can make their dialogues feel static, one-sided, and lacking the adaptive qualities of human conversation. To address these limitations, we draw inspiration from social meta-learning (SML) in humans: the process of learning how to learn from others. We formulate SML as a finetuning methodology, training LLMs to solicit and learn from language feedback in simulated pedagogical dialogues, where static tasks are converted into interactive social learning problems. SML effectively teaches models to use conversation to solve problems they are unable to solve in a single turn. This capability generalises across domains: SML on math problems produces models that make better use of feedback to solve coding problems, and vice versa. Furthermore, despite being trained only on fully-specified problems, these models are better able to solve underspecified tasks where critical information is revealed over multiple turns. When faced with this ambiguity, SML-trained models make fewer premature answer attempts and are more likely to ask for the information they need. This work presents a scalable approach to developing AI systems that effectively learn from language feedback.
