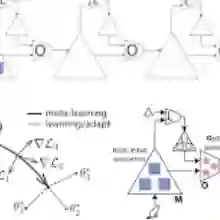

Large language models (LLMs) are increasingly evaluated on reasoning tasks, yet their logical abilities remain contested. To address this, we study LLM reasoning in a well-defined fragment of logic: syllogistic reasoning. We cast the problem as premise selection and construct controlled datasets to isolate logical competence. Beyond evaluation, an open challenge is enabling LLMs to acquire abstract inference patterns that generalize to novel structures. We propose applying few-shot meta-learning to this domain, which encourages models to extract rules across tasks rather than memorize patterns within tasks. Although meta-learning has been little explored in the context of logic learnability, our experiments show that it is effective: small models (1.5B to 7B parameters) fine-tuned with meta-learning show strong gains in generalization, with especially pronounced benefits in low-data regimes. These meta-learned models outperform GPT-4o and o3-mini on our syllogistic reasoning task.

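To make the premise-selection framing in the abstract concrete, the following is a minimal sketch, not the paper's actual task format or dataset. It assumes a knowledge base restricted to universal affirmatives ("All X are Y"), encoded as `(X, Y)` pairs, and a hypothesis of the same form; the function name `select_premises` and the example terms are hypothetical. Under these assumptions, selecting the premises needed to derive a hypothesis reduces to finding a shortest chain of premises linking its subject to its predicate.

```python
from collections import deque


def select_premises(premises, hypothesis):
    """Return a shortest chain of 'All X are Y' premises entailing the hypothesis.

    premises:   list of (X, Y) pairs, each read as "All X are Y" (assumed encoding).
    hypothesis: an (A, C) pair, read as "All A are C".
    Returns the list of premises forming the chain, or None if no chain exists.
    """
    start, goal = hypothesis

    # Index premises by their subject term so each term maps to its outgoing edges.
    outgoing = {}
    for subject, predicate in premises:
        outgoing.setdefault(subject, []).append((subject, predicate))

    # Breadth-first search: the first time the goal is reached, the chain is shortest.
    queue = deque([(start, [])])
    visited = {start}
    while queue:
        term, chain = queue.popleft()
        if term == goal:
            return chain
        for premise in outgoing.get(term, []):
            next_term = premise[1]
            if next_term not in visited:
                visited.add(next_term)
                queue.append((next_term, chain + [premise]))
    return None


# Illustrative knowledge base with two distractor premises.
kb = [
    ("cat", "mammal"),
    ("mammal", "animal"),
    ("bird", "animal"),      # distractor
    ("animal", "organism"),
    ("cat", "pet"),          # distractor
]

needed = select_premises(kb, ("cat", "organism"))
underivable = select_premises(kb, ("bird", "pet"))
```

Here `needed` contains only the three premises that chain "cat" to "organism", and `underivable` is `None` because no chain links "bird" to "pet". A symbolic search like this only describes what a correct selection looks like for this restricted fragment; the abstract's question is whether a language model can learn to make such selections and generalize them to unseen structures.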