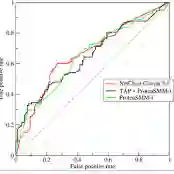

Large Language Models (LLMs) are increasingly deployed in critical applications requiring reliable reasoning, yet their internal reasoning processes remain difficult to evaluate systematically. Existing methods focus on final-answer correctness, providing limited insight into how reasoning unfolds across intermediate steps. We present EvalQReason, a framework that quantifies LLM reasoning quality through step-level probability distribution analysis without requiring human annotation. The framework introduces two complementary algorithms: Consecutive Step Divergence (CSD), which measures local coherence between adjacent reasoning steps, and Step-to-Final Convergence (SFC), which assesses global alignment with final answers. Each algorithm employs five statistical metrics to capture reasoning dynamics. Experiments across mathematical and medical datasets with open-source 7B-parameter models demonstrate that CSD-based features achieve strong predictive performance for correctness classification, with classical machine learning models reaching F1=0.78 and ROC-AUC=0.82, and sequential neural models substantially improving performance (F1=0.88, ROC-AUC=0.97). CSD consistently outperforms SFC, and sequential architectures outperform classical machine learning approaches. Critically, reasoning dynamics prove domain-specific: mathematical reasoning exhibits clear divergence-based discrimination patterns between correct and incorrect solutions, while medical reasoning shows minimal discriminative signals, revealing fundamental differences in how LLMs process different reasoning types. EvalQReason enables scalable, process-aware evaluation of reasoning reliability, establishing probability-based divergence analysis as a principled approach for trustworthy AI deployment.
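To make the distinction between the two algorithms concrete, the following is a minimal sketch of CSD and SFC under stated assumptions, not the authors' implementation. The abstract names neither the divergence measure nor the five statistical metrics, so the sketch assumes Jensen-Shannon divergence and uses three placeholder summary statistics (mean, max, standard deviation). It also assumes each reasoning step has already been reduced to a single probability distribution over a shared vocabulary. The function names `js_divergence`, `csd`, `sfc`, and `summarize` are hypothetical.

```python
import math
from statistics import mean, pstdev


def js_divergence(p, q):
    """Jensen-Shannon divergence (natural log) between two discrete distributions.

    Assumption: the source does not say which divergence is used; JS is chosen
    here because it is symmetric and bounded by ln(2).
    """
    m = [(a + b) / 2 for a, b in zip(p, q)]

    def kl(x, y):
        # Terms with x_i == 0 contribute nothing; y_i > 0 whenever x_i > 0.
        return sum(a * math.log(a / b) for a, b in zip(x, y) if a > 0)

    return 0.5 * kl(p, m) + 0.5 * kl(q, m)


def csd(steps):
    """Consecutive Step Divergence: one value per pair of adjacent steps."""
    return [js_divergence(a, b) for a, b in zip(steps, steps[1:])]


def sfc(steps):
    """Step-to-Final Convergence: each non-final step compared with the final step."""
    final = steps[-1]
    return [js_divergence(s, final) for s in steps[:-1]]


def summarize(values):
    """Placeholder summary features; these are NOT the paper's five metrics."""
    return {"mean": mean(values), "max": max(values), "std": pstdev(values)}


# Toy example: three reasoning steps, each a distribution over three tokens.
steps = [
    [0.70, 0.20, 0.10],
    [0.60, 0.30, 0.10],
    [0.10, 0.10, 0.80],  # final step, which shifts sharply away from the others
]

print("CSD features:", summarize(csd(steps)))
print("SFC features:", summarize(sfc(steps)))
```

In this toy trace the second CSD value is larger than the first, because the final step departs sharply from the preceding one. Feature vectors of this kind could then be passed to a downstream correctness classifier, as the abstract describes.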
