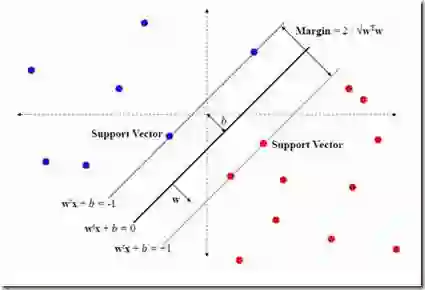

Human emotions can be conveyed through nuanced touch gestures. However, it is not well understood how consistently emotions can be conveyed to robots through touch. This study explores the consistency of touch-based emotional expression toward a robot by integrating tactile and auditory sensing of affective haptic expressions. We developed a piezoresistive pressure sensor and used a microphone to capture the touch and sound channels, respectively. In a study with 28 participants, each participant conveyed 10 emotions to a robot using spontaneous touch gestures. Our findings reveal statistically significant consistency in emotion expression among participants. However, some emotions obtained low intraclass correlation values. Additionally, certain emotions with similar levels of arousal or valence did not differ significantly in the way they were conveyed. We subsequently constructed a multimodal model integrating touch and audio features to decode the 10 emotions. A support vector machine (SVM) model demonstrated the highest accuracy, achieving 40% across the 10 classes, with "Attention" being the most accurately conveyed emotion at a balanced accuracy of 87.65%.
