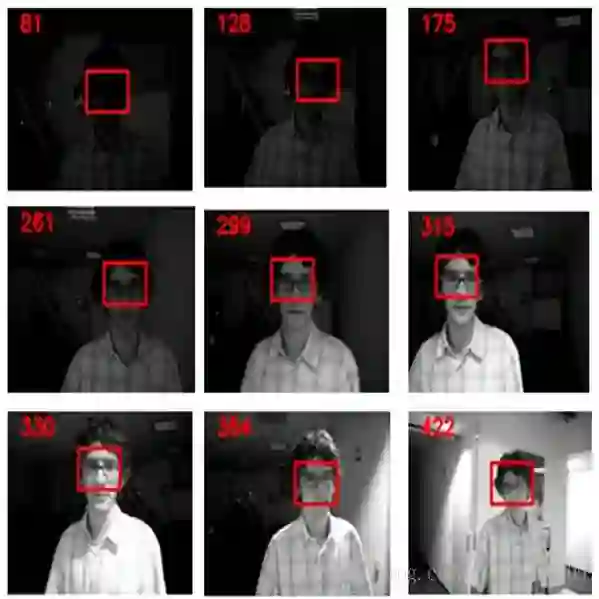

Referring Multi-Object Tracking (RMOT) aims to track specific targets based on language descriptions and is vital for interactive AI systems in applications such as robotics and autonomous driving. However, existing RMOT models rely solely on 2D RGB data. Without explicit 3D spatial information, they struggle to accurately detect and associate targets described by complex spatial semantics (e.g., "the person closest to the camera") and to maintain reliable identities under severe occlusion. In this work, we propose a novel task, RGBD Referring Multi-Object Tracking (DRMOT), which explicitly requires models to fuse RGB, Depth (D), and Language (L) modalities to achieve 3D-aware tracking. To advance research on DRMOT, we construct DRSet, a tailored RGBD referring multi-object tracking dataset designed to evaluate models' spatial-semantic grounding and tracking capabilities. Specifically, DRSet contains RGB images and depth maps from 187 scenes, along with 240 language descriptions, 56 of which incorporate depth-related information. Furthermore, we propose DRTrack, a depth-referring tracking framework guided by a multimodal large language model (MLLM). DRTrack performs depth-aware target grounding from joint RGB-D-L inputs and enforces robust trajectory association by incorporating depth cues. Extensive experiments on DRSet demonstrate the effectiveness of our framework.
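
The abstract does not specify how DRTrack uses depth, so the following is only a minimal Python sketch, under our own assumptions, of two generic ways a depth map can help. First, a spatial phrase such as "the person closest to the camera" can be resolved by comparing the median depth inside each candidate box. Second, a relative depth-gap term can be added to an IoU-based association cost, so that tracks that overlap in the image but lie at different depths are not swapped. Every name here (`Box`, `box_depth`, `closest_to_camera`, `associate`, `depth_weight`, `max_cost`) is hypothetical, and the greedy matcher is a plain stand-in for whatever assignment step the actual framework uses; this is not DRTrack's method.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Box:
    """Hypothetical axis-aligned box in pixel coordinates (x1, y1, x2, y2)."""
    x1: float
    y1: float
    x2: float
    y2: float


def box_depth(box: Box, depth_map: list[list[float]]) -> float:
    """Median depth inside the box; the median is robust to stray background pixels."""
    values = [
        depth_map[y][x]
        for y in range(int(box.y1), int(box.y2))
        for x in range(int(box.x1), int(box.x2))
        if depth_map[y][x] > 0  # assumption: 0 marks a missing depth reading
    ]
    return median(values) if values else float("inf")


def closest_to_camera(boxes: list[Box], depth_map: list[list[float]]) -> int:
    """Index of the box with the smallest median depth ("closest to the camera")."""
    depths = [box_depth(b, depth_map) for b in boxes]
    return min(range(len(boxes)), key=depths.__getitem__)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes."""
    ix = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    iy = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = ix * iy
    union = (a.x2 - a.x1) * (a.y2 - a.y1) + (b.x2 - b.x1) * (b.y2 - b.y1) - inter
    return inter / union if union > 0 else 0.0


def associate(track_boxes, track_depths, det_boxes, det_depths,
              depth_weight: float = 0.5, max_cost: float = 0.8):
    """Greedy track-detection matching on cost = (1 - IoU) + depth_weight * relative depth gap."""
    candidates = []
    for t, (tb, td) in enumerate(zip(track_boxes, track_depths)):
        for d, (db, dd) in enumerate(zip(det_boxes, det_depths)):
            depth_gap = abs(td - dd) / max(td, dd, 1e-6)
            cost = (1.0 - iou(tb, db)) + depth_weight * depth_gap
            if cost <= max_cost:  # pairs above the gate are never matched
                candidates.append((cost, t, d))
    matches, used_tracks, used_dets = [], set(), set()
    for _, t, d in sorted(candidates):  # cheapest pairs are claimed first
        if t not in used_tracks and d not in used_dets:
            matches.append((t, d))
            used_tracks.add(t)
            used_dets.add(d)
    return matches


if __name__ == "__main__":
    # Two tracks whose boxes coincide in the image (full occlusion) but differ in depth.
    same = Box(0, 0, 10, 10)
    print(associate([same, same], [2.0, 6.0], [same, same], [6.0, 2.0]))  # [(0, 1), (1, 0)]
```

In the usage case, IoU alone scores every track-detection pairing as a perfect match, so identity assignment would be arbitrary; the depth term breaks the tie and keeps each identity on the detection at the matching depth.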
