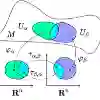

Statistical learning under distributional drift remains poorly characterized, especially in closed-loop settings where learning alters the data-generating law. We introduce an intrinsic drift budget $C_T$ that quantifies the cumulative information-geometric motion of the data distribution along the realized learner-environment trajectory, measured in Fisher-Rao distance (the geodesic distance of the Riemannian metric induced by Fisher information on a statistical manifold of data-generating laws). The budget decomposes this motion into an exogenous component (environmental drift that would occur without intervention) and a policy-sensitive feedback component (drift induced by the learner's actions through the closed loop). This yields a rate-based characterization: in prequential reproducibility bounds, where performance on the realized stream is used to predict one-step-ahead performance under the next distribution, the drift contribution enters through the average drift rate $C_T/T$, i.e., the cumulative Fisher-Rao motion normalized per time step. We prove a drift-feedback bound of order $T^{-1/2} + C_T/T$ (up to a controlled second-order remainder) and establish a matching minimax lower bound on a canonical subclass, showing that this dependence is tight up to constants. Consequently, when $C_T/T$ is nonnegligible, one-step-ahead reproducibility admits an irreducible accuracy floor of the same order. Finally, the framework places exogenous drift, adaptive data analysis, and performative feedback within a common geometric account of distributional motion.
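To make the rate $T^{-1/2} + C_T/T$ concrete, the following is a minimal numerical sketch, not the paper's construction. It assumes that the data-generating laws are categorical distributions, that $C_T$ can be approximated by summing Fisher-Rao distances between consecutive laws, and that all constants in the bound equal 1. The function names (`fisher_rao_categorical`, `drift_budget`, `rate_proxy`, `bernoulli_path`) are hypothetical and introduced only for illustration. The closed form $d(p, q) = 2 \arccos \sum_i \sqrt{p_i q_i}$ is the standard Fisher-Rao distance on the probability simplex.

```python
import math


def fisher_rao_categorical(p, q):
    """Fisher-Rao geodesic distance between two categorical distributions.

    Uses the standard closed form 2 * arccos(sum_i sqrt(p_i * q_i)).
    """
    bc = sum(math.sqrt(pi * qi) for pi, qi in zip(p, q))
    bc = min(1.0, max(0.0, bc))  # clamp against floating-point overshoot
    return 2.0 * math.acos(bc)


def drift_budget(trajectory):
    """Assumed discretization of C_T: sum of stepwise Fisher-Rao distances."""
    return sum(
        fisher_rao_categorical(a, b)
        for a, b in zip(trajectory, trajectory[1:])
    )


def rate_proxy(T, C_T):
    """Order of the bound, T^{-1/2} + C_T / T, with all constants set to 1 (assumption)."""
    return T ** -0.5 + C_T / T


def bernoulli_path(T, start, end):
    """Hypothetical trajectory: T + 1 Bernoulli laws with parameter moving linearly."""
    thetas = (start + (end - start) * k / T for k in range(T + 1))
    return [(1.0 - theta, theta) for theta in thetas]


if __name__ == "__main__":
    for T in (100, 1_000, 10_000):
        # Bounded total drift: the same overall motion spread over more steps.
        bounded = drift_budget(bernoulli_path(T, 0.2, 0.8))
        # Linear drift: a fixed per-step budget, so C_T grows like T.
        linear = 0.05 * T
        print(T, rate_proxy(T, bounded), rate_proxy(T, linear))
```

Under these assumptions, the proxy decays toward zero when the total drift stays bounded, whereas a budget growing linearly in $T$ leaves a floor equal to the per-step drift rate, which is the regime the abstract describes as an irreducible accuracy floor.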
