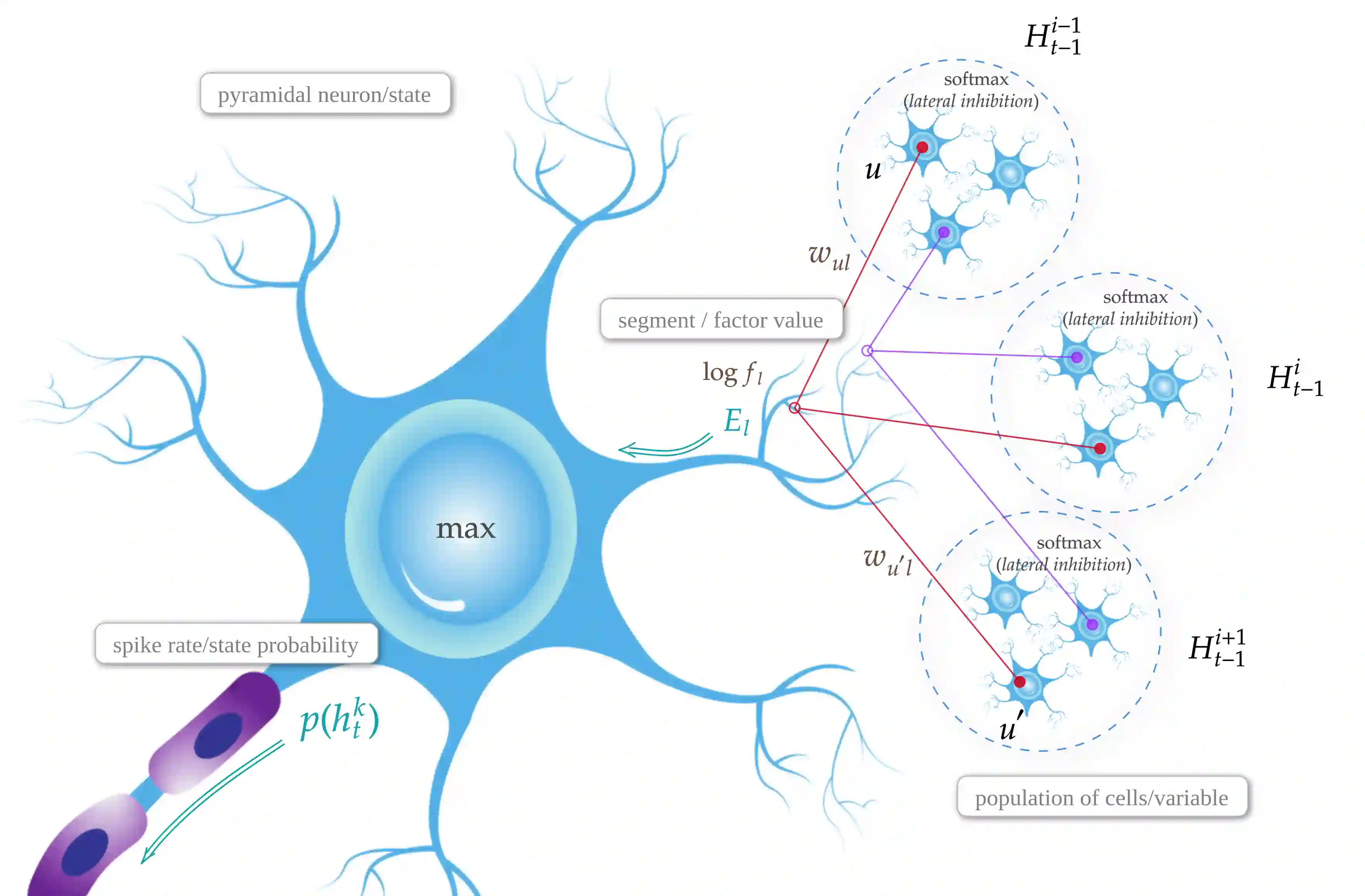

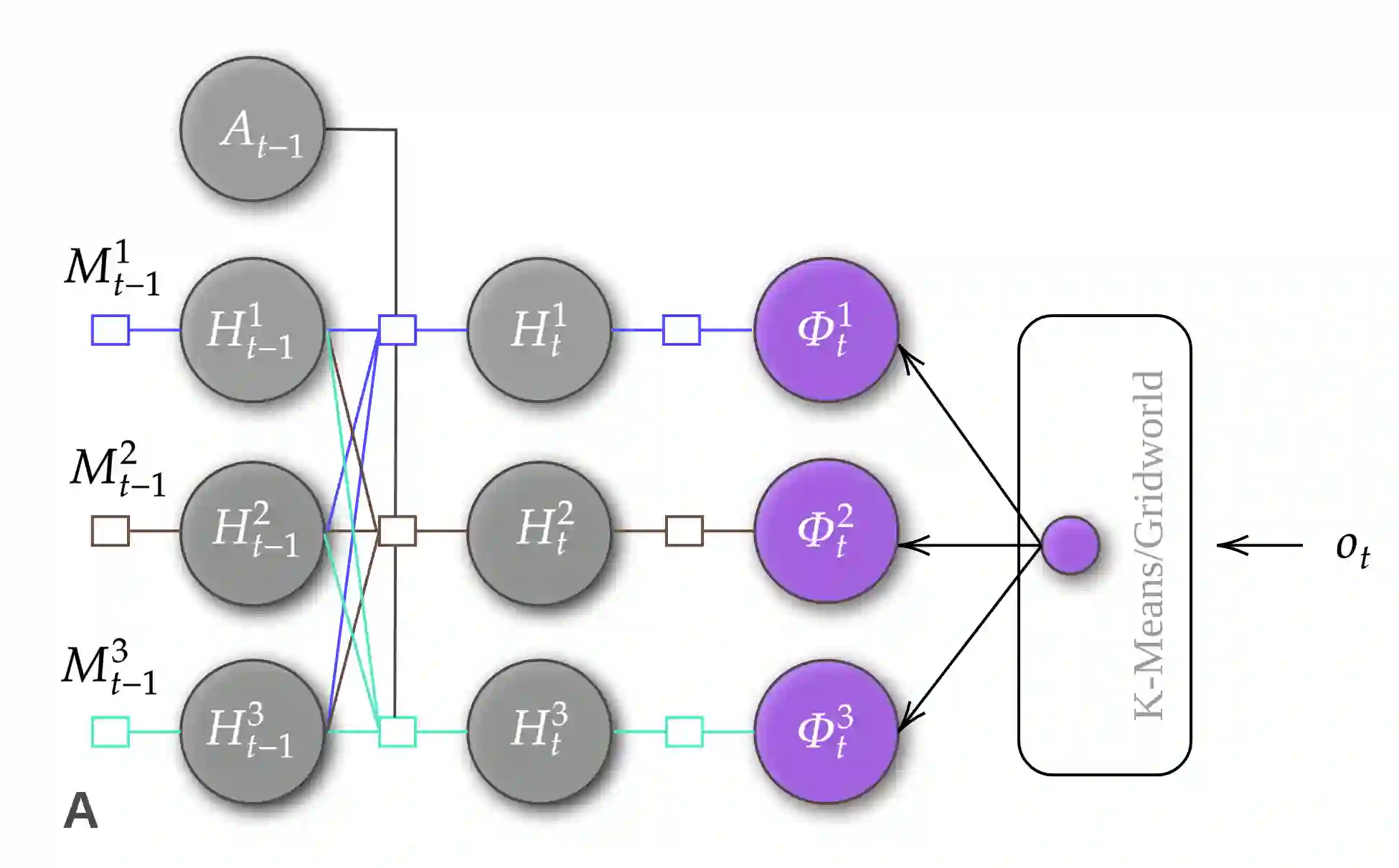

This paper presents a novel approach to online temporal memory learning for decision-making under uncertainty in non-stationary, partially observable environments. The proposed algorithm, Distributed Hebbian Temporal Memory (DHTM), is based on the factor graph formalism and a multicomponent neuron model. DHTM aims to capture relationships in sequential data and to make cumulative predictions about future observations, forming Successor Features (SF). Inspired by neurophysiological models of the neocortex, the algorithm uses distributed representations, sparse transition matrices, and local Hebbian-like learning rules to overcome the instability and slow learning of traditional temporal memory algorithms such as RNNs and HMMs. Experimental results show that DHTM outperforms LSTM and CSCG, a biologically inspired HMM-like algorithm, on non-stationary datasets. These findings suggest that DHTM is a promising approach to online sequence learning and planning in dynamic environments.
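To make the phrase "cumulative predictions about future observations" concrete, the following is a minimal sketch of the textbook tabular definition of successor features: the discounted sum of expected future feature vectors under a known transition matrix. It is not the DHTM algorithm, which the abstract describes as using a factor graph and distributed representations. The function name `successor_features`, the discount `gamma`, and the truncation `horizon` are assumptions introduced here for illustration.

```python
def successor_features(transition, features, gamma=0.9, horizon=50):
    """Truncated discounted sum of expected future features per start state.

    transition[i][j]: probability of moving from state i to state j (assumed known).
    features[j]: feature (observation) vector associated with state j.
    Returns sf, where sf[i] approximates sum_k gamma**k * E[features at step k | start i].
    """
    n_states = len(transition)
    n_feats = len(features[0])

    # belief[i][j]: probability of being in state j after k steps, starting from i.
    belief = [[1.0 if i == j else 0.0 for j in range(n_states)]
              for i in range(n_states)]
    sf = [[0.0] * n_feats for _ in range(n_states)]

    discount = 1.0
    for _ in range(horizon + 1):
        # Accumulate the discounted expected features at the current step.
        for i in range(n_states):
            for j in range(n_states):
                weight = discount * belief[i][j]
                if weight:
                    for f in range(n_feats):
                        sf[i][f] += weight * features[j][f]
        # Advance every belief by one step of the Markov chain.
        belief = [[sum(belief[i][k] * transition[k][j] for k in range(n_states))
                   for j in range(n_states)]
                  for i in range(n_states)]
        discount *= gamma
    return sf


if __name__ == "__main__":
    # Hypothetical two-state environment that deterministically alternates.
    T = [[0.0, 1.0], [1.0, 0.0]]
    phi = [[1.0, 0.0], [0.0, 1.0]]  # one-hot observation per state
    for row in successor_features(T, phi, gamma=0.5):
        print([round(x, 4) for x in row])
```

In this toy alternating chain with `gamma=0.5`, the successor features of state 0 approach 4/3 for its own observation and 2/3 for the other one, since the two geometric series sum to 1/(1 - 0.25) and 0.5/(1 - 0.25). An online learner would have to estimate the transition structure from data instead of receiving it as an argument.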
