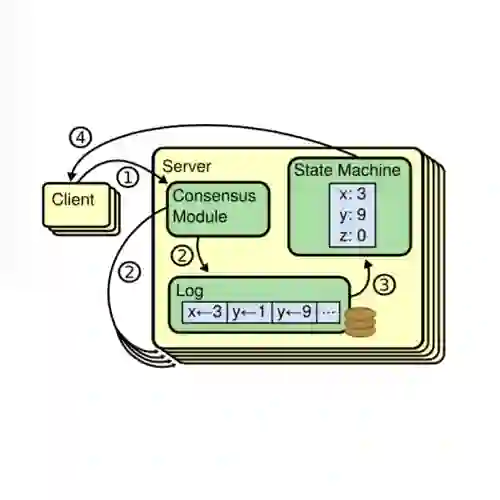

Distributed key-value stores are widely adopted to support elastic big data applications, and they typically rely on purpose-built consensus algorithms such as Raft to ensure data consistency. Through systematic analysis, however, we reveal a critical performance issue in such consistent stores: the consensus protocol and the underlying storage engine perform overlapping persistence operations, which results in significant I/O overhead. To address this issue, we present Nezha, a prototype distributed storage system that integrates key-value separation with Raft to provide scalable throughput under a strong consistency guarantee. Nezha redesigns the persistence strategy at the operation level and incorporates leveled garbage collection, significantly improving read and write performance while preserving Raft's safety properties. Experimental results demonstrate that, on average, Nezha achieves throughput improvements of 460.2%, 12.5%, and 72.6% for put, get, and scan operations, respectively.
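To make the overlapping-persistence issue concrete, the following toy model counts the bytes persisted per put under two strategies. It is only an illustrative sketch under stated assumptions, not Nezha's actual design: the class names (`AppendLog`, `BaselineStore`, `SeparatedStore`), the 8-byte log pointer, and the idea that the consensus log doubles as the value log are all hypothetical, and replication, crash recovery, and garbage collection are not modeled.

```python
import struct
from dataclasses import dataclass, field


@dataclass
class AppendLog:
    """In-memory stand-in for an append-only log that counts persisted bytes."""
    records: list = field(default_factory=list)
    bytes_written: int = 0

    def append(self, payload: bytes) -> int:
        self.records.append(payload)
        self.bytes_written += len(payload)
        return len(self.records) - 1  # index of the record just appended


class BaselineStore:
    """Assumed baseline: consensus log and engine each persist key and value."""

    def __init__(self) -> None:
        self.raft_log = AppendLog()
        self.engine_wal = AppendLog()
        self.table = {}

    def put(self, key: bytes, value: bytes) -> None:
        self.raft_log.append(key + value)    # persisted for consensus
        self.engine_wal.append(key + value)  # persisted again by the engine
        self.table[key] = value

    def get(self, key: bytes) -> bytes:
        return self.table[key]

    def bytes_persisted(self) -> int:
        return self.raft_log.bytes_written + self.engine_wal.bytes_written


class SeparatedStore:
    """Assumed key-value separation: the value is persisted once, in the
    consensus log; the engine persists only the key and a small pointer."""

    def __init__(self) -> None:
        self.raft_log = AppendLog()
        self.index_log = AppendLog()
        self.index = {}  # key -> (log index, key length)

    def put(self, key: bytes, value: bytes) -> None:
        idx = self.raft_log.append(key + value)              # value written once
        self.index_log.append(key + struct.pack(">Q", idx))  # key + 8-byte pointer
        self.index[key] = (idx, len(key))

    def get(self, key: bytes) -> bytes:
        idx, key_len = self.index[key]
        return self.raft_log.records[idx][key_len:]  # follow pointer into the log

    def bytes_persisted(self) -> int:
        return self.raft_log.bytes_written + self.index_log.bytes_written


def run_workload(store, n: int, value_size: int):
    """Issue n puts with fixed-size values and return the store."""
    for i in range(n):
        store.put(f"key{i:04d}".encode(), bytes([i % 256]) * value_size)
    return store


if __name__ == "__main__":
    for cls in (BaselineStore, SeparatedStore):
        store = run_workload(cls(), 100, 1024)
        print(cls.__name__, store.bytes_persisted())
```

In this model the write volume of the separated variant approaches half of the baseline as values grow relative to keys, while a get costs one extra pointer dereference into the log. Stale log entries left behind by overwrites would need to be reclaimed by some form of garbage collection, which this sketch leaves out.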
