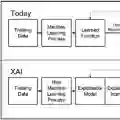

Security operations need machine learning that is both transparent and trustworthy, which motivates this integrated Explainable AI (XAI) framework. Our methodology addresses three challenges in deploying AI for threat detection. First, a Strategic Sampling Methodology handles massive datasets by preserving class distributions while enabling efficient model development. Second, Automated Data Leakage Prevention ensures experimental rigor by systematically identifying and removing contaminated features. Third, an Integrated XAI Implementation provides operational transparency, using SHAP analysis for model-agnostic interpretability across algorithms. Applied to the CIC-IDS2017 dataset, the approach maintains detection efficacy while reducing computational overhead and delivering actionable explanations for security analysts. The framework demonstrates that explainability, computational efficiency, and experimental integrity can be achieved simultaneously, providing a foundation for deploying trustworthy AI systems in security operations centers, where decision transparency is paramount.
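To make the first two components concrete, the following is a minimal sketch in plain Python, not the framework's actual implementation. It assumes records are dictionaries with a `Label` column, as in CIC-IDS2017. The function names `stratified_sample` and `leaky_features`, the toy feature `label_code`, and the leakage heuristic (flag any feature whose every value maps to exactly one class) are hypothetical choices made for illustration. The SHAP step is omitted because it depends on a trained model and the external `shap` package.

```python
import random
from collections import Counter, defaultdict


def stratified_sample(rows, label_key, fraction, seed=0):
    """Draw about `fraction` of the rows from each class, preserving class proportions."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for row in rows:
        by_class[row[label_key]].append(row)
    sample = []
    for members in by_class.values():
        # Keep at least one row per class so rare attack types are not dropped.
        k = min(len(members), max(1, round(len(members) * fraction)))
        sample.extend(rng.sample(members, k))
    return sample


def leaky_features(rows, label_key):
    """Crude leakage screen (an assumption, not the paper's method):
    flag features whose every distinct value co-occurs with exactly one label."""
    flagged = []
    for feat in (k for k in rows[0] if k != label_key):
        labels_seen = defaultdict(set)
        for row in rows:
            labels_seen[row[feat]].add(row[label_key])
        if all(len(labels) == 1 for labels in labels_seen.values()):
            flagged.append(feat)
    return flagged


# Toy data: 900 benign flows and 100 DDoS flows.
# `label_code` is a deliberately contaminated feature that encodes the label.
rows = [
    {
        "flow_duration": i % 7,
        "label_code": int(i % 10 == 0),
        "Label": "DDoS" if i % 10 == 0 else "BENIGN",
    }
    for i in range(1000)
]

sample = stratified_sample(rows, "Label", fraction=0.1)
print(Counter(r["Label"] for r in sample))  # 90 BENIGN and 10 DDoS rows
print(leaky_features(rows, "Label"))        # ['label_code']
```

The 10% sample keeps the original 9:1 class ratio, and the screen flags only the feature that duplicates the label. A real pipeline would need a more tolerant criterion, since this heuristic also flags unique identifiers and misses features that leak only partially.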
