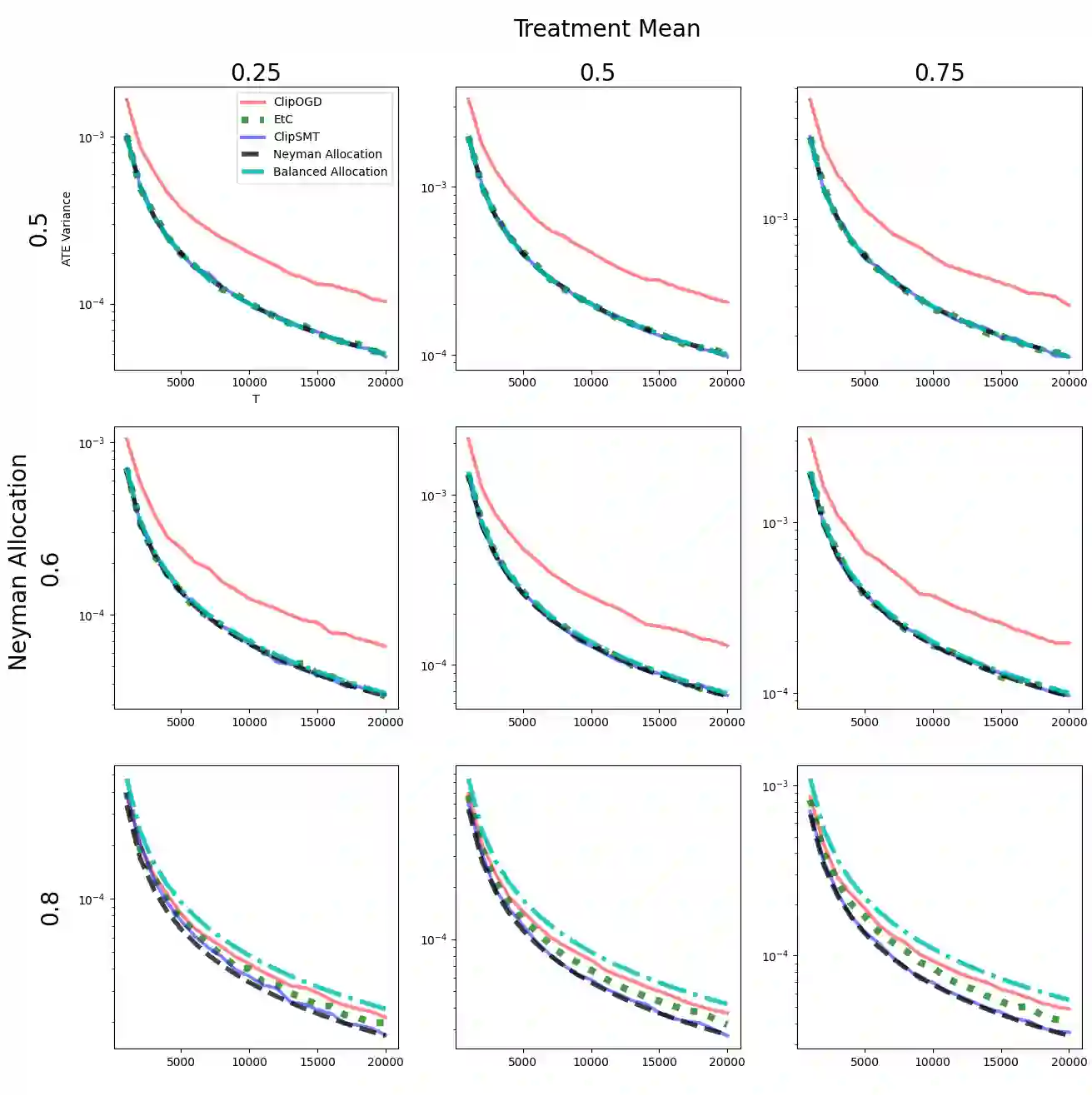

Estimation of the Average Treatment Effect (ATE) is a core problem in causal inference with strong connections to Off-Policy Evaluation in Reinforcement Learning. This paper considers the problem of adaptively selecting the treatment allocation probability in order to improve estimation of the ATE. The majority of prior work on adaptive ATE estimation focuses on asymptotic guarantees and, in turn, overlooks important practical considerations such as the difficulty of learning the optimal treatment allocation and the selection of hyper-parameters. Existing non-asymptotic methods are limited by poor empirical performance and by exponential scaling of the Neyman regret with respect to problem parameters. To address these gaps, we propose and analyze the Clipped Second Moment Tracking (ClipSMT) algorithm, a variant of an existing algorithm with strong asymptotic optimality guarantees, and provide finite sample bounds on its Neyman regret. Our analysis shows that ClipSMT achieves exponential improvements in Neyman regret on two fronts: it improves the dependence on $T$ from $O(\sqrt{T})$ to $O(\log T)$, and it reduces the exponential dependence on problem parameters to a polynomial dependence. Finally, we conclude with simulations that show the marked improvement of ClipSMT over existing approaches.
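To make the idea of adaptively tracking a treatment allocation concrete, the following is a minimal sketch, not the ClipSMT specification from the paper. It assumes an inverse-probability-weighted (IPW) ATE estimator, for which the variance-minimizing (Neyman) allocation is $\pi^* = \sqrt{E[Y(1)^2]} / (\sqrt{E[Y(1)^2]} + \sqrt{E[Y(0)^2]})$. The function names, the plug-in second-moment estimates, and the clipping schedule $0.5/\sqrt{t}$ are all hypothetical choices made for illustration.

```python
import math
import random

def neyman_allocation(m1, m0):
    """Variance-minimizing treatment probability for the IPW estimator,
    given second moments m1 = E[Y(1)^2] and m0 = E[Y(0)^2]."""
    s1, s0 = math.sqrt(m1), math.sqrt(m0)
    return s1 / (s1 + s0)

def clipped_tracking(sample_y1, sample_y0, T, rng,
                     clip=lambda t: 0.5 / math.sqrt(t)):
    """Illustrative clipped tracking loop (assumed design, not the paper's).

    sample_y1 / sample_y0: callables drawing one outcome under treatment / control.
    clip: hypothetical schedule; the allocation is kept inside [clip(t), 1 - clip(t)].
    Returns the IPW estimate of the ATE and the list of allocation probabilities.
    """
    m1_sum = 0.0   # IPW-weighted running sum of Y^2 under treatment
    m0_sum = 0.0   # IPW-weighted running sum of Y^2 under control
    ipw_sum = 0.0  # running sum of IPW terms for the ATE estimate
    probs = []
    for t in range(1, T + 1):
        if m1_sum + m0_sum == 0.0:
            p = 0.5  # no information yet: allocate uniformly
        else:
            # The common 1/(t-1) normalization cancels in the ratio.
            p = neyman_allocation(m1_sum, m0_sum)
        c = min(clip(t), 0.5)
        p = min(max(p, c), 1.0 - c)  # clip away from 0 and 1
        probs.append(p)
        if rng.random() < p:
            y = sample_y1(rng)
            ipw_sum += y / p
            m1_sum += y * y / p
        else:
            y = sample_y0(rng)
            ipw_sum -= y / (1.0 - p)
            m0_sum += y * y / (1.0 - p)
    return ipw_sum / T, probs

if __name__ == "__main__":
    rng = random.Random(0)
    est, probs = clipped_tracking(
        lambda r: r.gauss(1.0, 2.0),   # treated outcomes, E[Y(1)^2] = 5
        lambda r: r.gauss(0.0, 0.5),   # control outcomes, E[Y(0)^2] = 0.25
        5000, rng)
    print("ATE estimate:", est)
    print("final allocation:", probs[-1],
          "target:", neyman_allocation(5.0, 0.25))
```

Clipping keeps every allocation probability bounded away from 0 and 1, so the IPW weights stay finite while the second-moment estimates are still noisy. How fast the clipping bounds should relax is exactly the kind of design choice the finite-sample analysis addresses, and the schedule used here should not be read as the paper's.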
