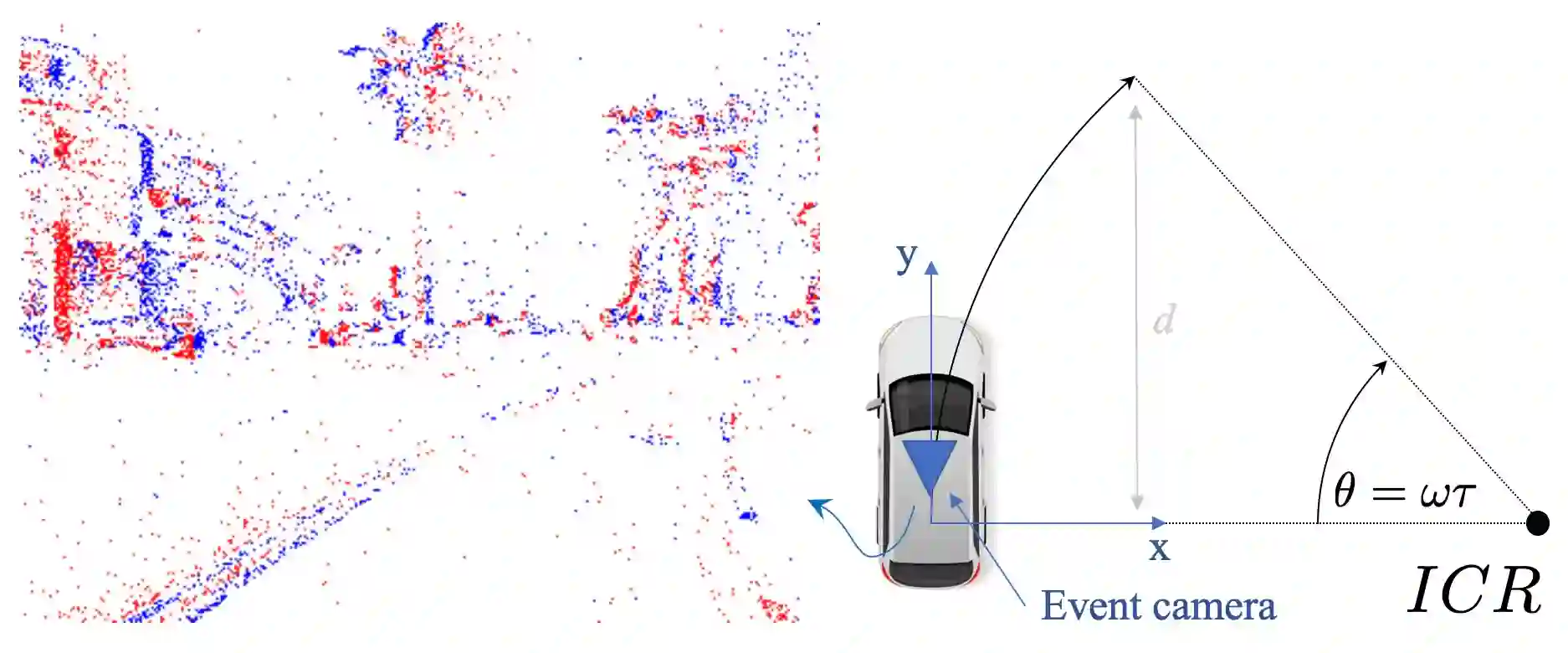

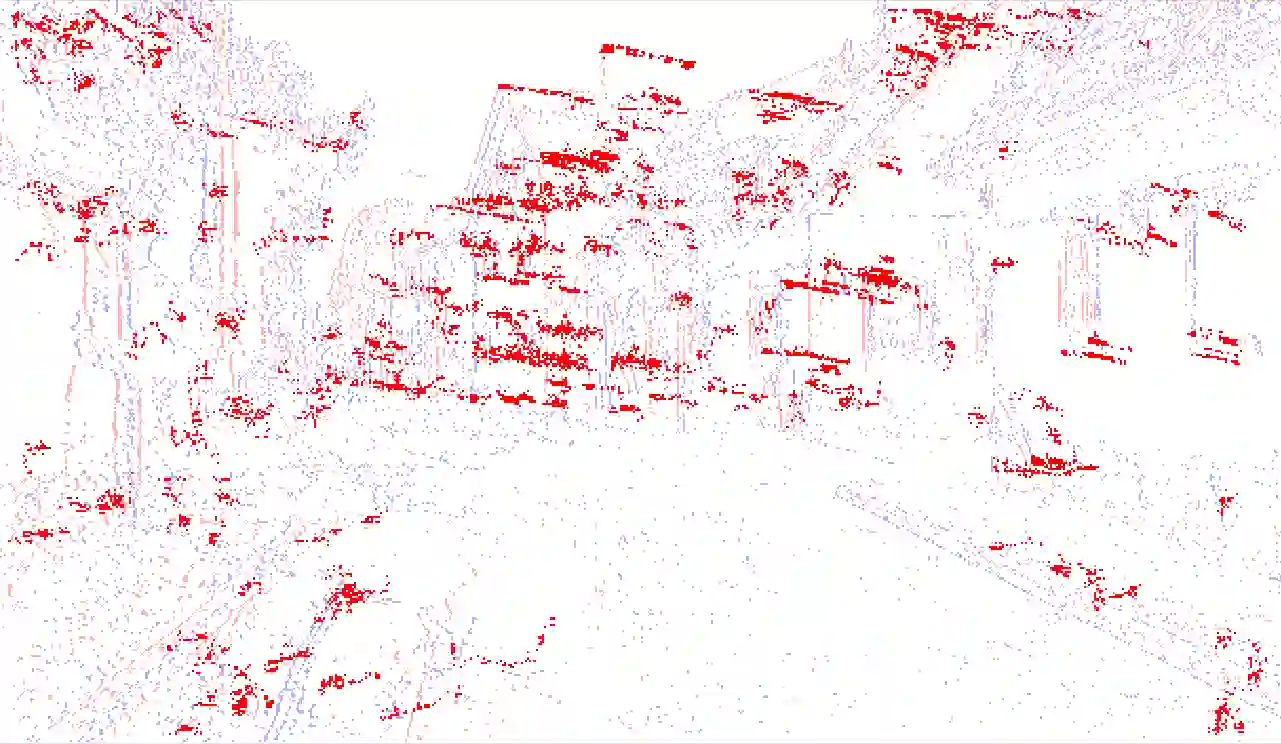

Despite the promise of superior performance under challenging conditions, event-based motion estimation remains a hard problem because stable features are difficult to extract and track in event streams. Fusion with other sensors is generally believed to be necessary for robust estimation. In this work, we demonstrate reliable, purely event-based visual odometry on planar ground vehicles by employing the constrained non-holonomic motion model of Ackermann steering platforms. We extend single-feature n-linearities for regular frame-based cameras to the case of quasi time-continuous event tracks, and achieve a polynomial form via variable-degree Taylor expansions. Robust averaging over multiple event tracks is achieved simply via histogram voting. As demonstrated on both simulated and real data, our algorithm achieves accurate and robust estimates of the vehicle's instantaneous rotational velocity, with results comparable to the delta rotations obtained by frame-based sensors under normal conditions. Furthermore, we significantly outperform the more traditional alternatives in challenging illumination scenarios. The code is available at https://github.com/gowanting/NHEVO.
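To illustrate the histogram-voting step mentioned above, here is a minimal Python sketch. It is not taken from the NHEVO code base. It assumes each event track has already produced one scalar estimate of the rotational velocity, and the function name `histogram_vote`, the `bin_width` parameter, and the sample values are all hypothetical. The idea is that inlier estimates cluster in one bin while outliers scatter, so the most populated bin gives a robust consensus.

```python
import math
from collections import Counter


def histogram_vote(estimates, bin_width=0.01):
    """Robustly fuse scalar estimates by voting over fixed-width bins.

    Assumption (not from the paper): each entry of `estimates` is one
    per-track rotational-velocity estimate in rad/s.
    Returns the mean of the estimates that fall in the most populated bin.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if bin_width <= 0:
        raise ValueError("bin_width must be positive")

    # Assign each estimate to an integer bin index.
    indices = [math.floor(w / bin_width) for w in estimates]

    # The winning bin is the one with the most votes
    # (ties resolve to the bin that was encountered first).
    winner, _ = Counter(indices).most_common(1)[0]

    # Refine the result by averaging the members of the winning bin.
    inliers = [w for w, i in zip(estimates, indices) if i == winner]
    return sum(inliers) / len(inliers)


# Hypothetical per-track estimates: four consistent values and three outliers.
tracks = [0.101, 0.102, 0.103, 0.104, 0.9, -0.7, 0.5]
omega = histogram_vote(tracks, bin_width=0.01)
```

A plain mean of the same values would be pulled far from the consensus by the three outliers, which is why a voting scheme suits noisy per-track estimates. The bin width trades resolution against the number of votes per bin and would need tuning for real data.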
