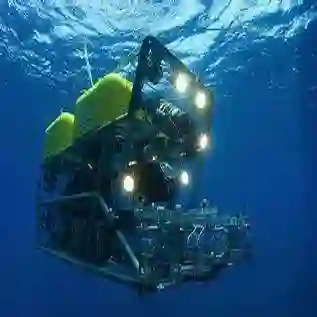

Autonomous Underwater Robots (AURs) operate in challenging underwater environments characterized by low visibility and harsh water conditions. These conditions complicate the work of software engineers who develop perception modules for AUR software. Deep learning has been incorporated into AUR software to support perception tasks, but deep learning models typically rely on labeled data, which is scarce and noisy in underwater settings. This can undermine the trustworthiness of AUR software that depends on perception modules. Vision-Language Models (VLMs) offer a promising alternative because they can generalize to unseen objects and remain robust in noisy conditions by inferring information from contextual cues. Despite this potential, their performance and uncertainty in underwater environments remain understudied from a software engineering perspective. Motivated by the needs of an industrial partner in assurance and risk management for maritime systems, which seeks to assess the potential use of VLMs in this context, we present an empirical evaluation of VLM-based perception modules within AUR software. We assess their ability to detect underwater trash by computing their performance, their uncertainty, and the relationship between the two, so that software engineers can select appropriate VLMs for their AUR software.
