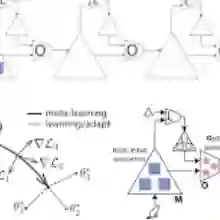

In this paper, we address the problem of class-generalizable anomaly detection. The goal is to learn a unified model from the available normal data and a small amount of anomaly data that can detect completely unseen anomaly classes, also referred to as out-of-distribution (OOD) classes. The task is further complicated by the fact that anomaly data are rare and costly to label. To address this, we propose a multidirectional meta-learning algorithm. At the inner level, the model learns the manifold of the normal data (representation learning). At the outer level, the model is meta-tuned with a few anomaly samples to maximize the softmax confidence margin between normal and anomaly samples (decision-surface calibration), treating normal samples as in-distribution (ID) and anomalies as OOD. By repeating this process over multiple episodes, each consisting predominantly of normal samples plus a small number of anomalies, we obtain a multidirectional meta-learning framework. This bi-level optimization, enhanced by multidirectional training, enables stronger generalization to unseen anomaly classes.
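The episodic, bi-level structure described above can be made concrete with a small sketch. It covers only two pieces: building an episode that is mostly normal with a few anomalies, and an outer-level objective that maximizes a softmax confidence margin. Several details are assumptions not stated in the abstract: "softmax confidence" is taken to be the maximum softmax probability (MSP), the episode's normals are split 50/50 between the inner and outer levels, and all function names (`sample_episode`, `confidence_margin`, `outer_loss`) are hypothetical. The model itself, its inner-level representation training, and the gradient updates are omitted.

```python
import math
import random
from typing import List, Sequence, Tuple

Logits = Sequence[float]


def softmax(logits: Logits) -> List[float]:
    """Numerically stable softmax over one sample's logits."""
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def msp(logits: Logits) -> float:
    """Maximum softmax probability; assumed here as the ID-confidence score."""
    return max(softmax(logits))


def confidence_margin(normal_logits: Sequence[Logits],
                      anomaly_logits: Sequence[Logits]) -> float:
    """Mean confidence on normals (ID) minus mean confidence on anomalies (OOD)."""
    id_conf = sum(msp(z) for z in normal_logits) / len(normal_logits)
    ood_conf = sum(msp(z) for z in anomaly_logits) / len(anomaly_logits)
    return id_conf - ood_conf


def outer_loss(normal_logits: Sequence[Logits],
               anomaly_logits: Sequence[Logits]) -> float:
    """Outer-level objective: minimizing this loss maximizes the confidence margin."""
    return -confidence_margin(normal_logits, anomaly_logits)


def sample_episode(normals: list, anomalies: list, n_normal: int,
                   n_anomaly: int, rng: random.Random) -> Tuple[list, list, list]:
    """Build one episode that is predominantly normal with a few anomalies.

    Returns (inner_normals, outer_normals, outer_anomalies). The inner split
    holds only normal data (representation learning); the outer split pairs
    held-out normals with the few anomalies (decision-surface calibration).
    The 50/50 split of normals is an assumption of this sketch.
    """
    picked = rng.sample(normals, n_normal)  # distinct normals, no repeats
    half = n_normal // 2
    return picked[:half], picked[half:], rng.sample(anomalies, n_anomaly)


if __name__ == "__main__":
    rng = random.Random(0)
    normals = [f"normal_{i}" for i in range(100)]
    anomalies = [f"anomaly_{i}" for i in range(5)]
    inner, outer_n, outer_a = sample_episode(normals, anomalies, 16, 2, rng)
    print(len(inner), len(outer_n), len(outer_a))  # 8 8 2

    # Hypothetical logits: confident on a normal sample, flat on an anomaly.
    print(round(confidence_margin([[4.0, 0.0, 0.0]], [[0.0, 0.0, 0.0]]), 4))  # 0.6313
```

In a full implementation, the inner level would update the model on `inner` alone, and the outer level would backpropagate `outer_loss` computed on the model's logits for `outer_n` and `outer_a`; repeating this over many sampled episodes gives the iterative procedure the abstract describes.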
