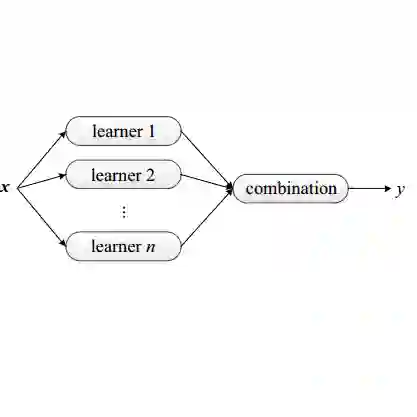

Unsupervised ensemble learning emerged to address the challenge of combining multiple learners' predictions without access to ground-truth labels or additional data. This paradigm is crucial in scenarios where limited information makes it hard to evaluate individual classifiers or to understand their strengths. We propose a novel deep energy-based method that constructs an accurate meta-learner using only the predictions of the individual learners and can potentially capture complex dependence structures among them. Our approach requires no labeled data, learner features, or problem-specific information, and comes with theoretical guarantees when the learners are conditionally independent. We demonstrate superior performance across diverse ensemble scenarios, including challenging mixture-of-experts settings. Our experiments span standard ensemble datasets and curated datasets designed to test how the model fuses expertise from multiple sources. These results highlight the potential of unsupervised ensemble learning to harness collective intelligence, especially in data-scarce or privacy-sensitive environments.
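
To make the setting concrete, here is a minimal sketch of the problem, not of the proposed energy-based method. It simulates binary learners whose errors are conditionally independent given the true label, then combines them with a plain majority vote, a standard unsupervised baseline that is swapped in here purely for illustration. The function names, the number of learners, and the accuracy values are all hypothetical; the point is only that the combiner sees the prediction matrix and never the labels.

```python
import numpy as np


def simulate_predictions(n_samples, accuracies, rng):
    """Simulate conditionally independent binary learners (hypothetical setup).

    Returns the hidden labels y in {-1, +1} and a prediction matrix of shape
    (n_learners, n_samples). Learner i is correct with probability
    accuracies[i], independently of the other learners given y.
    """
    y = rng.choice([-1, 1], size=n_samples)
    acc = np.asarray(accuracies)[:, None]
    correct = rng.random((len(accuracies), n_samples)) < acc
    preds = np.where(correct, y, -y)
    return y, preds


def majority_vote(preds):
    """Unsupervised baseline: combine predictions without using any labels."""
    votes = preds.sum(axis=0)
    return np.where(votes >= 0, 1, -1)


rng = np.random.default_rng(0)
accuracies = np.linspace(0.60, 0.75, 15)  # assumed, illustrative values
y_true, preds = simulate_predictions(5000, accuracies, rng)

# The meta-learner only ever sees `preds`.
y_meta = majority_vote(preds)

# Labels are used here for evaluation only, never for fitting.
individual_acc = (preds == y_true).mean(axis=1)
meta_acc = (y_meta == y_true).mean()
print(f"best individual accuracy: {individual_acc.max():.3f}")
print(f"majority-vote accuracy:   {meta_acc:.3f}")
```

Majority voting weights every learner equally and ignores any dependence between them, which is the gap that a learned meta-learner of the kind described above is meant to close.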
