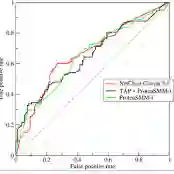

Semi-supervised learning (SSL) addresses the critical challenge of training accurate models when labeled data is scarce but unlabeled data is abundant. Graph-based SSL (GSSL) has emerged as a popular framework that captures data structure through graph representations. Classic graph SSL methods, such as Label Propagation and Label Spreading, aim to compute low-dimensional representations in which points with the same label are close. Although often effective, these methods can be suboptimal on data with complex label distributions. In this work, we develop AUC-spec, a graph-based approach that computes a low-dimensional representation maximizing class separation. We compute this representation by optimizing the Area Under the ROC Curve (AUC) as estimated on the labeled points. We provide a detailed analysis of our approach under a product-of-manifolds model and show that the number of labeled points AUC-spec requires is polynomial in the model parameters. Empirically, we show that AUC-spec balances class separation with graph smoothness. It achieves competitive results on synthetic and real-world datasets while maintaining computational efficiency comparable to that of classic and state-of-the-art methods in the field.
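
The abstract does not specify the graph construction, the AUC surrogate, or the optimizer, so the following is only a minimal sketch of the general idea under our own assumptions, not the authors' implementation. We assume a dense Gaussian-kernel graph, a one-dimensional score formed as a unit-norm combination of the first `k` eigenvectors of the symmetric normalized Laplacian, a sigmoid surrogate of the empirical AUC over labeled (positive, negative) pairs, and an eigenvalue-weighted penalty standing in for graph smoothness. The function names and parameters (`spectral_embedding`, `auc_spec_sketch`, `gamma`, `tau`, `lr`) are hypothetical.

```python
import numpy as np


def empirical_auc(scores, pos_idx, neg_idx):
    """Empirical AUC on labeled points: the fraction of (positive, negative)
    pairs whose scores are correctly ordered, counting ties as 1/2."""
    diff = scores[pos_idx][:, None] - scores[neg_idx][None, :]
    return float(np.mean((diff > 0) + 0.5 * (diff == 0)))


def spectral_embedding(X, k, sigma=1.0):
    """Return the k smallest eigenvalues and eigenvectors of the symmetric
    normalized Laplacian of a dense Gaussian-kernel graph on the rows of X
    (an assumed graph construction, chosen for simplicity)."""
    sq_dist = np.sum((X[:, None, :] - X[None, :, :]) ** 2, axis=-1)
    W = np.exp(-sq_dist / (2.0 * sigma**2))
    np.fill_diagonal(W, 0.0)  # no self-loops
    d_inv_sqrt = 1.0 / np.sqrt(W.sum(axis=1))
    L = np.eye(len(X)) - d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]
    lam, U = np.linalg.eigh(L)  # eigenvalues come back in ascending order
    return lam[:k], U[:, :k]


def auc_spec_sketch(U, lam, pos_idx, neg_idx, gamma=0.1, tau=0.05,
                    lr=0.5, iters=500, seed=0):
    """Hypothetical AUC-maximizing 1-D score f = U @ a with ||a|| = 1.

    Maximizes  mean_{i in P, j in N} sigmoid((f_i - f_j) / tau)
               - gamma * a^T diag(lam) a
    by projected gradient ascent; the second term penalizes weight on
    high-eigenvalue (less smooth) eigenvectors.
    """
    rng = np.random.default_rng(seed)
    a = rng.standard_normal(U.shape[1])
    a /= np.linalg.norm(a)
    # Embedding differences for every labeled (positive, negative) pair: (|P|, |N|, k)
    D = U[pos_idx][:, None, :] - U[neg_idx][None, :, :]
    for _ in range(iters):
        margins = (D @ a) / tau
        s = 1.0 / (1.0 + np.exp(-margins))
        # Gradient of the mean sigmoid surrogate with respect to a
        grad_auc = np.einsum("pn,pnk->k", s * (1.0 - s), D) / (tau * margins.size)
        # Gradient of the smoothness penalty a^T diag(lam) a
        grad_smooth = 2.0 * lam * a
        a = a + lr * (grad_auc - gamma * grad_smooth)
        a /= np.linalg.norm(a)  # project back onto the unit sphere
    return U @ a


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Two Gaussian blobs; only three points per class are labeled.
    X = np.vstack([rng.normal(-2, 0.5, (40, 2)), rng.normal(2, 0.5, (40, 2))])
    y = np.r_[np.zeros(40), np.ones(40)]
    lam, U = spectral_embedding(X, k=4)
    pos_idx, neg_idx = np.array([40, 41, 42]), np.array([0, 1, 2])
    f = auc_spec_sketch(U, lam, pos_idx, neg_idx)
    print("labeled AUC:", empirical_auc(f, pos_idx, neg_idx))
    print("AUC on all points:",
          empirical_auc(f, np.flatnonzero(y == 1), np.flatnonzero(y == 0)))
```

In this sketch, `gamma` plays the role of the separation/smoothness trade-off: raising it pushes weight toward low-eigenvalue (smoother) eigenvectors, while lowering it lets the labeled-pair AUC term dominate.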
