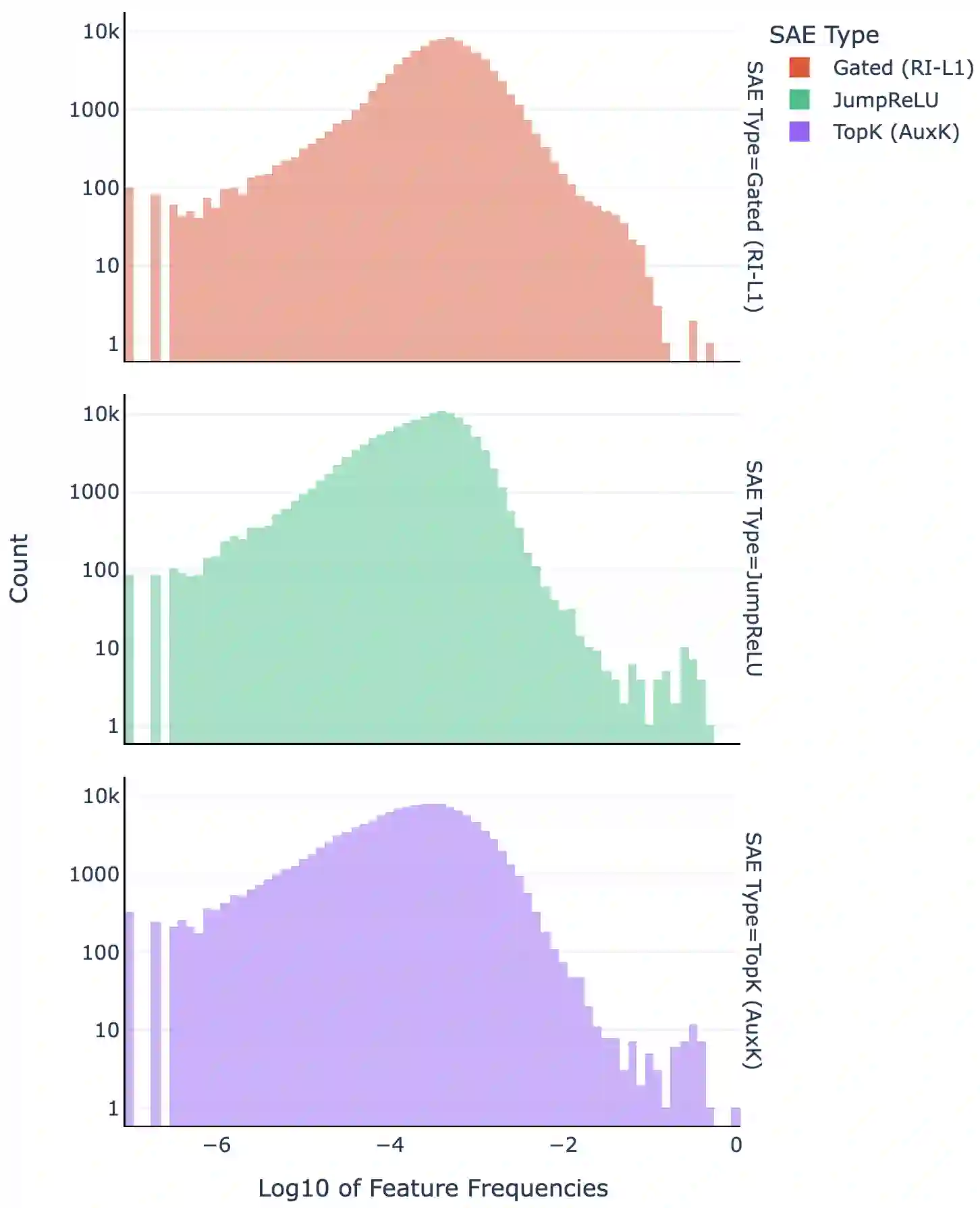

Sparse autoencoders (SAEs) are a promising unsupervised approach for identifying causally relevant and interpretable linear features in a language model's (LM) activations. To be useful for downstream tasks, SAEs need to decompose LM activations faithfully; yet to be interpretable, the decomposition must be sparse. These two objectives are in tension. In this paper, we introduce JumpReLU SAEs, which achieve state-of-the-art reconstruction fidelity at a given sparsity level on Gemma 2 9B activations, compared to other recent advances such as Gated and TopK SAEs. Through manual and automated interpretability studies, we also show that this improvement does not come at the cost of interpretability. JumpReLU SAEs are a simple modification of vanilla (ReLU) SAEs, in which the ReLU is replaced with a discontinuous JumpReLU activation function, and are similarly efficient to train and run. By using straight-through estimators (STEs) in a principled manner, we show that JumpReLU SAEs can be trained effectively despite the discontinuity that the JumpReLU function introduces in the SAE's forward pass. Similarly, we use STEs to train L0 directly to be sparse, instead of training on proxies such as L1, which avoids problems like shrinkage.
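To make the activation change concrete, here is a minimal sketch of a JumpReLU forward pass next to a plain ReLU. It assumes the usual form, in which a pre-activation passes through unchanged if it exceeds a per-feature threshold and is set to zero otherwise. The function names, the threshold name `theta`, and the example values are illustrative assumptions, not taken from the text above. The sketch covers only the forward pass and the L0 count; it does not implement the straight-through estimators used for training.

```python
def relu(z):
    """Standard ReLU: clamp negative pre-activations to zero."""
    return [max(x, 0.0) for x in z]


def jump_relu(z, theta):
    """Assumed JumpReLU form: keep x unchanged where x > threshold, else 0.

    `theta` holds one threshold per feature (hypothetical name and values).
    """
    return [x if x > t else 0.0 for x, t in zip(z, theta)]


def l0(features):
    """L0 'norm': the number of non-zero (active) features."""
    return sum(1 for f in features if f != 0.0)


# Illustrative pre-activations for four latent features.
pre_acts = [-0.5, 0.05, 0.3, 1.2]
theta = [0.1, 0.1, 0.1, 0.1]

relu_out = relu(pre_acts)              # small positive values survive
jump_out = jump_relu(pre_acts, theta)  # values at or below the threshold are dropped

print("ReLU active features:", l0(relu_out))
print("JumpReLU active features:", l0(jump_out))
```

In this sketch, features that fire are not shrunk: 0.3 and 1.2 come out exactly as they went in, while the weak activation 0.05 is removed. The output is therefore discontinuous at the threshold, which is why ordinary backpropagation gives no useful gradient for `theta`.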
