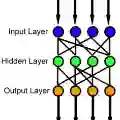

Deep learning has found significant applications in data science and the natural sciences. Some studies have linked deep neural networks to dynamical systems, but the network structure considered is restricted to residual networks. It is known that residual networks can be regarded as a numerical discretization of dynamical systems. In this paper, we return to the classical network structure and prove that vanilla feedforward networks can also be a numerical discretization of dynamical systems, where the width of the network equals the dimension of the input and output. Our proof is based on the properties of the leaky-ReLU function and on splitting methods, a numerical technique for solving differential equations. Our results could provide a new perspective for understanding the approximation properties of feedforward neural networks.
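As a rough illustration of the known correspondence mentioned above, a residual block of the form x + h·f(x) is one forward Euler step for dx/dt = f(x). The sketch below is not the paper's feedforward construction; the function names and the vector field `decay` are hypothetical, chosen only because its exact flow is known in closed form.

```python
import math

def residual_block(x, f, h):
    """One residual layer x + h * f(x): a forward Euler step of dx/dt = f(x)."""
    fx = f(x)
    return [xi + h * fi for xi, fi in zip(x, fx)]

def residual_network(x0, f, total_time, depth):
    """Stack `depth` residual blocks with step size h = total_time / depth."""
    h = total_time / depth
    x = list(x0)
    for _ in range(depth):
        x = residual_block(x, f, h)
    return x

def decay(x):
    # Hypothetical vector field f(x) = -x; its exact flow is x(t) = exp(-t) * x(0).
    return [-xi for xi in x]

x0 = [1.0, -2.0]
exact = [math.exp(-1.0) * xi for xi in x0]
for depth in (10, 100, 1000):
    approx = residual_network(x0, decay, total_time=1.0, depth=depth)
    err = max(abs(a - e) for a, e in zip(approx, exact))
    # The error shrinks as the network gets deeper (smaller step size).
    print(depth, err)
```

Note that the width of this toy network equals the dimension of the input and output, which is the same width setting the abstract considers for feedforward networks.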
