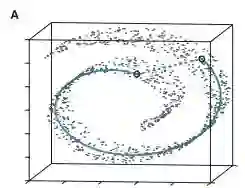

Building general-purpose embodied agents across diverse hardware remains a central challenge in robotics, often framed as the "one-brain, many-forms" paradigm. Progress is hindered by fragmented data, inconsistent representations, and misaligned training objectives. We present ABot-M0, a framework that builds a systematic data curation pipeline while jointly optimizing model architecture and training strategies, enabling end-to-end transformation of heterogeneous raw data into unified, efficient representations. From six public datasets, we clean, standardize, and balance samples to construct UniACT-dataset, a large-scale dataset with over 6 million trajectories and 9,500 hours of data, covering diverse robot morphologies and task scenarios. Unified pre-training improves knowledge transfer and generalization across platforms and tasks, supporting general-purpose embodied intelligence. To improve the efficiency and stability of action prediction, we propose the Action Manifold Hypothesis: effective robot actions lie not in the full high-dimensional action space but on a low-dimensional, smooth manifold governed by physical laws and task constraints. Based on this hypothesis, we introduce Action Manifold Learning (AML), which uses a DiT backbone to predict clean, continuous action sequences directly. This shifts learning from denoising to projection onto feasible manifolds, improving decoding speed and policy stability. ABot-M0 supports modular perception via a dual-stream mechanism that integrates VLM semantics with geometric priors and multi-view inputs from plug-and-play 3D modules such as VGGT and Qwen-Image-Edit. This enhances spatial understanding without modifying the backbone and mitigates the limitations of standard VLMs in 3D reasoning. Experiments show that the components operate independently and that their benefits are additive. We will release all code and pipelines for reproducibility and future research.
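The shift from denoising to direct action prediction can be illustrated with a minimal sketch. It is not the ABot-M0 implementation: the linear noise-to-action interpolation, the function names (`make_noisy_actions`, `noise_prediction_loss`, `action_prediction_loss`), the tensor shapes, and the `oracle` stand-in for a DiT-style network are all assumptions made for illustration. The only point it shows is that the two objectives differ in their regression target: the sampled noise versus the clean action chunk itself.

```python
import numpy as np


def make_noisy_actions(actions, noise, t):
    """Interpolate between pure noise (t=0) and clean actions (t=1).

    The linear schedule is an assumption of this sketch, not taken from the paper.
    """
    t = np.asarray(t, dtype=actions.dtype).reshape(-1, 1, 1)
    return t * actions + (1.0 - t) * noise


def noise_prediction_loss(predict, actions, noise, t):
    """Denoising-style objective: the network regresses the injected noise."""
    noisy = make_noisy_actions(actions, noise, t)
    return float(np.mean((predict(noisy, t) - noise) ** 2))


def action_prediction_loss(predict, actions, noise, t):
    """Direct objective: the network regresses the clean action sequence."""
    noisy = make_noisy_actions(actions, noise, t)
    return float(np.mean((predict(noisy, t) - actions) ** 2))


# Hypothetical shapes: 4 action chunks, 16 steps each, 7 action dimensions.
rng = np.random.default_rng(0)
batch, horizon, action_dim = 4, 16, 7
actions = rng.uniform(-1.0, 1.0, size=(batch, horizon, action_dim))
noise = rng.standard_normal(actions.shape)
t = rng.uniform(0.0, 1.0, size=batch)


def oracle(noisy, t):
    """Stand-in for a trained network that outputs the clean actions exactly."""
    return actions


print("action-prediction loss:", action_prediction_loss(oracle, actions, noise, t))
print("noise-prediction loss: ", noise_prediction_loss(oracle, actions, noise, t))
```

Under the direct objective, the network output is already an action sequence. In the abstract's terms, this is a projection of the noisy input onto the set of feasible actions, rather than an estimate of the noise that must still be converted into actions.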
