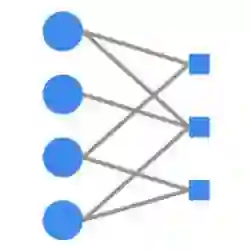

Deep neural networks (DNNs) lack the precise semantics and definitive probabilistic interpretation of probabilistic graphical models (PGMs). In this paper, we address this gap by constructing infinite tree-structured PGMs that correspond exactly to neural networks. We show that, during forward propagation, DNNs perform approximations of PGM inference that are precise in this alternative PGM structure. This result complements existing studies that describe neural networks as kernel machines or infinite-sized Gaussian processes, and it also elucidates a more direct approximation that DNNs make to exact inference in PGMs. Potential benefits include improved pedagogy and interpretation of DNNs, and algorithms that can merge the strengths of PGMs and DNNs.
