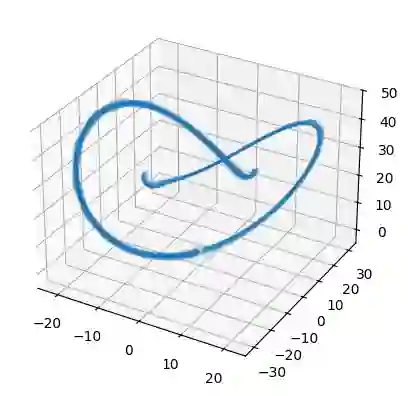

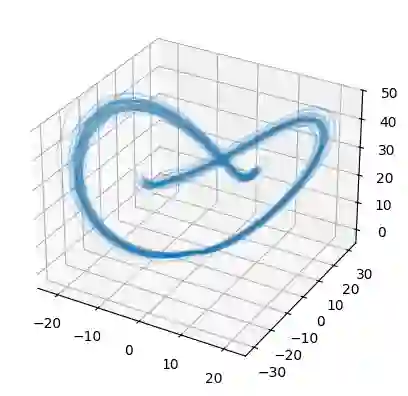

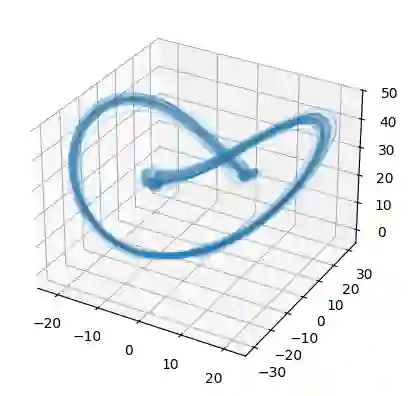

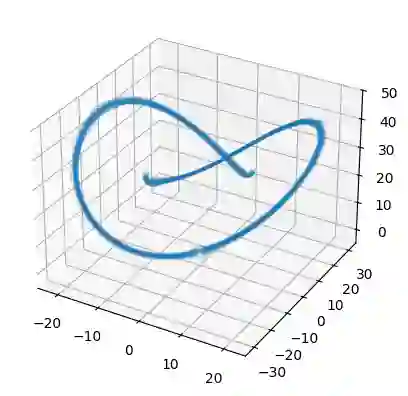

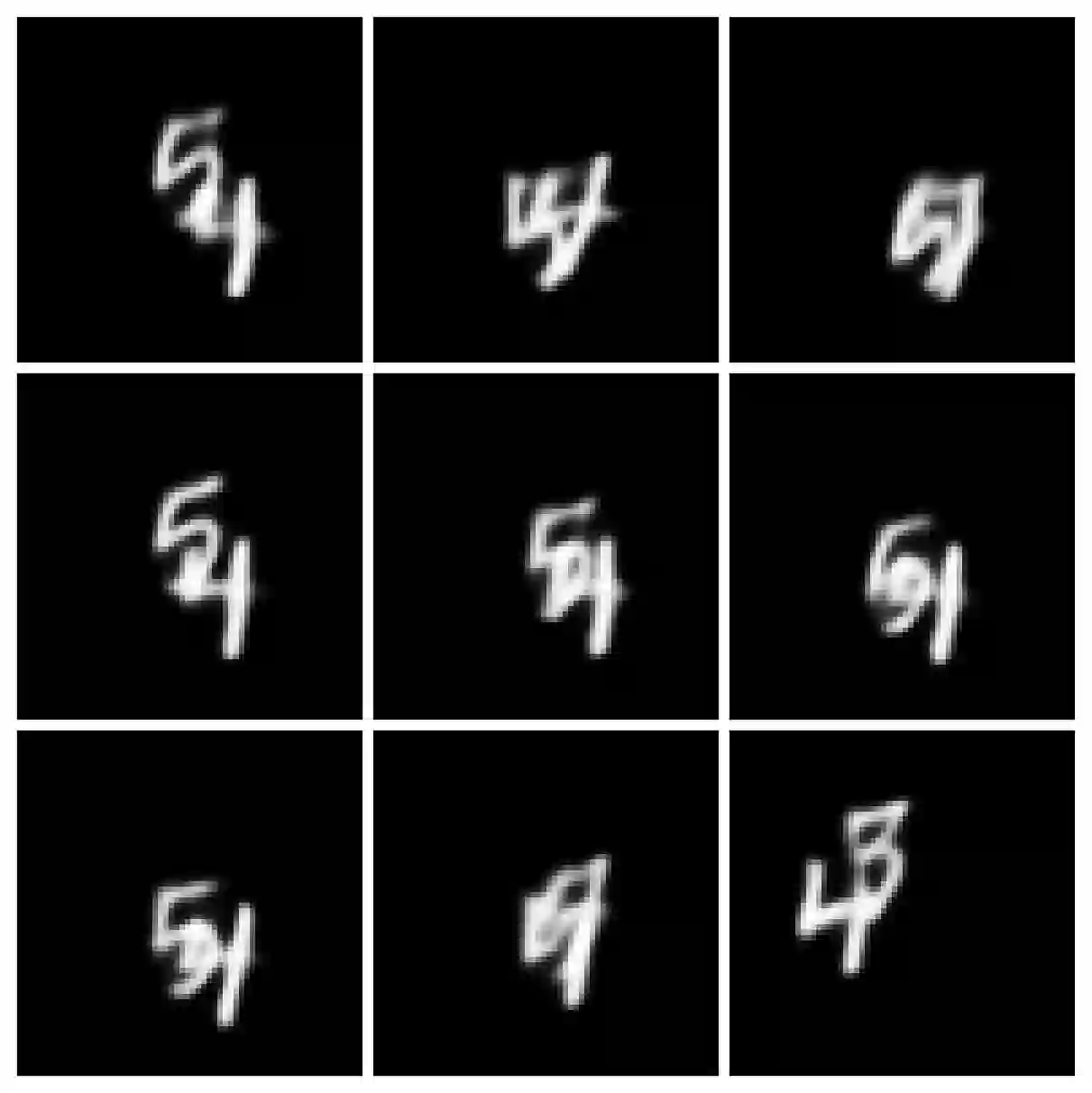

Stochastic differential equations (SDEs) are well suited to modelling noisy and irregularly sampled time series found in finance, physics, and machine learning. Traditional approaches require costly numerical solvers to sample between arbitrary time points. We introduce Neural Stochastic Flows (NSFs) and their latent variants, which directly learn (latent) SDE transition laws using conditional normalising flows with architectural constraints that preserve properties inherited from stochastic flows. This enables one-shot sampling between arbitrary states and yields up to two orders of magnitude speed-ups at large time gaps. Experiments on synthetic SDE simulations and on real-world tracking and video data show that NSFs maintain distributional accuracy comparable to numerical approaches while dramatically reducing computation for arbitrary time-point sampling.
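To make the contrast between solver-based and one-shot sampling concrete, the sketch below uses an Ornstein-Uhlenbeck process, $dX_t = \theta(\mu - X_t)\,dt + \sigma\,dW_t$, whose Gaussian transition law is known in closed form. This is only an illustration of the idea, not the NSF method itself: an NSF would learn the transition law with a conditional normalising flow, whereas here the closed-form law stands in for the learned one. The function names and parameter values are assumptions chosen for the example.

```python
import math
import random


def euler_maruyama_ou(x0, gap, theta, mu, sigma, n_steps, rng):
    """Sample X_{t+gap} given X_t = x0 by stepping an Euler-Maruyama solver.

    Cost grows linearly with n_steps, which must increase with the time gap
    to keep the discretisation error under control.
    """
    h = gap / n_steps
    x = x0
    for _ in range(n_steps):
        x += theta * (mu - x) * h + sigma * math.sqrt(h) * rng.gauss(0.0, 1.0)
    return x


def one_shot_ou(x0, gap, theta, mu, sigma, rng):
    """Sample X_{t+gap} given X_t = x0 directly from the transition law.

    For the OU process the law is Gaussian with known mean and variance,
    so a single draw suffices regardless of the size of the gap.
    """
    decay = math.exp(-theta * gap)
    mean = mu + (x0 - mu) * decay
    var = sigma ** 2 / (2.0 * theta) * (1.0 - decay ** 2)
    return mean + math.sqrt(var) * rng.gauss(0.0, 1.0)


def mean_and_variance(samples):
    """Return the sample mean and unbiased sample variance."""
    n = len(samples)
    m = sum(samples) / n
    v = sum((s - m) ** 2 for s in samples) / (n - 1)
    return m, v


if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical parameters, chosen only for illustration.
    x0, gap, theta, mu, sigma = 1.0, 2.0, 1.0, 0.0, 0.5
    solver = [euler_maruyama_ou(x0, gap, theta, mu, sigma, 100, rng)
              for _ in range(5000)]
    direct = [one_shot_ou(x0, gap, theta, mu, sigma, rng)
              for _ in range(5000)]
    print("solver   (mean, var):", mean_and_variance(solver))
    print("one-shot (mean, var):", mean_and_variance(direct))
```

Both samplers target the same distribution, but the solver takes 100 Gaussian draws per sample while the one-shot sampler takes one. Most SDEs have no closed-form transition law, which is the gap that learning the law directly is meant to fill.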
