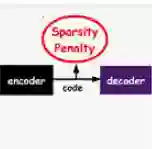

In this work, we explore the intersection of sparse coding theory and deep learning to better understand the feature extraction capabilities of advanced neural network architectures. We begin by introducing a novel class of Deep Sparse Coding (DSC) models and establish a thorough theoretical analysis of their uniqueness and stability properties. By applying iterative algorithms to these DSC models, we derive convergence rates for convolutional neural networks (CNNs) in their ability to extract sparse features, which provides a strong theoretical foundation for the use of CNNs in sparse feature learning tasks. We further extend this convergence analysis to more general neural network architectures, including those with diverse activation functions as well as self-attention and transformer-based models, which broadens the applicability of our findings to a wide range of deep learning methods for deep sparse feature extraction. Inspired by the strong connection between sparse coding and CNNs, we also explore training strategies that encourage neural networks to learn sparser features. Through numerical experiments, we demonstrate the effectiveness of these approaches, providing valuable insights for the design of efficient and interpretable deep learning models.
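To make the link between iterative sparse coding and network layers concrete, here is a minimal sketch. The abstract does not name the iterative algorithm used, so this assumes the classical iterative shrinkage-thresholding algorithm (ISTA) for a single-layer problem, minimizing 0.5 * ||D x - y||^2 + lam * ||x||_1. The names `soft_threshold`, `ista`, `objective`, `D`, `y`, and `lam` are illustrative and do not come from the paper. Each iteration is a linear map followed by an elementwise shrinkage, and the shrinkage can be written with two ReLUs, which is one common way to see why a feed-forward layer resembles one sparse coding step.

```python
import numpy as np


def soft_threshold(v, tau):
    # Elementwise shrinkage: the proximal operator of tau * ||.||_1.
    # Equivalent to relu(v - tau) - relu(-v - tau).
    return np.sign(v) * np.maximum(np.abs(v) - tau, 0.0)


def objective(D, y, x, lam):
    # Lasso objective: least-squares data fit plus l1 sparsity penalty.
    return 0.5 * np.sum((D @ x - y) ** 2) + lam * np.sum(np.abs(x))


def ista(D, y, lam, n_iter=200):
    # Step size 1/L, where L = ||D||_2^2 is the Lipschitz constant
    # of the gradient of the least-squares term.
    L = np.linalg.norm(D, 2) ** 2
    x = np.zeros(D.shape[1])
    for _ in range(n_iter):
        grad = D.T @ (D @ x - y)
        # One "layer": linear update followed by a thresholding nonlinearity.
        x = soft_threshold(x - grad / L, lam / L)
    return x


# Synthetic example (assumed data, for illustration only).
rng = np.random.default_rng(0)
D = rng.standard_normal((30, 60))
D /= np.linalg.norm(D, axis=0)          # unit-norm dictionary columns
x_true = np.zeros(60)
x_true[[3, 17, 42]] = [1.5, -2.0, 1.0]  # 3-sparse ground truth
y = D @ x_true

x_hat = ista(D, y, lam=0.05)
print("objective at zero :", objective(D, y, np.zeros(60), 0.05))
print("objective at x_hat:", objective(D, y, x_hat, 0.05))
print("nonzeros in x_hat :", int(np.count_nonzero(x_hat)))
```

With the step size 1/L, each ISTA iteration does not increase the objective, so the printed objective at `x_hat` is no larger than at the zero vector. This toy covers only a single dictionary; the multi-layer DSC models and the convergence rates described in the abstract are not reproduced here.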
