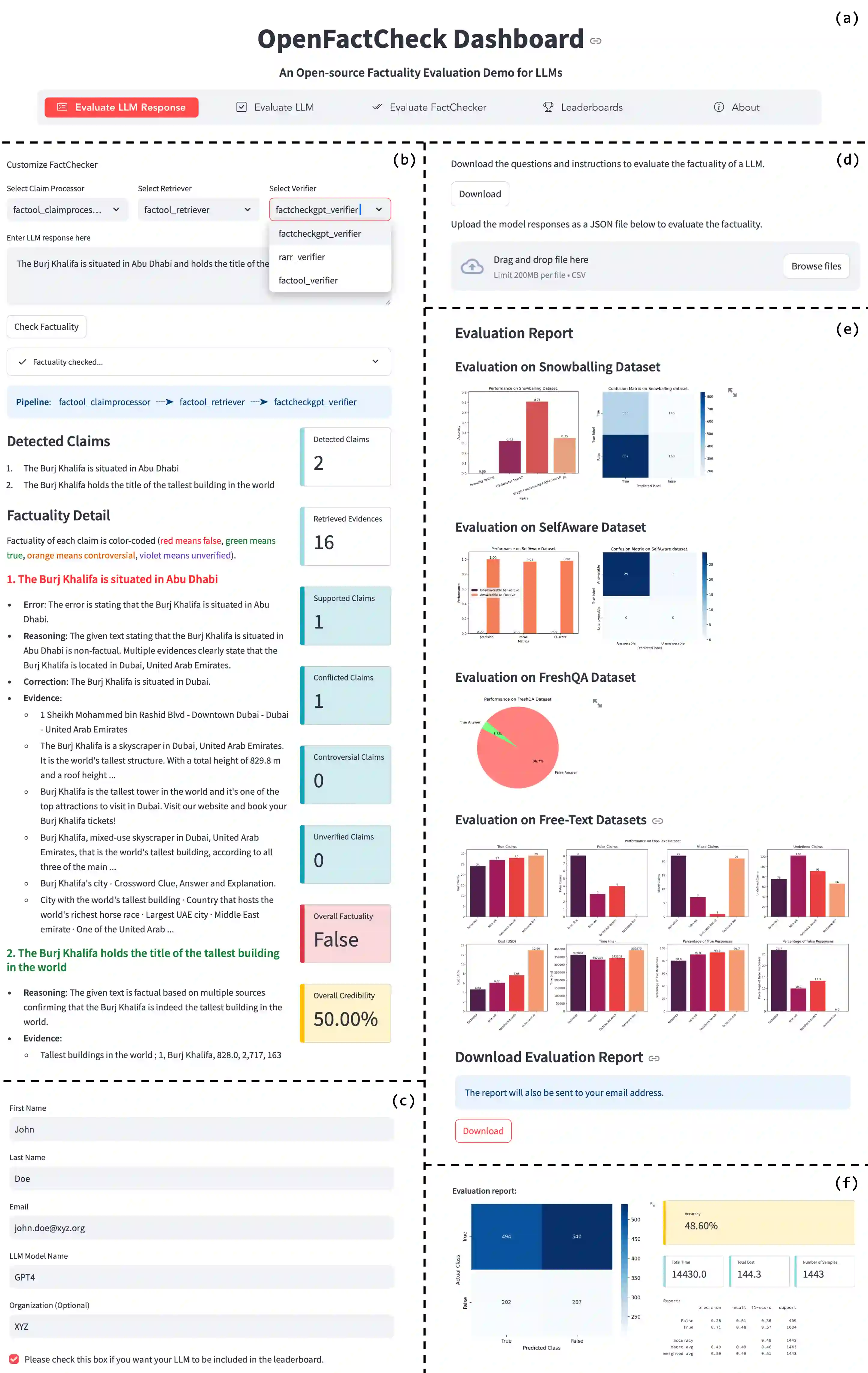

The increased use of large language models (LLMs) across a variety of real-world applications calls for automatic tools to check the factual accuracy of their outputs, as LLMs often hallucinate. This is difficult, as it requires assessing the factuality of free-form, open-domain responses. Although there has been much research on this topic, different papers use different evaluation benchmarks and measures, which makes them hard to compare and hampers future progress. To mitigate these issues, we developed OpenFactCheck, a unified framework with three modules: (i) RESPONSEEVAL, which allows users to easily customize an automatic fact-checking system and to assess the factuality of all claims in an input document using that system; (ii) LLMEVAL, which assesses the overall factuality of an LLM; and (iii) CHECKEREVAL, which evaluates automatic fact-checking systems. OpenFactCheck is open-sourced (https://github.com/mbzuai-nlp/openfactcheck) and publicly released as a Python library (https://pypi.org/project/openfactcheck/) and as a web service (http://app.openfactcheck.com). A video describing the system is available at https://youtu.be/-i9VKL0HleI.
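To make the division of labor among the three modules concrete, the following is a minimal, purely illustrative sketch. It is not the API of the openfactcheck library: every name here (`split_claims`, `lookup_checker`, `response_eval`, `llm_eval`, `checker_eval`, `KNOWN_FACTS`) is hypothetical, the sentence-level claim splitter is a naive stand-in for real claim extraction, and the lookup checker stands in for a real evidence-based fact-checker.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

# Hypothetical toy knowledge base; a real checker would retrieve evidence.
KNOWN_FACTS = {
    "paris is the capital of france",
    "water boils at 100 degrees celsius at sea level",
}

# A checker maps one claim to True (supported) or False (unsupported).
Checker = Callable[[str], bool]


@dataclass
class ClaimVerdict:
    claim: str
    supported: bool


def split_claims(document: str) -> List[str]:
    """Naive claim extraction (assumption): one claim per sentence."""
    return [s.strip() for s in document.split(".") if s.strip()]


def lookup_checker(claim: str) -> bool:
    """Toy checker: a claim is supported only if it appears in KNOWN_FACTS."""
    return claim.lower() in KNOWN_FACTS


def response_eval(document: str, checker: Checker) -> List[ClaimVerdict]:
    """RESPONSEEVAL-style step: judge every claim in one document."""
    return [ClaimVerdict(c, checker(c)) for c in split_claims(document)]


def llm_eval(responses: List[str], checker: Checker) -> float:
    """LLMEVAL-style step: fraction of supported claims over many responses."""
    verdicts = [v for r in responses for v in response_eval(r, checker)]
    if not verdicts:
        return 0.0
    return sum(v.supported for v in verdicts) / len(verdicts)


def checker_eval(checker: Checker, labeled: List[Tuple[str, bool]]) -> float:
    """CHECKEREVAL-style step: accuracy of a checker against gold labels."""
    if not labeled:
        return 0.0
    correct = sum(checker(claim) == gold for claim, gold in labeled)
    return correct / len(labeled)
```

In this sketch the checker is passed in as a plain callable, which mirrors the idea that the fact-checking system is customizable while the evaluation code around it stays the same. The scoring rules (fraction of supported claims, accuracy against gold labels) are assumptions chosen for brevity, not the measures used by OpenFactCheck.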
